What to Include in an AI Interviewer RFP: A Buyer's Guide

Researcher

•

5 min read

Most AI interviewer RFPs are too easy on the vendor.

They ask whether the platform has AI.

Whether it supports video.

Whether it integrates with the ATS.

Whether the dashboard looks polished.

Those questions are fine for a first meeting.

They are not enough to protect you from a bad decision.

Because AI interviewing tools usually do not fail in the demo.

They fail later.

They fail when completion rates come in lower than expected because the experience asked too much of the wrong audience.

They fail when recruiters do not trust the score.

They fail when legal asks how accommodations work.

They fail when procurement learns the "integration" is really a one-way file drop.

They fail when one part of the business wants a different workflow and discovers the product is only configurable if the vendor changes it for them.

That is the frame smart buyers should use.

An AI interviewer is not just another piece of recruiting software. It sits inside selection, compliance, accessibility, and data governance. The EEOC and DOJ have explicitly warned employers that algorithmic decision tools can trigger accommodation obligations under the ADA. New York City's law on automated employment decision tools (AEDTs) requires covered tools to undergo a bias audit before use, along with candidate notices and public disclosure of the audit results. NIST's AI Risk Management Framework treats accountability and transparency as core characteristics of trustworthy AI systems and calls for documentation and ongoing monitoring throughout a system's life.

So the job of an AI interviewer RFP is not to ask, "Does this look innovative?"

It is to ask, "Will this still look responsible after six months of real hiring?"

That is a much better buying question.

1. Start with the candidate, not the demo

One of the most common mistakes in this category is assuming every candidate should go through the same interview motion.

That sounds efficient.

It usually is not.

For many hourly, frontline, blue-collar, and gray-collar roles, a real phone call can be the better starting point. Not because phone is always superior. Because it removes friction.

A candidate does not need to click a link.

They do not need to remember a password.

They do not need to grant camera permissions.

They do not need to find the right device at the right moment.

They just need to answer the phone.

That matters more than a lot of buying teams realize. Pew Research has shown that smartphone dependence is especially common among lower-income Americans. That does not prove every hourly worker wants a phone interview. It does show why link-heavy workflows create more failure points than many white-collar evaluation teams assume.

There is also a plain operational truth here. A scheduled phone interview is easier to complete in the flow of real life. A candidate can take it between shifts, outside work, while running errands, or in a setting where opening a laptop would be awkward or unrealistic. That is one reason phone-based interviewing often makes more sense for labor pools where convenience drives completion.

Video still matters.

For many professional, corporate, and IT roles, video is the better fit. Those candidates are often more likely to be at a desktop, more tolerant of a slightly more involved flow, and more likely to be in jobs where visual review helps. Video can also support stronger identity assurance and make some forms of coaching or suspicious behavior easier to review.

So the real RFP question is not "Do you support phone and video?"

It is this:

Can we use phone where friction kills completion, and video where richer review matters more?

That is the kind of question that separates workflow fit from feature theater.

What to ask vendors

Do you support true phone-call interviewing, not just a mobile web link?

Can interview type vary by role, business unit, geography, or stage?

Can interview length vary by role?

Can the same platform support phone for hourly hiring and video for professional hiring without separate implementations?

2. If the team cannot edit the process, it does not really own the process

A lot of vendors now show the same move in the demo.

"Upload the job description and we'll generate the interview."

That is useful.

It is not the real test.

The real test is what happens next.

Can your team rewrite the questions?

Can they shorten the flow?

Can they change the scorecard?

Can one division use a different rubric from another?

Can they do all that without filing a ticket and waiting on services?

Because if they cannot, then the product is not really a platform.

It is a black box with a setup wizard.

That matters because hiring processes are not static. Roles change. Hiring managers change. Business units want different tradeoffs. Legal wants wording updated. Operations wants shorter screens for one role family and deeper screens for another. If the product cannot flex with those realities, the software ends up dictating the process instead of supporting it.

That is especially important in employment screening. The EEOC's guidance on tests and selection procedures makes clear that when a selection tool disproportionately screens out a protected group, the employer must be able to show that the tool is job-related and consistent with business necessity.

In practice, that means buyers should be able to answer simple questions with confidence:

Why is this question here?

Why is this competency weighted this way?

Why is this workflow right for this role?

If the only answer is "that is how the vendor does it," that is a weak position.

What to ask vendors

Can admins and recruiters edit questions directly?

Can the platform auto-generate a draft from the job description?

Are templates reusable across job families?

Can scorecards vary by role, department, or geography?

Is there version control when questions or scoring logic change?

3. Scoring is where trust is won or lost

This is the area that tells you whether the product will actually be used.

A score can look smart in a dashboard and still fail in the field.

Why?

Because recruiters do not trust mystery scores.

Legal does not trust mystery scores.

Procurement should not trust mystery scores either.

A strong AI interviewer should not just output a number. It should make the evaluation legible. The rubric should be visible. The criteria should make sense. The employer should be able to adjust weighting. Recruiters should be able to override the result and leave context. Changes should be logged.

That is not bureaucracy.

That is what makes the system usable.

NIST's AI RMF is useful here because it frames trustworthy AI around qualities like accountability, transparency, explainability, and managed bias. That is exactly the right lens for AI interviewing. If the tool influences who advances, the buyer needs to understand how the logic works and how changes are governed over time.

The easiest way to stress-test this in an RFP is to ask the vendor to show the score, the rubric behind the score, and the audit trail when someone changes the rubric.

That demo is much more revealing than a glossy dashboard tour.
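To make "legible" concrete, here is a minimal sketch of what a buyer should be able to see behind a score: per-competency weights, each competency's contribution to the result, and an override that cannot be saved without a reason and is logged against the scorecard version. Everything here (`Scorecard`, `Evaluation`, the assumed 1-5 rating scale, the log fields) is a hypothetical model for illustration, not any vendor's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Scorecard:
    """Hypothetical rubric: competency name -> relative weight."""
    version: str
    weights: dict[str, float]


@dataclass
class Evaluation:
    candidate_id: str
    scorecard: Scorecard
    ratings: dict[str, float]  # competency -> rating on an assumed 1-5 scale
    override: float | None = None
    log: list[dict] = field(default_factory=list)

    def breakdown(self) -> dict[str, float]:
        """Each competency's contribution to the score (weights normalized to sum to 1)."""
        total_weight = sum(self.scorecard.weights.values())
        return {
            name: round(weight / total_weight * self.ratings[name], 3)
            for name, weight in self.scorecard.weights.items()
        }

    def model_score(self) -> float:
        """Sum of the per-competency contributions, i.e. a weighted average of ratings."""
        return round(sum(self.breakdown().values()), 3)

    def final_score(self) -> float:
        """A recruiter override, when present, takes precedence over the computed score."""
        return self.override if self.override is not None else self.model_score()

    def apply_override(self, user: str, new_score: float, reason: str) -> None:
        """Record who changed the result, when, why, and under which scorecard version."""
        if not reason.strip():
            raise ValueError("an override must include a reason")
        self.log.append({
            "event": "override",
            "user": user,
            "at": datetime.now(timezone.utc).isoformat(),
            "from": self.final_score(),
            "to": new_score,
            "reason": reason,
            "scorecard_version": self.scorecard.version,
        })
        self.override = new_score


card = Scorecard("v3", {"communication": 2, "reliability": 3, "role_knowledge": 5})
ev = Evaluation("cand-001", card, {"communication": 4, "reliability": 5, "role_knowledge": 3})
print(ev.breakdown(), ev.model_score())
ev.apply_override("recruiter@example.com", 4.2, "Verified certification not covered by the rubric")
print(ev.final_score(), ev.log[0]["reason"])
```

With weights of 2, 3, and 5 and ratings of 4, 5, and 3, the breakdown shows communication contributing 0.8 and reliability and role knowledge 1.5 each, for a score of 3.8. That per-competency view, plus the logged override, is what to ask vendors to show live.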

What to ask vendors

What competencies are being scored?

Can we inspect the rubric behind the score?

Can weighting change by role?

Can recruiters override the result and leave notes?

Can we see which version of the interview and scoring logic was active when a candidate completed it?

4. ATS integration should mean workflow depth, not a PDF attachment

"Integrates with your ATS" is one of the most overused phrases in recruiting tech.

Sometimes it means true bidirectional sync.

Sometimes it means the system uploads a file and calls it a day.

Those are not remotely the same.

A useful AI interviewer should behave like part of the hiring workflow, not a sidecar. Candidate stages should update in both directions. Notes should write back as usable text. Status changes should stay aligned. Recruiter actions in the ATS should be able to affect what happens next in the interview flow.

Why does this matter so much?

Because if recruiters have to reconcile two systems by hand, trust collapses fast. The team stops believing the workflow is real. The ATS becomes messy. Reporting gets weaker. Adoption drops.

This is one of the most expensive misunderstandings in the category because it often looks "close enough" in a demo and becomes painful only after launch.
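One way to pin down "what happens when the two systems conflict?" is to ask whether the vendor compares each field against its last synced value. The sketch below assumes that three-way design: if only one system changed a field, the change propagates; if both changed it to different values, the record is flagged for a person instead of being silently overwritten. The field names, `reconcile`, and `reconcile_record` are hypothetical; real ATS connectors differ, and some simply use last-writer-wins.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SyncResult:
    value: str | None  # value both systems should hold after sync (None if unresolved)
    conflict: bool     # True when a person needs to decide
    source: str        # "ats", "platform", "unchanged", or "conflict"


def reconcile(last_synced: str, ats: str, platform: str) -> SyncResult:
    """Three-way merge of one field (e.g. candidate stage) against its last synced value."""
    ats_changed = ats != last_synced
    platform_changed = platform != last_synced
    if ats_changed and platform_changed and ats != platform:
        # Both systems moved independently: surface it rather than silently overwrite.
        return SyncResult(None, True, "conflict")
    if ats_changed:
        return SyncResult(ats, False, "ats")
    if platform_changed:
        return SyncResult(platform, False, "platform")
    return SyncResult(last_synced, False, "unchanged")


def reconcile_record(
    last_synced: dict[str, str], ats: dict[str, str], platform: dict[str, str]
) -> dict[str, SyncResult]:
    """Apply the three-way merge to every mapped field of one candidate record."""
    return {name: reconcile(last_synced[name], ats[name], platform[name]) for name in last_synced}


result = reconcile_record(
    last_synced={"stage": "phone_screen", "recruiter_owner": "jlee"},
    ats={"stage": "phone_screen", "recruiter_owner": "mpatel"},
    platform={"stage": "interview_complete", "recruiter_owner": "jlee"},
)
for name, r in result.items():
    print(name, r)
```

The design choice worth probing in a demo is the conflict branch: a vendor that always lets one side win will keep the two systems "in sync" while quietly discarding a recruiter's action.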

What to ask vendors

Which fields sync both ways?

Do stage changes update in both directions?

Do notes write back as free text?

Do statuses and dispositions stay aligned?

What happens when the ATS and interview platform conflict?

Can admins control mappings and sync logic?

5. Accommodation handling should live inside the workflow, not in a support article

This is where mature buyers separate themselves from the rest.

Candidates will request accommodations.

Some will need more time.

Some will need a different modality.

Some will want an alternate path.

Some will want to opt out of the default AI flow.

That is not an edge case.

That is part of the product requirement.

The EEOC and DOJ have already said employers should have a process in place to provide reasonable accommodations when using algorithmic decision-making tools, and warned that workers with disabilities can be screened out without proper safeguards.

That should change how buyers write the RFP.

Do not ask, "Are you accessible?"

Ask, "How does it actually work when the candidate needs something different?"

A serious platform should let candidates request an accommodation, route them into another path, preserve the record, and keep the workflow intact. If that process breaks the candidate journey or the ATS sync, the burden moves back onto the employer.

That is not real product maturity.

That is outsourced risk.
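As a sketch of what "keep the workflow intact" can mean in practice, the hypothetical routing below moves a candidate to an alternate interview path, appends an event to the candidate's history instead of overwriting it, and leaves the ATS stage untouched. The accommodation types, path names, and the fallback to manual review are all assumptions for illustration; the point is that routing, recording, and stage continuity happen in one step.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical mapping from accommodation type to an alternate interview path.
ALTERNATE_PATHS = {
    "extra_time": "phone_extended_time",
    "written_format": "written_responses",
    "human_interviewer": "live_recruiter_screen",
    "opt_out_of_ai": "live_recruiter_screen",
}


@dataclass
class Candidate:
    candidate_id: str
    stage: str           # hiring stage as the ATS sees it, e.g. "screening"
    interview_path: str  # which interview flow the candidate is currently on
    history: list[dict] = field(default_factory=list)


def route_accommodation(candidate: Candidate, request_type: str) -> Candidate:
    """Move the candidate to an alternate path, record the change, keep the stage."""
    # Requests with no predefined path go to a person instead of being dropped.
    new_path = ALTERNATE_PATHS.get(request_type, "manual_review")
    candidate.history.append({
        "event": "accommodation_routed",
        "at": datetime.now(timezone.utc).isoformat(),
        "request_type": request_type,
        "from_path": candidate.interview_path,
        "to_path": new_path,
        "stage": candidate.stage,
    })
    candidate.interview_path = new_path
    # Stage is deliberately untouched so the ATS record stays consistent.
    return candidate


c = Candidate("cand-002", stage="screening", interview_path="ai_phone")
route_accommodation(c, "opt_out_of_ai")
print(c.interview_path, c.stage, len(c.history))
```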

What to ask vendors

How does a candidate request an accommodation?

Can candidates be routed into alternate interview paths?

Can timing, format, or modality be changed without breaking the workflow?

How are candidate opt-outs handled?

What gets documented and logged?

6. Accessibility should be part of procurement, not an afterthought

A lot of teams say accessibility matters.

Fewer buy like it matters.

WCAG is the core international standard for web accessibility, and Section508.gov is explicit that accessibility should be built into procurement and requirements-setting for digital products and services.

That is highly relevant here because AI interviewing touches audio, video, time limits, instructions, navigation, captions, assistive technology compatibility, and alternate paths.

If accessibility is not evaluated during procurement, the buyer usually ends up discovering gaps after launch, when fixes are slower and more expensive.

What to ask vendors

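Which WCAG version and conformance level (for example, WCAG 2.1 AA) do you test against?

Do you provide accessibility documentation for diligence, such as a VPAT or Accessibility Conformance Report?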

How do you handle captions, transcripts, keyboard navigation, and screen-reader support where relevant?

How do you support audio-only, video, and alternate formats?

7. Multilingual support should mean a real interview experience, not a translated menu

"Supports multiple languages" sounds good in a slide deck.

It is often much thinner in practice.

The useful question is not how many languages are in the dropdown.

It is whether a candidate can complete the interview naturally in their preferred language and whether the employer can review a standardized output without losing substance.

That is not a fringe issue. The U.S. Census Bureau reported that 22% of people age 5 and older in the United States spoke a language other than English at home during 2017-2021.

For many large employers, especially in distributed and frontline hiring, language friction does not show up as a loud failure. It shows up as lower completion, shorter answers, weaker signal, and more drop-off.

That is why buyers should test the actual experience, not just the claim.

What to ask vendors

Which languages are supported end to end?

Are prompts and follow-ups localized or merely translated?

How do recruiters review results across languages?

Can language settings vary by job, location, or business unit?

How do you evaluate scoring consistency across languages?

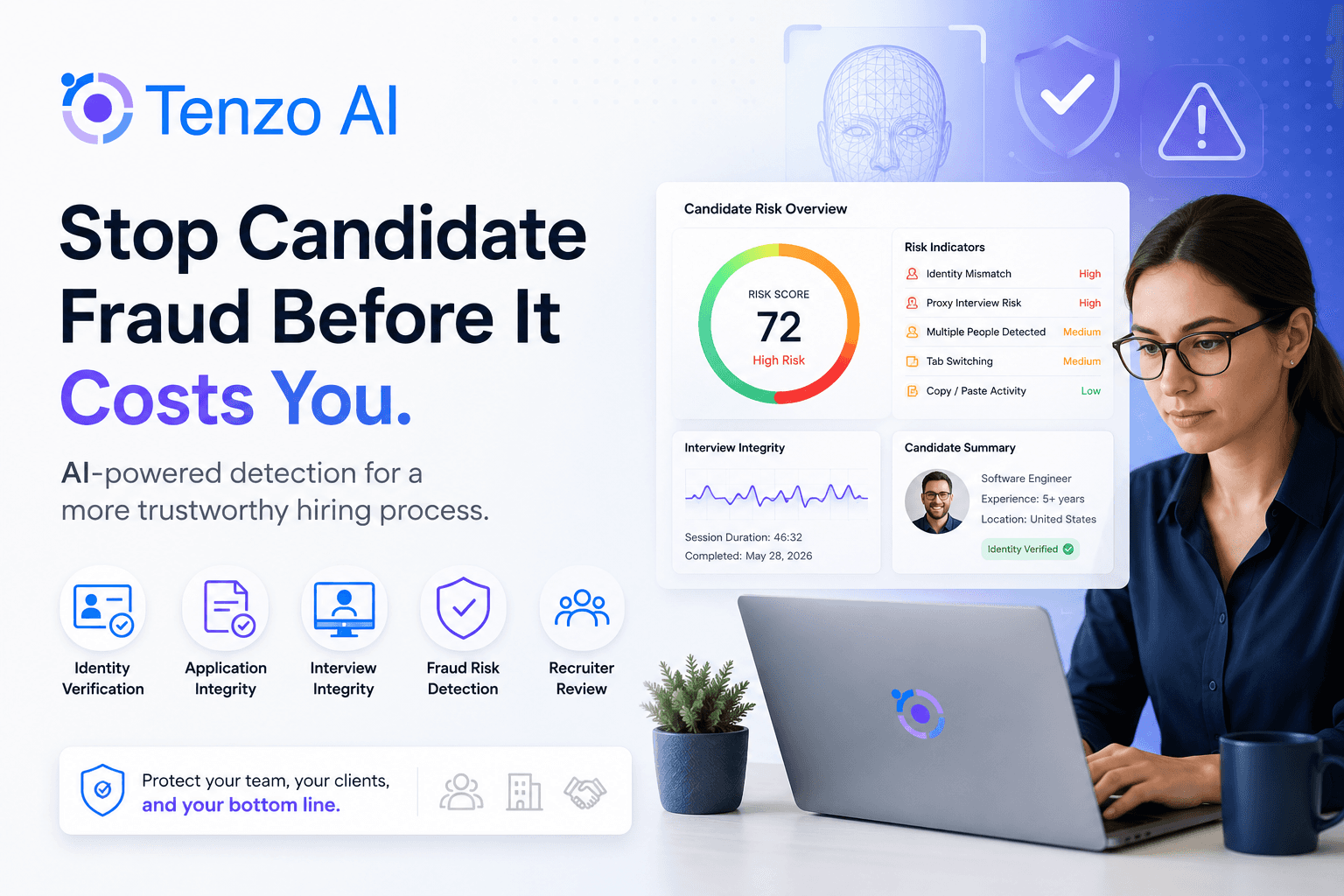

8. Fraud prevention is part of interview quality now

The more useful AI interviewing becomes, the more incentive there is to game it.

That can look like impersonation.

It can look like coached responses.

It can look like answer assistance.

It can look like identity mismatch.

It can look like patterns that only become obvious at scale.

That is why fraud detection and identity verification should not be treated like optional add-ons. The employer cannot trust the assessment if it has weak confidence in who completed it or under what conditions it was completed.

This is especially important for video workflows, where visual review can help surface suspicious behavior. But the principle is broader than video. Buyers should want to know what the platform can detect, what gets flagged, how review works, and what is logged when something looks off.

What to ask vendors

What identity verification options are available?

What suspicious behaviors are flagged?

How are cases escalated for human review?

Can thresholds vary by role or risk level?

What evidence is logged when a case is reviewed?

9. The audit trail matters more than the polish

This is one of the clearest indicators of whether a product is built for enterprise hiring or just for enterprise demos.

When something changes, can anyone reconstruct what happened?

Which version of the interview did the candidate take?

Who changed the rubric?

When did that change go live?

Was the result overridden?

Why was the candidate rerouted?

What accommodation was provided?

NIST's AI RMF treats transparency, accountability, and documentation as foundational for trustworthy AI. That is exactly why audit trails matter in this category. Without traceability, the employer cannot investigate issues, explain outcomes, or govern the system with confidence.
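Answering "which version of the interview did the candidate take?" is straightforward if scorecard versions are stored append-only with an effective timestamp, an author, and a reason. This minimal sketch, with hypothetical class names, looks up the version that was active at the moment a candidate completed the interview.

```python
from __future__ import annotations

from bisect import bisect_right
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScorecardVersion:
    version: str
    effective_from: datetime  # when this version went live (timezone-aware)
    changed_by: str
    reason: str


class ScorecardHistory:
    """Append-only version history for one scorecard (hypothetical model)."""

    def __init__(self) -> None:
        self._versions: list[ScorecardVersion] = []

    def publish(self, v: ScorecardVersion) -> None:
        # Versions are never edited or reordered, only appended.
        if self._versions and v.effective_from <= self._versions[-1].effective_from:
            raise ValueError("new version must go live after the current one")
        self._versions.append(v)

    def active_at(self, when: datetime) -> ScorecardVersion | None:
        """Return the version in effect at `when`, or None if none was live yet."""
        starts = [v.effective_from for v in self._versions]
        i = bisect_right(starts, when)
        return self._versions[i - 1] if i > 0 else None


history = ScorecardHistory()
history.publish(ScorecardVersion("v1", datetime(2024, 1, 1, tzinfo=timezone.utc), "admin_a", "Initial rubric"))
history.publish(ScorecardVersion("v2", datetime(2024, 3, 1, tzinfo=timezone.utc), "admin_b", "Raised weight on reliability"))

completed_at = datetime(2024, 2, 15, 14, 30, tzinfo=timezone.utc)
active = history.active_at(completed_at)
print(active.version, active.changed_by)
```

Refusing out-of-order or in-place edits is the property to ask about: if a vendor lets someone rewrite an old version, the trail can no longer explain past outcomes.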

What to ask vendors

Is there version history for questions, templates, and scorecards?

Are overrides logged with user, timestamp, and reason?

Can audit logs be exported?

Can testing be separated from production?

Are workflow and scoring changes traceable over time?

10. Bias auditing should not end with a one-time report

Many vendors will say they do bias audits.

That is a good start.

It is not the whole answer.

New York City's AEDT framework is useful because it gives buyers a baseline. Covered tools need an independent bias audit conducted no more than one year before use, along with candidate notices and public posting of the audit results.

But the smarter buying question is what happens between formal audits.

Workflows change.

Questions change.

Candidate pools change.

Roles change.

Thresholds change.

That is why buyers should look for two layers of governance:

formal bias audits, and ongoing fairness monitoring in between.

NIST makes the logic clear. AI systems should be measured, managed, and monitored over time because risk is not static after deployment.

So do not stop at "Do you run a bias audit?"

Ask how drift is detected.

Ask what is monitored after changes.

Ask what happens when the system starts behaving differently than expected.

That question tells you a lot about how serious the vendor really is.
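For a sense of what ongoing monitoring can compute, NYC's AEDT rules center bias audits on selection rates and impact ratios: a category's selection rate divided by the selection rate of the most-selected category. The sketch below recomputes those ratios for a recent window and flags categories that fall below 0.8 (the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures) or that dropped more than a hypothetical 0.10 from the audit baseline. Our understanding is that NYC requires calculating and publishing impact ratios rather than setting a pass/fail cutoff, so both thresholds here are illustrative assumptions, and small samples make these ratios noisy.

```python
from __future__ import annotations


def impact_ratios(counts: dict[str, tuple[int, int]]) -> dict[str, float]:
    """counts maps category -> (selected, total). Ratio = category rate / highest rate."""
    rates = {cat: sel / total for cat, (sel, total) in counts.items() if total > 0}
    top = max(rates.values(), default=0.0)
    if top == 0:
        return {}  # nobody selected in this window: ratios are undefined
    return {cat: rate / top for cat, rate in rates.items()}


def flag_drift(
    baseline: dict[str, tuple[int, int]],
    current: dict[str, tuple[int, int]],
    floor: float = 0.8,      # four-fifths rule of thumb (illustrative)
    max_drop: float = 0.10,  # hypothetical tolerance versus the audit baseline
) -> dict[str, list[str]]:
    """Return category -> reasons for every category that needs deeper review."""
    base = impact_ratios(baseline)
    now = impact_ratios(current)
    flags: dict[str, list[str]] = {}
    for cat, ratio in now.items():
        reasons = []
        if ratio < floor:
            reasons.append(f"impact ratio {ratio:.2f} is below {floor}")
        if cat in base and base[cat] - ratio > max_drop:
            reasons.append(f"impact ratio fell {base[cat] - ratio:.2f} since the baseline audit")
        if reasons:
            flags[cat] = reasons
    return flags


baseline = {"group_a": (50, 100), "group_b": (45, 100)}  # counts from the last formal audit
current = {"group_a": (60, 100), "group_b": (42, 100)}   # counts since a workflow change
for cat, reasons in flag_drift(baseline, current).items():
    print(cat, reasons)
```

In the example, group_b's ratio moves from 0.90 at baseline to about 0.70 in the current window, so it trips both checks. A vendor's real monitoring should also explain how it handles small categories and the intersectional categories NYC's rules ask audits to cover.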

What to ask vendors

How often are formal bias audits performed?

What fairness monitoring happens between audits?

What triggers deeper review?

How is drift detected after workflow or scoring changes?

What remediation steps exist when issues are found?

11. The best RFPs are opinionated about what usually breaks

That is really the entire game.

Weak RFPs ask whether a vendor has capabilities.

Strong RFPs ask whether a vendor has solved the predictable failure modes of the category.

Can the candidate actually complete the interview without avoidable friction?

Can the employer shape the workflow to fit the role?

Can the score be understood, challenged, and governed?

Can accommodations be handled cleanly?

Can the ATS stay accurate?

Can suspicious behavior be flagged?

Can someone reconstruct what happened later?

That is what a strong RFP should teach a team to care about.

Because once buyers start asking those questions, a lot of category noise falls away.

A better demo request

By the time you get to the demo, do not ask for a generic walkthrough.

Ask vendors to prove the product where these tools usually fail:

Show an hourly workflow that starts with a real phone call

Show a professional workflow that uses video

Show how questions are generated from a job description, then edited by the customer

Show the scorecard, weighting, and override path

Show what happens when a candidate requests an accommodation

Show the ATS record before and after notes, stages, and statuses sync

Show how suspicious activity is flagged

Show the audit trail after a question or scorecard changes

Show how one company can run different workflows for different parts of the business

That is not a tougher buying process for the sake of it.

It is just a smarter one.

Final thought

The AI interviewer market is full of products that look impressive when evaluated as software.

Far fewer look impressive when evaluated as hiring infrastructure.

That is the shift a strong RFP should force.

The right platform is not the one with the flashiest AI story.

It is the one that can survive real hiring volume, real compliance scrutiny, real operational complexity, and real skepticism from the people who have to live with it after the contract is signed.

If your RFP teaches your team to evaluate from that lens, you will make better decisions.

And if you want a reference point while pressure-testing those requirements, Tenzo is one vendor worth including in the process. The right way to evaluate Tenzo is the same way you should evaluate anyone else in the category: ask for proof of phone and video flexibility, question editing, scoring control, multilingual support, fraud prevention, deep ATS writeback, accommodation handling, and a real audit trail in one end-to-end scenario.

FAQ

What should an AI interviewer RFP include?

At a minimum, it should cover interview modality, workflow configurability, transparent scoring, ATS synchronization, accommodation handling, accessibility, multilingual support, fraud controls, auditability, and recurring bias monitoring. Those are the pressure points where legal risk, operational friction, and adoption problems usually appear first.

Are phone interviews or video interviews better?

Neither is universally better. Phone can reduce friction and work especially well for high-volume or frontline roles where simplicity drives completion. Video can be better for professional roles where richer review and stronger identity confidence matter more.

Why does scoring transparency matter so much?

Because once a tool influences screening or ranking, the employer needs to understand what is being measured, whether it is job-related, and how changes are governed. If nobody can explain the score, trust usually collapses after rollout.

How often should AI interviewers be audited for bias?

The legal minimum depends on where and how the tool is used. In New York City, covered AEDTs require an independent bias audit conducted no more than one year before use, plus specific notices and disclosures. Many buyers should expect more frequent fairness monitoring than that, especially after workflow changes or in high-volume hiring.