
AI Interviewer RFP: 9 Requirements Smart Workday Teams Put in Writing

Researcher



Most AI interviewer RFPs are too easy on vendors.

They ask whether the product has AI. Whether it does video. Whether it integrates with Workday. Whether it can score candidates.

That is not enough.

In a demo, almost every vendor looks modern. The interview flow is clean. The dashboard is polished. The integration slide says "Workday." Everyone leaves feeling good.

Then rollout starts.

And that is when the real questions show up.

Can recruiters stay inside Workday, or do they end up living in two systems? Can different parts of the business run different interview motions without turning every change into a ticket? Can anyone explain how a score was produced, what changed, and who changed it? Can the process handle accommodations, opt-outs, suspicious interviews, and different workforce needs without breaking?

That is what an AI interviewer RFP for Workday should be built to uncover.

Because in a Workday environment, the interview layer cannot just look good in isolation. It has to fit into the system that runs the hiring process. Workday's ATS guide frames recruiting technology around centralized candidate data and consistent workflows. Its talent acquisition page emphasizes candidate experience, scale, and reporting. That is the standard your RFP should reflect.

So do not write this RFP like a feature checklist.

Write it like a stress test.

1. Make Workday the center of gravity

A shallow ATS integration is one of the fastest ways to turn automation into admin.

If interview outputs only live in the vendor UI, recruiters still end up reviewing results in one place and updating stages, statuses, and notes in another. That means more duplicate work, messier reporting, and lower trust in the process.

This is the first thing your RFP should force a vendor to prove. Not "Do you integrate with Workday?" Ask what actually gets read, what gets written back, and whether recruiters can keep working from inside the ATS.

Put this in the RFP:

What fields can you read from Workday?

What fields can you write back?

Can you update candidate stages automatically?

Can you update candidate statuses automatically?

Can you write recruiter notes back as real text, not just as attachments?

How do you handle sync failures, retries, and conflict resolution?

Can mappings differ by business unit, geography, or requisition type?

A buyer should come away knowing whether the platform becomes part of the Workday workflow or just sits next to it.
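
One way to make the sync questions concrete is to ask the vendor to walk through something like the sketch below. It is a minimal illustration, not Workday's API or any vendor's implementation: `SyncError`, `send`, `STAGE_MAPPINGS`, and the stage names are all hypothetical stand-ins. It shows a write-back that retries with backoff, surfaces the failure after the last attempt, and uses mappings that differ by business unit.

```python
import time
from typing import Callable


class SyncError(Exception):
    """Raised by the (hypothetical) write-back call when a sync attempt fails."""


# Hypothetical stage mappings that differ by business unit.
STAGE_MAPPINGS = {
    "retail": {"passed": "Phone Screen Complete", "failed": "Not Selected"},
    "corporate": {"passed": "Hiring Manager Review", "failed": "Not Selected"},
}


def build_payload(candidate_id: str, business_unit: str, outcome: str, note: str) -> dict:
    """Translate an interview outcome into the fields written back to the ATS."""
    return {
        "candidate_id": candidate_id,
        "stage": STAGE_MAPPINGS[business_unit][outcome],
        "recruiter_note": note,  # real text, not an attachment
    }


def write_back_with_retry(
    send: Callable[[dict], None],
    payload: dict,
    max_attempts: int = 3,
    base_delay: float = 0.0,
) -> int:
    """Push one update via `send`; return how many attempts it took."""
    for attempt in range(1, max_attempts + 1):
        try:
            send(payload)
            return attempt
        except SyncError:
            if attempt == max_attempts:
                raise  # surface the failure instead of dropping the update
            time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    raise ValueError("max_attempts must be at least 1")
```

The design point to probe is the last attempt: a failed write-back should land somewhere a person will see it, not disappear.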

2. Require both real phone-call interviews and video, then choose by workforce

This is where a lot of buyers get too generic.

They treat "AI interview" like there is one obvious format. Usually they mean a browser-based video flow.

That is too narrow.

For many frontline, hourly, blue-collar, and gray-collar roles, a real phone call is often the better experience. Not a link sent to a phone. Not a mobile browser flow. A scheduled call to the candidate's number.

Why does that matter? Because a phone call removes a whole class of friction. No browser to open. No app to download. No camera permission to fix. No login to remember. No assumption that the candidate is sitting at a desktop.

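That is not just intuition. Pew found that lower-income Americans are still far more likely than higher-income Americans to be smartphone-dependent, meaning they go online mainly through a phone rather than a home broadband connection. For many high-volume hiring populations, reducing clicks is not a UX detail. It is part of reach and completion.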

Video matters too, just for different reasons. For many professional and IT roles, the extra step of joining a video interview is usually acceptable. Those candidates are more likely to be in a desktop workflow, and employers may want the additional scrutiny video can provide when reviewing suspicious behavior, outside assistance, or possible AI use during the interview.

The wrong question is "phone or video?"

The better question is whether the business can choose the right mode by role.

Put this in the RFP:

Do you support real phone-call interviews?

Do you support video interviews?

Can interview mode be configured by role family, business unit, or geography?

Can interview length vary by role?

What fallback path exists when a candidate cannot or should not use video?
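
Choosing the mode by role can be sketched as plain configuration. The example below is an assumption-laden illustration: `InterviewConfig`, `CONFIG_BY_ROLE_FAMILY`, the role family names, and the minute limits are all made up for this sketch, not taken from any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterviewConfig:
    mode: str           # "phone" or "video"
    max_minutes: int    # interview length can vary by role
    fallback_mode: str  # used when the default mode is not appropriate


# Hypothetical per-role-family settings.
CONFIG_BY_ROLE_FAMILY = {
    "frontline": InterviewConfig(mode="phone", max_minutes=10, fallback_mode="phone"),
    "professional": InterviewConfig(mode="video", max_minutes=25, fallback_mode="phone"),
}


def select_mode(role_family: str, candidate_can_use_video: bool = True) -> str:
    """Return the interview mode for a role, falling back when video is not an option."""
    config = CONFIG_BY_ROLE_FAMILY[role_family]
    if config.mode == "video" and not candidate_can_use_video:
        return config.fallback_mode
    return config.mode
```

If a vendor cannot express something this simple without a support ticket, that tells you how configurable the platform really is.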

3. Treat question generation like a governance issue

Most vendors can generate interview questions from a job description.

That is useful. It is also the easy part.

The harder question is what happens next.

If AI can generate questions but the employer cannot easily review, edit, approve, version, and reuse them, then the product is solving for speed without solving for control.

That matters because good interviewing is not just fast. It is structured. OPM's structured interview guide makes the point clearly: interview questions should tie back to job-relevant competencies and be realistic, clear, and consistent enough to support fair comparison.

So do not stop at "Can you auto-generate questions?" Ask whether the platform helps you move faster without making the process sloppier.

Put this in the RFP:

Can the platform generate questions from a job description?

Can teams start from preset templates?

Can authorized users edit questions before launch?

Can required questions be locked for certain workflows?

Can different parts of the organization use different question sets?

Is every change versioned?

Can you see who changed a question and when?

Question generation is easy to demo.

Question governance is what matters after go-live.
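
Here is a minimal sketch of what "versioned, with who and when" could look like in data terms. The class and field names (`QuestionSet`, `QuestionVersion`, `locked`, `edited_by`) are hypothetical, chosen only to illustrate the requirement: every save appends a new version, and locked questions cannot be dropped.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class QuestionVersion:
    version: int
    questions: tuple
    edited_by: str
    edited_at: datetime


@dataclass
class QuestionSet:
    name: str
    locked: frozenset = frozenset()              # questions that must always be present
    history: list = field(default_factory=list)  # append-only list of versions

    def save(self, questions, edited_by: str) -> QuestionVersion:
        """Record a new version; refuse edits that remove a locked question."""
        missing = self.locked - set(questions)
        if missing:
            raise ValueError(f"locked questions removed: {sorted(missing)}")
        version = QuestionVersion(
            version=len(self.history) + 1,
            questions=tuple(questions),
            edited_by=edited_by,
            edited_at=datetime.now(timezone.utc),
        )
        self.history.append(version)
        return version

    def current(self) -> QuestionVersion:
        """Return the most recently saved version."""
        return self.history[-1]
```

Nothing is overwritten, so "who changed a question and when" is a lookup, not an investigation.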

4. Make scoring explainable before it is impressive

This is where trust usually breaks.

Not in the first vendor meeting. In the next one, when someone from procurement, legal, talent operations, or IT asks a very normal question: "Can someone explain how this score was produced?"

If the answer is vague, the buyer should slow down.

The NIST AI RMF emphasizes documentation, transparency, and accountability as part of managing AI risk over time. That is exactly why scoring needs to be inspectable, adjustable, and reconstructable.

The real issue is not whether the system can output a score. It is whether the employer can understand the rubric, change the weights, separate AI observations from final human judgment, and reconstruct exactly what happened later if the process is challenged.

Put this in the RFP:

What is being scored?

What rubric is being used?

Can the employer edit the rubric?

Can weights vary by role?

Can thresholds vary by workflow?

Are AI observations separated from the final hiring decision?

Are overrides logged?

Can the organization reconstruct which scorecard version was live for a given candidate?

If the employer cannot inspect, adjust, and reconstruct scoring, the system is not creating confidence. It is creating dependency.
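
To show what "inspect, adjust, and reconstruct" might mean in practice, here is a small sketch under stated assumptions. `Rubric`, `Scorecard`, the competency names, and the weighted-average formula are illustrative choices, not a description of how any vendor scores candidates. The AI score and the human decision are stored separately, the rubric version is pinned to the scorecard, and every override is logged.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Rubric:
    version: str
    weights: dict          # competency -> weight, editable per role
    pass_threshold: float  # can vary by workflow


def weighted_score(rubric: Rubric, ratings: dict) -> float:
    """Weighted average of per-competency ratings."""
    total_weight = sum(rubric.weights.values())
    return sum(w * ratings[c] for c, w in rubric.weights.items()) / total_weight


@dataclass
class Scorecard:
    candidate_id: str
    rubric_version: str                   # which rubric was live for this candidate
    ai_score: float                       # AI observation, never overwritten
    final_decision: Optional[str] = None  # human judgment, stored separately
    overrides: list = field(default_factory=list)

    def override(self, decision: str, by: str, reason: str) -> None:
        """Set the human decision and log who made it, when, and why."""
        self.overrides.append({
            "from": self.final_decision,
            "to": decision,
            "by": by,
            "reason": reason,
            "at": datetime.now(timezone.utc),
        })
        self.final_decision = decision
```

For example, with weights of 2 for communication and 3 for reliability, ratings of 4 and 3 give (2×4 + 3×3) / 5 = 3.4, and anyone can check that arithmetic by hand.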

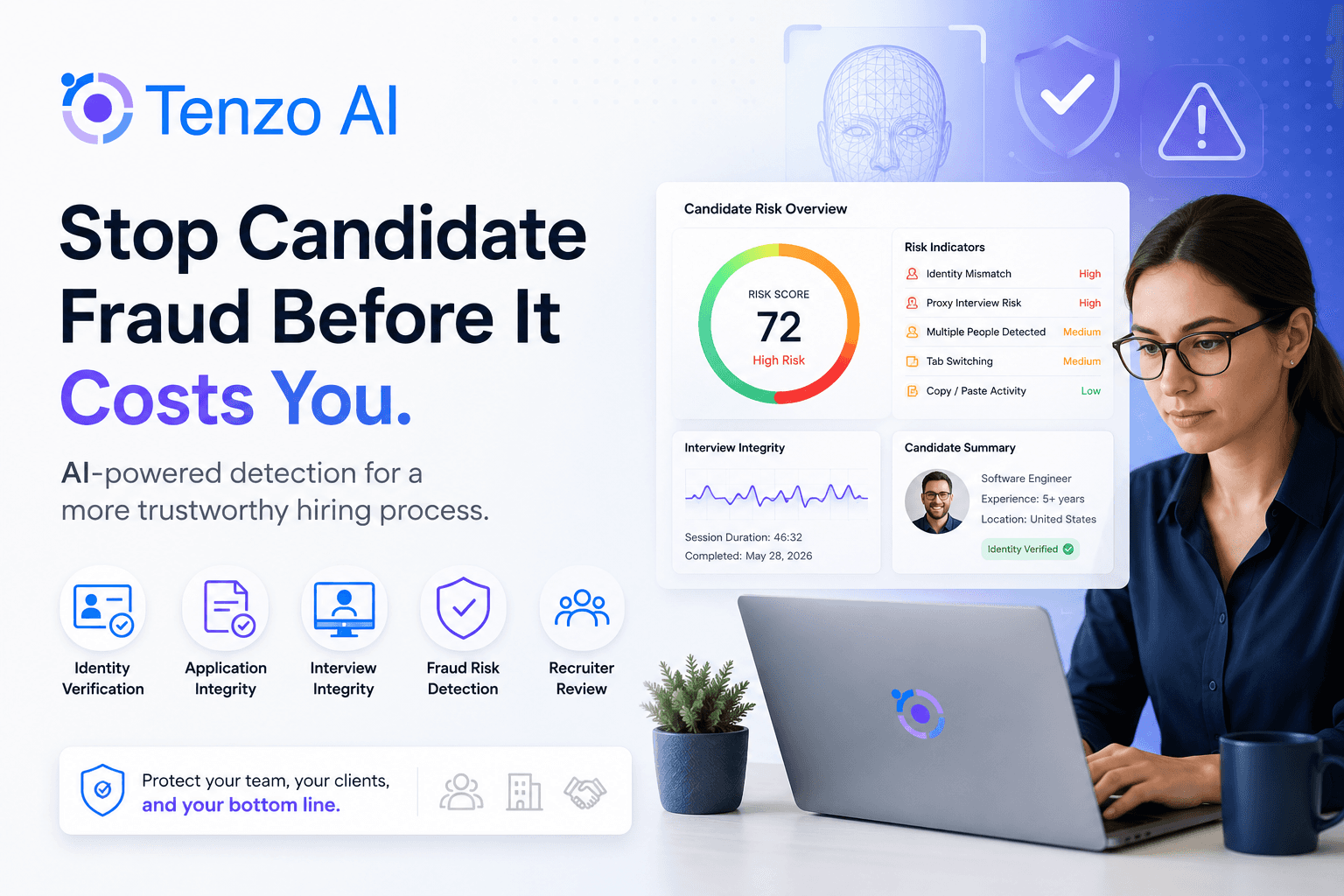

5. Put fraud prevention in the main body of the RFP

Fraud is not a side topic anymore.

Once interviews become remote, asynchronous, or high-volume, screening integrity becomes part of the product. The buyer is not only asking whether the platform can run interviews at scale. The buyer is asking whether the platform can help them trust what they are seeing.

That is exactly the kind of risk the NIST framework says organizations should manage through monitoring and governance, not blind reliance on outputs.

So do not bury this in a generic security appendix.

Put this in the RFP:

Do you support identity verification?

Can you flag impersonation risk?

Can you detect likely duplicate applicants?

What suspicious session, device, or telephony signals can you surface?

What can you detect during video interviews that may suggest cheating or outside assistance?

What does the recruiter review workflow look like for flagged interviews?

This is one of the clearest ways to separate enterprise-ready platforms from products that mostly optimize for first impressions.
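
As one small illustration of a duplicate-applicant signal, the sketch below groups applicants whose normalized email or phone number match. It is deliberately simple and rests on assumptions: the field names (`id`, `email`, `phone`) are hypothetical, and comparing only the last 10 digits of a phone number is a simplification that suits some numbering plans and not others. A match is a reason for recruiter review, not proof of fraud.

```python
import re
from collections import defaultdict


def normalize_email(email: str) -> str:
    """Lowercase the address and drop any "+tag" suffix in the local part."""
    local, _, domain = email.strip().lower().partition("@")
    local = local.split("+", 1)[0]
    return f"{local}@{domain}"


def normalize_phone(phone: str) -> str:
    """Keep digits only, then compare on the last 10 (an assumption, see above)."""
    digits = re.sub(r"\D", "", phone)
    return digits[-10:]


def find_likely_duplicates(applicants: list) -> list:
    """Return sorted tuples of applicant ids that share an email or a phone number."""
    groups = defaultdict(set)
    for a in applicants:
        groups[("email", normalize_email(a["email"]))].add(a["id"])
        groups[("phone", normalize_phone(a["phone"]))].add(a["id"])
    return sorted({tuple(sorted(ids)) for ids in groups.values() if len(ids) > 1})
```

Ask vendors which signals they actually use, and what the recruiter sees when one fires.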

6. Define candidate experience as access, not polish

"Candidate experience" sounds great in every demo and means very little unless the buyer defines it.

A better framing is candidate access.

Can the process work for the people you actually hire, in the situations they are actually in?

That is where native-language support matters. That is where phone versus video matters. That is where interview length matters. That is where different workflows for different talent populations matter.

Workday's talent acquisition materials talk about candidate experience and high-volume recruiting efficiency. That is a useful benchmark. The interview layer should reduce friction for the workforce you are targeting, not just look polished in a demo.

Put this in the RFP:

Can candidates complete interviews in their native language?

Are prompts and instructions localized?

Can interview length vary by role type?

Can the business run different workflows for different talent populations?

Are there simple fallback paths when the default experience is not the right one?

7. Build accommodations and opt-outs into the real workflow

This should not be treated like a side case.

It is one of the best tests of whether the product was designed for real hiring environments.

The EEOC's guidance is clear that applicants may need a reasonable accommodation for some aspect of the hiring process, and employers often need advance notice to provide interpreters, alternative formats, or timing adjustments.

That means accommodation handling should not live outside the workflow. It should be part of it. Same goes for candidate opt-outs and alternate interview paths.

Put this in the RFP:

How does a candidate request an accommodation?

Where does that request go?

What alternative paths are available?

How are candidate opt-outs handled?

What happens in Workday if a candidate moves to an alternative path?

Is that exception handled inside the workflow or outside it?

8. Ask for ongoing bias monitoring, not just a once-a-year PDF

This is another place where buyers often accept too little.

A vendor says it has completed a bias audit. Fine. Now ask what happens between audits.

NYC's AEDT FAQ explains that the city's law requires a bias audit before covered tools are used and requires notice to covered candidates. That is an important floor. It is not the same thing as ongoing governance.

The NIST framework is built around continued measurement, management, and documentation over time. That is the stronger buying standard.

Put this in the RFP:

Provide the latest bias audit, where applicable

Explain what internal monitoring happens monthly or quarterly

Explain how outcomes are reviewed by role and workflow stage

Explain what thresholds trigger investigation or remediation

Explain whether prompt, rubric, and scoring changes are tracked over time

That is how you separate compliance theater from an actual governance process.
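
A threshold that triggers investigation can be as simple as the sketch below. It computes an impact ratio, meaning each group's selection rate divided by the highest group's selection rate, and flags groups that fall under a cutoff. The 0.8 default mirrors the four-fifths rule of thumb from U.S. employment guidelines; treat it as an assumption for illustration, not a legal standard for your situation. The function names and group labels are hypothetical.

```python
def selection_rates(outcomes: dict) -> dict:
    """outcomes maps group -> (selected, total); returns group -> selection rate."""
    return {g: s / t for g, (s, t) in outcomes.items() if t > 0}


def impact_ratios(outcomes: dict) -> dict:
    """Each group's selection rate relative to the highest-rate group."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}  # nobody selected (or no data): nothing to compare
    return {g: r / best for g, r in rates.items()}


def groups_to_investigate(outcomes: dict, threshold: float = 0.8) -> list:
    """Groups whose impact ratio falls below the threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)
```

Run monthly or quarterly by role and workflow stage, a check like this is what "internal monitoring" should mean in a vendor's answer. Small samples make these ratios noisy, so a flag is a prompt to look closer, not a verdict.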

9. Treat the audit trail like a buying requirement

This may be the most important requirement in the entire RFP.

Because once you strip away the category language, what enterprise buyers are really purchasing is not "AI interviews."

They are purchasing a controlled hiring process.

And controlled processes leave traces.

A serious platform should let the buyer reconstruct the full story:

What questions were asked

Which template was used

Which version was live

Who edited the workflow

What score was generated

Whether anyone changed it

When Workday was updated

Whether an accommodation was requested

Whether the candidate opted out

Whether an alternative path was used

That is what turns an AI interview from a slick front end into something an enterprise can actually defend.
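
Reconstructing "the full story" assumes the platform records events in a form that can be replayed. The sketch below is one hypothetical shape for that: `AuditEvent`, `AuditLog`, and the action names are illustrative, not any vendor's schema. Events are only ever appended, and a candidate's history is read back in time order.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    candidate_id: str
    at: datetime
    actor: str   # a user, or "system" for automated steps
    action: str  # e.g. "score_generated", "score_overridden", "workday_updated"
    detail: str


class AuditLog:
    def __init__(self) -> None:
        self._events = []  # append-only; events are never edited or removed

    def record(self, candidate_id: str, actor: str, action: str, detail: str,
               at: Optional[datetime] = None) -> AuditEvent:
        """Append one event, timestamped now unless `at` is given."""
        event = AuditEvent(candidate_id, at or datetime.now(timezone.utc), actor, action, detail)
        self._events.append(event)
        return event

    def story(self, candidate_id: str) -> list:
        """All events for one candidate, oldest first."""
        matching = (e for e in self._events if e.candidate_id == candidate_id)
        return sorted(matching, key=lambda e: e.at)
```

In a live demo, ask the vendor to produce the equivalent of `story()` for a single candidate and see how many of the items above actually appear.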

What finalists should have to prove live

By the time a vendor reaches the final round, stop accepting slides for the hard parts.

Make them prove these live:

A real Workday write-back that updates stage, status, and recruiter notes

A phone workflow for one role and a video workflow for another

A question set generated from a job description, then edited and versioned

A score override with a visible audit trail

A multilingual interview

An accommodation or opt-out path that stays inside the workflow

A flagged interview review for identity or cheating concerns

That is where real products separate themselves from polished positioning.

Final thought

The best AI interviewer RFPs are not built to compare who has the prettiest demo.

They are built to reveal who can actually support enterprise hiring inside a Workday environment.

That means pushing hardest on the areas that most directly affect trust and rollout: deep ATS write-backs, real phone-call interviews and video, question governance, transparent scorecards, fraud prevention, native-language support, accommodations, opt-outs, ongoing bias monitoring, and full audit history.

Those are not random features.

They are the places where this category stops being interesting and starts being operational.

A Note on Tenzo

If your RFP prioritizes those requirements, Tenzo AI is one vendor worth evaluating.

Not because this category needs another flashy pitch. Because those are the areas that matter most when buyers want an AI interviewer that is configurable, auditable, strong on screening integrity, and practical across both frontline and professional hiring workflows.

If you want to see how the #1 AI interviewer solves these challenges, pick a time to talk with our team.