AI Interviewer RFP: What Staffing Firms Should Include Before They Buy

Researcher

Most AI interviewer RFPs focus on the wrong thing.

They focus on whether the platform can ask questions, generate a score, and push out a summary.

That is not the hard part.

The hard part is figuring out whether the product will still work once it is live across different clients, recruiters, branches, job types, compliance requirements, and candidate populations.

That is where weak evaluations get exposed.

The demo looked clean. The AI sounded polished. The score looked precise. Then rollout started, and the real issues showed up. Completion dropped for hourly roles. The ATS "integration" turned out to be an attachment dump. A client wanted a different workflow and every small change needed vendor help. Legal asked about audit trails, notices, accommodations, and retention. Suddenly the simple tool was not simple at all.

A good RFP should catch those issues before they become expensive.

If you are evaluating vendors now, it helps to think less like a software buyer and more like an operator. The question is not whether the platform can conduct an interview. The question is whether it can handle staffing reality without creating more work, more risk, or more drop-off.

What this guide covers

How to choose between phone and video interviewing

What transparent scoring actually looks like

Why configurability matters more than clever demos

What a real ATS integration should do

How to think about governance, accommodations, language support, and fraud controls

1. Pick the interview format by role, not by vendor preference

One of the easiest mistakes buyers make is assuming there should be one interview format for every role.

There should not be.

A warehouse associate, a traveling field technician, a customer support rep, and a software engineer are not starting from the same place. They are not using the same devices. They are not sitting in the same environments. And they are not equally likely to stop what they are doing, click a link, and complete a browser-based interview.

That is why staffing firms should insist on both true phone-call interviewing and video interviewing.

For many blue-collar and gray-collar roles, phone is often the better first screen. The reason is simple. It removes failure points. The candidate does not need to open a browser, grant camera permissions, remember to come back later, or sit down at a laptop. If they can answer a phone, they can complete the interview.

That matters in staffing because small amounts of friction quickly turn into missed screens, recruiter follow-up, and slower fills.

Phone interviews also fit how a lot of hourly hiring actually works. A candidate can take the call between shifts, during a break, or while moving through their day. They do not need a perfectly staged setup. They just need to be reachable.

Video still matters. It just matters for different reasons.

For many white-collar, professional, and IT roles, candidates are more likely to be comfortable with a link-based experience and more likely to complete the interview on a laptop. Video can also be more useful when the hiring team wants a richer review experience or stronger controls around coaching, impersonation, or AI-assisted answering.

The point is not that one format is better overall. The point is that a serious platform should let you choose the right format by role, client, and workflow.
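As a rough illustration, the role-by-role choice can be written down as configuration rather than left to a vendor default. The sketch below is in Python. The role families, the client name, and the order of precedence (candidate request first, then client rule, then role default) are all assumptions made for the example, not any vendor's actual behavior.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical defaults by role family, for illustration only.
DEFAULT_FORMAT_BY_ROLE_FAMILY = {
    "warehouse": "phone",
    "field_technician": "phone",
    "customer_support": "phone",
    "software_engineering": "video",
}


@dataclass(frozen=True)
class InterviewRequest:
    role_family: str
    client: str
    # Set when the candidate asks for a specific format (assumed field).
    candidate_requested_format: Optional[str] = None


def choose_format(request, client_overrides, fallback="phone"):
    """Pick a format: candidate request, then client rule, then role default."""
    if request.candidate_requested_format:
        return request.candidate_requested_format
    client_rule = client_overrides.get((request.client, request.role_family))
    if client_rule:
        return client_rule
    return DEFAULT_FORMAT_BY_ROLE_FAMILY.get(request.role_family, fallback)


# One client wants video for support roles; everyone else gets the default.
overrides = {("acme", "customer_support"): "video"}
warehouse_format = choose_format(InterviewRequest("warehouse", "acme"), overrides)
support_format = choose_format(InterviewRequest("customer_support", "acme"), overrides)
```

A useful RFP follow-up is to ask the vendor where this kind of rule lives in their product and who is allowed to change it.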

That is a much better RFP question than simply asking whether the vendor "supports video."

2. Treat the score like a control system, not a magic number

Every AI interviewer demo eventually gets to the same moment.

The vendor shows a score.

It looks clean. It looks objective. It looks like the kind of number people can rally around.

But a score is only useful if your team can understand where it came from and change it when the hiring context changes.

That matters even more in staffing than in a single-employer workflow. One client may care more about certifications. Another may care more about communication. Another may want a tighter knockout screen. Another may want recruiters reviewing every borderline candidate manually.

If the scoring model cannot flex with those differences, the score stops being a decision tool and starts becoming decoration.

That is why transparent scoring matters so much.

You should be able to see the rubric. You should be able to understand what is being measured. You should be able to change the scorecard by client, business unit, or role family. Recruiters should be able to override results when needed. And those changes should be logged clearly.
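To make "transparent" concrete, here is a minimal Python sketch of a score that can be explained and adjusted per client. The criteria, weights, 0 to 5 rating scale, and client name are hypothetical, and a real product may score very differently. The point is only that every part of the number is visible.

```python
# Hypothetical base rubric: criterion -> weight. Weights must sum to 1.0.
BASE_RUBRIC = {"certifications": 0.4, "communication": 0.4, "availability": 0.2}

# Hypothetical per-client scorecard that emphasizes communication.
CLIENT_RUBRICS = {
    "client_b": {"certifications": 0.2, "communication": 0.6, "availability": 0.2},
}


def rubric_for(client):
    """Use the client's own scorecard when one exists, else the base rubric."""
    return CLIENT_RUBRICS.get(client, BASE_RUBRIC)


def score_breakdown(ratings, rubric):
    """Return (per-criterion contributions, total) for ratings on a 0-5 scale."""
    if abs(sum(rubric.values()) - 1.0) > 1e-9:
        raise ValueError("rubric weights must sum to 1.0")
    missing = set(rubric) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    contributions = {name: round(w * ratings[name], 2) for name, w in rubric.items()}
    total = round(sum(w * ratings[name] for name, w in rubric.items()), 2)
    return contributions, total


ratings = {"certifications": 5, "communication": 3, "availability": 4}
_, base_total = score_breakdown(ratings, rubric_for("client_a"))      # 4.0
parts, client_b_total = score_breakdown(ratings, rubric_for("client_b"))  # 3.6
```

The same candidate scores 4.0 under the base rubric and 3.6 for the client that weights communication more heavily, and the breakdown shows exactly why.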

If a vendor cannot explain how the score works, your recruiters will not trust it. They may look at it. They may tolerate it. But they will not really use it.

For a useful external reference point, the NIST AI Risk Management Framework is a good benchmark for what trustworthy AI should look like in practice: accountable, explainable, and manageable rather than opaque.

3. In this category, configurability beats cleverness

A lot of products sound impressive until the staffing team needs to change something.

That is when the gap between a good demo and a good product becomes obvious.

A staffing firm rarely runs one standardized hiring process. Different clients want different things. Different branches want different things. Different roles call for different interview lengths, question sets, knockout logic, scorecards, and handoff rules.

That means the platform has to be deeply configurable.

A good product should let your team generate interview drafts from job descriptions, save templates for repeat use, edit questions quickly, and adjust interview length or format without waiting on the vendor. One part of the business might want a short phone screen for hourly roles. Another might want a structured video interview for higher-skill positions. A client may want one scorecard for office roles and another for field roles.

If those changes are hard to make, the product becomes brittle. And brittle software does not age well in staffing.

Buyers often get distracted by how intelligent the AI seems. The more important question is how much control the team keeps after implementation.

That is what determines whether the platform scales.

If you want a broader view of how interview automation fits into the stack, our staffing software comparison breaks down where ATS platforms, interview automation, and back-office systems each fit.

4. Keep the ATS at the center

This is where a lot of "integrated" products disappoint.

In theory, the AI interviewer connects to the ATS. In practice, the interview happens in a separate system, a report gets pushed back later, and recruiters still have to piece everything together by hand.

That is not a real workflow. That is extra admin with nicer branding.

A strong ATS integration should move the process forward inside the system recruiters already use. It should update stages. It should update statuses. It should write back meaningful notes as actual text. It should sync candidate outcomes in both directions. And it should do that without turning the ATS into a graveyard of disconnected attachments.

This matters because recruiters do not want another dashboard to babysit. They want the information where they already work.

If the AI interviewer cannot write back useful data into the ATS record, adoption usually slips. The product starts to feel like a sidecar instead of part of the operating system.

That is why ATS questions in the RFP should be specific. Which fields sync? In which direction? How often? What happens when records conflict? Can the product write free-text notes back into the ATS? Can it update stages and statuses in real time? Can mappings vary by client or business unit?
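One way to force specific answers is to ask the vendor to fill in a field-mapping table. The Python sketch below shows what such a table and a conflict rule might look like. The field names, directions, and conflict policies are invented for illustration and do not describe any particular ATS or vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldMapping:
    interviewer_field: str
    ats_field: str
    direction: str    # "to_ats", "from_ats", or "both"
    on_conflict: str  # "ats_wins", "interviewer_wins", or "flag_for_review"


# Hypothetical mapping for one client or business unit.
MAPPINGS = [
    FieldMapping("interview_status", "candidate_stage", "both", "ats_wins"),
    FieldMapping("summary_text", "recruiter_notes", "to_ats", "interviewer_wins"),
    FieldMapping("phone_number", "phone", "from_ats", "ats_wins"),
]


def resolve(mapping, interviewer_value, ats_value):
    """Return (value_to_keep, needs_review) when both systems hold a value."""
    if interviewer_value == ats_value:
        return ats_value, False
    if mapping.on_conflict == "ats_wins":
        return ats_value, False
    if mapping.on_conflict == "interviewer_wins":
        return interviewer_value, False
    if mapping.on_conflict == "flag_for_review":
        # Keep the ATS value until a recruiter decides.
        return ats_value, True
    raise ValueError(f"unknown conflict policy: {mapping.on_conflict}")
```

If a vendor cannot produce something equivalent to this table for your ATS, the integration is probably thinner than the slide suggests.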

Those details tell you whether the vendor has real depth or just a slide.

If scheduling is part of your evaluation too, see our guide to the best interview scheduling software for recruiters. It is a useful reminder that booking time is only part of the workflow.

5. Governance is now part of the product evaluation

There was a time when buyers could treat governance like a late-stage legal review.

That time is passing.

As AI becomes more involved in hiring workflows, the questions get harder. How is the system audited? What gets logged? How often is it reviewed? How are score changes tracked? How are notices handled? How does the team manage retention, opt-outs, accommodations, and bias monitoring?

Those are not side questions anymore. They are operating questions.

That is why a good AI interviewer RFP should ask for more than generic statements about compliance. It should ask how the product behaves when scrutiny shows up. Can the platform provide an audit trail of question edits, score changes, recruiter overrides, and workflow changes? Can the team see version history? Can it document who changed what and when? Can the vendor explain how fairness is monitored over time rather than just claiming the system was tested once?
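For a sense of what "who changed what and when" means in data terms, here is a minimal Python sketch of an append-only audit trail. The class names, action labels, and IDs are hypothetical. A production system would also need durable storage and access controls, which this sketch leaves out.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    actor: str
    action: str   # e.g. "question_edit", "score_change", "recruiter_override"
    target: str   # e.g. a template ID or candidate ID
    before: str
    after: str
    at: str       # UTC timestamp in ISO 8601 format


class AuditTrail:
    """Append-only list of events; nothing is edited or deleted."""

    def __init__(self):
        self._events = []

    def record(self, actor, action, target, before, after):
        event = AuditEvent(actor, action, target, before, after,
                           datetime.now(timezone.utc).isoformat())
        self._events.append(event)
        return event

    def history(self, target):
        """Every recorded change to one target, oldest first."""
        return [e for e in self._events if e.target == target]


trail = AuditTrail()
trail.record("recruiter_17", "question_edit", "template-12",
             "Do you have forklift experience?",
             "Describe your forklift experience.")
trail.record("recruiter_17", "recruiter_override", "candidate-88", "2.9", "3.5")
```

In a demo, ask the vendor to pull up the equivalent of `history(...)` for a template that has been edited several times.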

For example, New York City's AEDT rules (Local Law 144) impose bias-audit and notice obligations on covered employers and employment agencies. The city's official overview of the rules is worth reviewing.
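Bias audits commonly report an impact ratio: each group's selection rate divided by the selection rate of the most-selected group. The Python sketch below shows the arithmetic with made-up counts and placeholder group labels. It illustrates the metric only and is not a description of how any vendor or auditor runs a formal audit.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (selected, total); returns group -> rate."""
    return {group: selected / total
            for group, (selected, total) in outcomes.items()
            if total > 0}


def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    if not rates:
        raise ValueError("no groups with candidates")
    top = max(rates.values())
    if top == 0:
        raise ValueError("no candidates were selected in any group")
    return {group: round(rate / top, 2) for group, rate in rates.items()}


# Made-up monthly counts: (candidates advanced, candidates interviewed).
march = {"group_a": (50, 100), "group_b": (30, 100)}
ratios = impact_ratios(march)  # {"group_a": 1.0, "group_b": 0.6}
```

Running the same calculation every month, per client and role family, is one simple way to turn "monitored over time" into something a vendor can actually show you.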

Strong governance matters for a simple reason. Staffing firms do not buy software once and leave it untouched. They adapt it constantly. New clients. New roles. New requirements. More volume. More edge cases. The more dynamic the business, the more important it becomes to have a platform that records and explains what it is doing.

6. Accessibility and accommodations should be built in

Accessibility is another area where vendors often sound stronger in slides than they do in product.

In real life, candidates need different things. Someone may need a phone interview instead of video. Someone may need more time. Someone may need captions or a simpler flow. Someone may need a recruiter to step in because the standard experience is not working for them.

If the product is not built with that in mind, the team ends up improvising accommodations manually. That creates inconsistency, extra work, and unnecessary risk.

A strong platform should make it easy for candidates to request accommodations and easy for recruiters to act on them. Those requests should be visible. The workflow should be clear. And the platform should support practical adjustments without turning the process into a scramble.

This is not just about checking a box. It is about making sure the interview process works for real people in real conditions.

The EEOC and DOJ guidance on disability discrimination and AI tools is worth reading if this is part of your evaluation criteria.

7. Language support should be real, not cosmetic

Multilingual support sounds good in marketing copy. The real question is whether it actually works inside the workflow.

Can the interview run in the candidate's preferred language? Can reminders and instructions do the same? Can disclosures be delivered clearly? Can recruiters still review outcomes cleanly on their side? Can the team support different languages without creating confusion or broken handoffs?

That matters in staffing because language issues show up quietly. The candidate may not complain. They may simply drop off, or they may complete the process with weaker answers because the flow was harder to understand than it needed to be.

When buyers think about language support the right way, they usually stop asking, "How many languages do you support?" and start asking, "Can this platform actually help us assess candidates fairly across the populations we hire?"

That is the better question.

8. Consent and opt-outs should not be afterthoughts

This gets missed all the time in products that lead with automation.

The workflow is built to move fast. The outreach works. The reminders fire. But the controls around consent, communication preferences, and opt-outs are fuzzy.

That is a problem.

If a vendor is using phone or messaging as part of the interview experience, the system should make it easy to capture consent, respect communication preferences, and honor opt-outs cleanly. Recruiters should be able to see that status clearly. The platform should not keep nudging people who have asked not to be contacted.
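In practice, this comes down to a check that runs before every outbound message. The Python sketch below shows the idea. The channel names, the `ContactPreferences` shape, and the `send` callback are assumptions for illustration, and real consent rules depend on the channel and jurisdiction.

```python
from dataclasses import dataclass, field


@dataclass
class ContactPreferences:
    candidate_id: str
    consented_channels: set = field(default_factory=set)  # e.g. {"sms", "phone"}
    opted_out: bool = False


def can_contact(prefs, channel):
    """Allowed only with consent for this channel and no opt-out on file."""
    return not prefs.opted_out and channel in prefs.consented_channels


def send_reminders(candidates, channel, send):
    """Call send() for eligible candidates; return (sent_ids, skipped_ids)."""
    sent, skipped = [], []
    for prefs in candidates:
        if can_contact(prefs, channel):
            send(prefs.candidate_id, channel)
            sent.append(prefs.candidate_id)
        else:
            skipped.append(prefs.candidate_id)
    return sent, skipped


candidates = [
    ContactPreferences("c1", {"sms", "phone"}),
    ContactPreferences("c2", {"phone"}),                 # never consented to SMS
    ContactPreferences("c3", {"sms"}, opted_out=True),   # asked not to be contacted
]
outbox = []
sent, skipped = send_reminders(candidates, "sms", lambda cid, ch: outbox.append((cid, ch)))
```

Only `c1` receives the SMS reminder here. The skipped list is what recruiters should be able to see in the product, along with the reason.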

This is one of those areas that feels minor during evaluation and becomes very real after rollout. Mature products handle it well. Immature products make someone in operations clean it up manually.

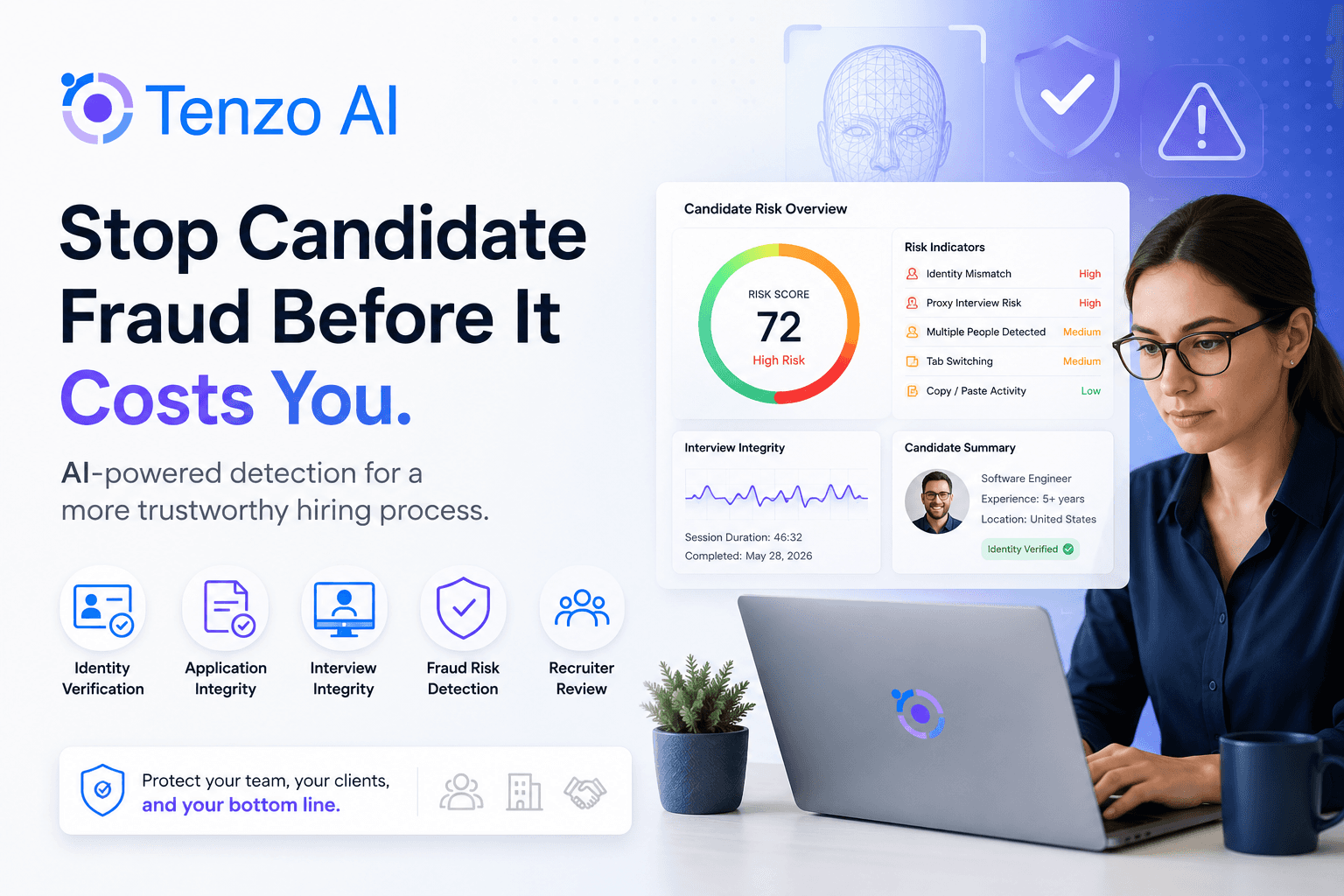

9. Fraud controls matter, but buyers should stay skeptical

Fraud prevention belongs in the RFP. So does skepticism.

Every vendor now knows that identity verification, cheating detection, and impersonation controls are hot topics. That makes this one of the easiest areas for claims to get fuzzy.

The better buying posture is to look for controls that are practical, reviewable, and proportionate to the role.

Ask what is actually being checked. Ask what data is collected. Ask what gets stored. Ask how false positives are handled. Ask what the fallback path is if a legitimate candidate gets flagged. Ask whether the controls can vary by role type instead of being forced into one universal setting.

The point is not to buy the loudest fraud story. It is to buy a system that can reduce risk without creating a different kind of mess.

This is another place where modality matters. Some roles may only need lighter phone-first verification. Others may justify stronger controls layered into a video workflow. The right platform should be able to explain that tradeoff clearly instead of pretending every job needs the same setup.

For a related internal read, our guide to AI recruiting chatbots for staffing firms explains where simple automation helps and where deeper interview automation starts to matter more.

What your AI interviewer RFP should really force vendors to prove

By this point, the pattern is pretty clear.

A strong AI interviewer RFP for staffing is not really a feature checklist. It is a way to pressure-test whether the product can handle the work that actually needs to get done.

Can it support both real phone-call interviewing and video interviewing?

Can it match the interview format to the role instead of forcing one default?

Can it generate interview drafts from job descriptions and still let your team edit everything easily?

Can it adapt by client, branch, business unit, and role family without constant vendor involvement?

Can it show how scoring works instead of hiding behind a number?

Can it log score changes, overrides, question edits, and workflow changes clearly?

Can it write back useful information into the ATS as working data, not just attachments?

Can it support accommodations, language access, notices, and opt-outs inside the workflow?

Can it offer fraud controls that are strong enough to matter and grounded enough to trust?

Final takeaway

The best AI interviewer for a staffing firm usually does not win because it sounds the smartest.

It wins because it fits the operation.

It works for deskless candidates and desktop candidates. It flexes by client and role. It gives recruiters enough control to trust it. It gives operations a workflow they can actually run. And it gives leadership something they can defend when questions come later.

That is what a good RFP is supposed to uncover.

Not who tells the best story.

Who has the best product for the job.

Why Tenzo belongs on the shortlist

If you are evaluating vendors in this category, Tenzo is worth a close look, especially if phone interviewing, configurable workflows, transparent scoring, ATS write-back, multilingual candidate experience, and auditability matter to your team.

The right way to buy is still the same. Make every vendor prove fit against the RFP. But if you want to see what those requirements look like in practice, book a demo.

FAQ

Why should staffing firms ask for both phone and video interviewing?

Because different roles and candidate populations behave differently. Phone often works better for deskless, hourly, and mobile-first populations because it reduces friction. Video often works better for more desktop-centered roles and for workflows where visual review matters more.

What is the biggest mistake buyers make in an AI interviewer RFP?

Treating the product like a standalone interview tool instead of a staffing workflow system. Once the product is live, modality, scoring, integrations, accommodations, audit trails, and communication controls all become operating issues.

What matters most in AI interviewer scoring?

Transparency and control. If the team cannot understand how the score is produced, configure it for different hiring contexts, and override it when needed, the score will not drive real adoption.

What should buyers ask about ATS integration?

Ask exactly what syncs, in which direction, how often, and in what format. The best integrations update stages, statuses, and notes inside the ATS instead of just attaching reports after the fact.

What should buyers ask about fraud controls?

Ask what is being checked, what data is collected, how flags are reviewed, how false positives are handled, and whether controls can vary by role. Strong fraud controls should reduce risk without creating a new layer of cleanup work.