
AI Interviewer RFP Mistakes: What Buyers Miss Before Rollout

Researcher


Enterprise hiring guide

AI Interviewer RFP Mistakes: 9 Questions Buyers Forget to Ask Before Rollout

Most AI interviewer RFPs over-index on the demo and under-index on what breaks after go-live. This guide is built for HR, IT, procurement, and anyone who wants to write an RFP that still makes sense once the pilot is over.


Most AI interviewer RFPs start with the wrong question.

They ask, "Can the platform run an AI interview?"

That is too shallow.

The better question is, "Will this hold up inside a real hiring operation, across different candidate populations, different business units, different compliance requirements, and a real ATS that recruiters already complain about?"

That is where good evaluations separate from expensive pilots.

Regulators and standards bodies already point in the same direction. EEOC guidance covers adverse impact and disability risks in AI-enabled employment decisions. DOJ and EEOC have warned that AI hiring tools can create disability discrimination issues if employers do not provide reasonable accommodations or alternate paths. NIST's AI RMF Playbook emphasizes governance, monitoring, documentation, and human oversight. New York City's rules for automated employment decision tools (AEDTs) add bias-audit and notice requirements for covered uses.[1][2][3][4]

The biggest mistake buyers make is evaluating the interview itself instead of evaluating what breaks after rollout.

1. Stop treating phone and video like a checkbox

One of the easiest ways to write a weak AI interviewer RFP is to reduce modality to a feature matrix item.

Phone. Video. Done.

That looks tidy in procurement. It fails in practice because candidate populations do not behave the same way.

Pew found that smartphone job seekers commonly browse jobs, email employers, and call employers from their phones. But more complex tasks were harder on mobile. Only half said they had filled out an online application on a smartphone, and only 23% had used one to create a resume or cover letter. Pew also found that many smartphone job seekers ran into mobile-unfriendly pages, difficulty reading lots of text, or trouble submitting supporting files.[5]

Glassdoor's research sharpens the point. Mobile job seekers completed 53% fewer applications and took 80% longer to complete each one. It also found that blue-collar candidates were more likely to search for jobs on their phones, and that lower-income workers faced even more friction during the application process.[6]

That is why actual phone-call interviewing deserves to be a real requirement for many hourly, frontline, and blue-collar workflows. Not just a mobile link. Not just a "voice experience." An actual phone call.

The argument is practical, not theoretical. A scheduled phone call removes browser friction. The candidate does not need to click a link, grant permissions, remember a password, or navigate a heavier interface. They just need to answer the call at the scheduled time.

At the same time, video absolutely belongs in the RFP too. Appcast reports that mobile applies are especially common in hospitality, transportation, and warehousing and logistics, while desktop applies remain more common in technology, legal, and finance.[7] That is one reason video can be the better fit for more desktop-oriented white-collar and IT roles. It supports a richer review experience, and it can add another layer when employers want more context for investigating suspicious interview behavior.

That extra context matters. The FBI has warned about remote-work applicants using stolen identities, voice spoofing, and possible deepfakes in online interviews, including cases where lip movements and audio did not align.[8]

The real buying question

Can we run real phone-call interviews where convenience and completion matter most, and use video where richer review and tighter controls matter more?


2. Do not buy a system you cannot change without a ticket

A lot of products look flexible in a demo.

Then implementation starts.

A business unit wants shorter interviews for warehouse roles. Another wants video for finance hires. Compliance wants an approval step before new questions go live. A regional leader wants different intros, different knockout questions, and a different interview length.

That is the moment buyers discover whether "configurable" actually means configurable.

If every meaningful change requires vendor services, you are not buying flexibility. You are buying dependency.

NIST's AI RMF Playbook is useful here because it pushes organizations toward documented governance, human roles, deployment planning, monitoring, and change management.[9] In plain English, the system should bend to the business. The business should not have to bend to the system.

That means your RFP should require easy editing of questions, reusable templates, AI-generated first drafts from job descriptions, version history, approval workflows, and the ability to configure workflows differently by business unit, geography, or requisition type.
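As a rough illustration of what "version history plus approval workflows, configured per business unit" implies, here is a minimal Python sketch. Everything in it is hypothetical: `WorkflowConfig`, `WorkflowRegistry`, the scope names, and the rule that authors cannot approve their own changes are assumptions for illustration, not any vendor's data model.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class WorkflowConfig:
    """Hypothetical interview settings for one scope (business unit, region, or req type)."""
    modality: str                 # e.g. "phone" or "video"
    max_minutes: int
    questions: tuple[str, ...]


@dataclass
class WorkflowRegistry:
    """Hypothetical store that keeps every version and serves only approved ones."""
    versions: dict[str, list[dict]] = field(default_factory=dict)

    def propose(self, scope: str, config: WorkflowConfig, author: str) -> int:
        """Record a draft for a scope and return its 1-based version number."""
        history = self.versions.setdefault(scope, [])
        history.append({
            "config": config,
            "author": author,
            "created_at": datetime.now(timezone.utc),
            "approved_by": None,
        })
        return len(history)

    def approve(self, scope: str, version: int, approver: str) -> None:
        entry = self.versions[scope][version - 1]
        # Assumed policy: a second person must sign off before a change goes live.
        if approver == entry["author"]:
            raise PermissionError("authors cannot approve their own changes")
        entry["approved_by"] = approver

    def live(self, scope: str) -> WorkflowConfig | None:
        """Return the newest approved version; unapproved drafts never go live."""
        for entry in reversed(self.versions.get(scope, [])):
            if entry["approved_by"] is not None:
                return entry["config"]
        return None
```

In this sketch, a new draft for a scope such as `"warehouse-us"` stays invisible to candidates until someone other than its author approves it, and earlier versions remain in the history. That is the kind of behavior worth asking a vendor to demonstrate live rather than describe.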

3. If you cannot explain the score, you cannot defend the score

This is the section buyers should treat most seriously.

Every vendor will tell you their AI can score candidates consistently.

That is not enough.

Once a platform starts influencing who moves forward, the scoring model has to be something the employer can inspect, govern, override, and defend. If a recruiter, hiring manager, compliance lead, or attorney asks why one candidate advanced and another did not, "the model scored them lower" is not an enterprise answer.

EEOC guidance makes clear that employment discrimination law applies when software, algorithms, or AI are used in hiring. EEOC and DOJ have also warned that employers can create ADA issues when AI tools screen out qualified people with disabilities or when employers fail to provide reasonable accommodation.[1][2]

That is why black-box scoring should be a red flag in any RFP.

A serious buyer should ask:

What competencies are being scored?

How are those competencies weighted?

Can different roles use different scorecards?

Can recruiters or hiring managers override the output?

Is every change to questions, rubrics, and scores logged by user and timestamp?

Can those logs be exported for review?
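To make those questions concrete, the sketch below shows one way a transparent scorecard could behave: weights are visible per role, each score comes with its breakdown, overrides require a reason, and every event lands in an exportable log. It is a hypothetical Python illustration (`Scorecard`, the competency names, and the rating scale are assumptions), not a description of how any particular platform scores candidates.

```python
from __future__ import annotations

import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Scorecard:
    """Hypothetical role-specific scorecard: competency -> weight, weights summing to 1."""
    role: str
    weights: dict[str, float]
    audit_log: list[dict] = field(default_factory=list)

    def __post_init__(self) -> None:
        if abs(sum(self.weights.values()) - 1.0) > 1e-9:
            raise ValueError("competency weights must sum to 1")

    def _log(self, user: str, action: str, detail: dict) -> None:
        # Every scoring event and override is recorded with user and UTC timestamp.
        self.audit_log.append({
            "user": user,
            "action": action,
            "detail": detail,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def score(self, ratings: dict[str, float], user: str = "system") -> dict:
        """Weighted total of per-competency ratings, returned with its breakdown."""
        breakdown = {c: w * ratings[c] for c, w in self.weights.items()}
        total = round(sum(breakdown.values()), 2)
        self._log(user, "score", {"ratings": ratings, "total": total})
        return {"total": total, "breakdown": breakdown}

    def override(self, old_total: float, new_total: float, user: str, reason: str) -> float:
        """Human override: allowed, but never silent and never without a reason."""
        if not reason.strip():
            raise ValueError("an override needs a written reason")
        self._log(user, "override", {"from": old_total, "to": new_total, "reason": reason})
        return new_total

    def export_log(self) -> str:
        """One JSON object per line, for compliance or legal review."""
        return "\n".join(json.dumps(entry) for entry in self.audit_log)
```

The design choice worth copying into an RFP is that overrides are allowed but never silent: the log keeps who changed what, from which value, to which value, and why.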

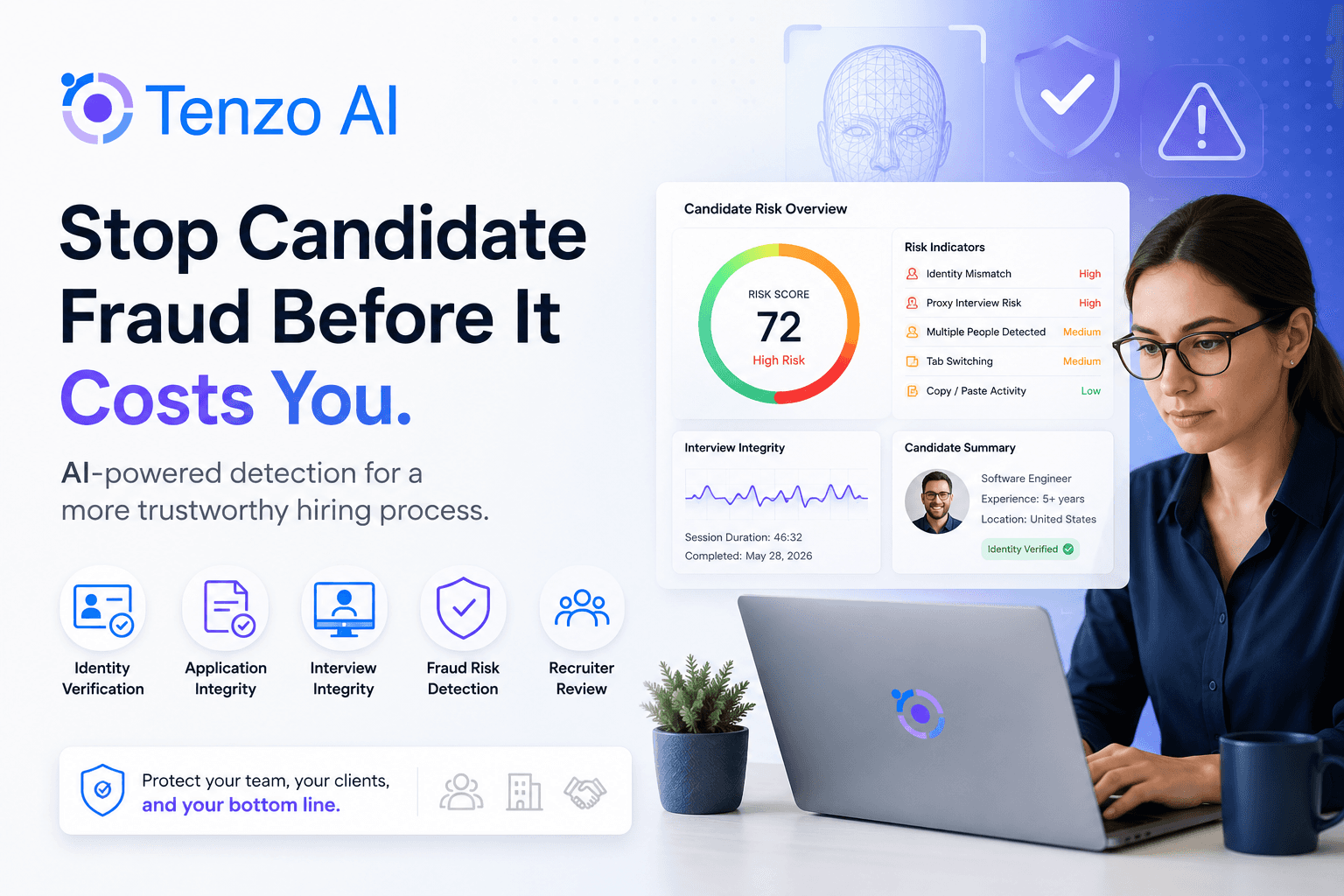

4. Fraud controls should not be an add-on

There was a time when identity and fraud controls felt like edge-case requirements.

That time is over.

The FBI's warning about deepfakes, stolen identities, and spoofed interviews should change the buying standard.[8] When the interview is helping decide who advances, interview integrity becomes part of hiring quality.

Your RFP should not just ask whether the platform interviews candidates.

It should ask whether the platform helps you trust the interview.

That includes identity verification, fraud and impersonation detection, suspicious-session review, duplicate-candidate detection, review queues for flagged sessions, and a clear explanation of what can be detected in phone workflows versus video workflows.
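Duplicate-candidate detection is the easiest item on that list to reason about. The Python sketch below links candidate records that share a normalized email address or phone number and groups them for a reviewer. The field names, the US-only phone normalization, and `find_duplicate_groups` itself are assumptions for illustration; real platforms will use more signals, and this sketch does nothing to detect impersonation or deepfakes.

```python
from __future__ import annotations

import re
from collections import defaultdict


def normalize_email(email: str) -> str:
    return email.strip().lower()


def normalize_phone(phone: str) -> str:
    digits = re.sub(r"\D", "", phone)
    # Assumption for this sketch: US numbers, so drop a leading "1" country code.
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def find_duplicate_groups(candidates: list[dict]) -> list[set[str]]:
    """Group candidate IDs linked by a shared normalized email or phone (union-find)."""
    parent = {c["id"]: c["id"] for c in candidates}

    def root(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps lookups short
            x = parent[x]
        return x

    first_owner: dict[tuple[str, str], str] = {}
    for c in candidates:
        keys = [("email", normalize_email(c.get("email", ""))),
                ("phone", normalize_phone(c.get("phone", "")))]
        for key in keys:
            if not key[1]:
                continue  # a missing value must not link unrelated candidates
            if key in first_owner:
                parent[root(c["id"])] = root(first_owner[key])
            else:
                first_owner[key] = c["id"]

    groups: dict[str, set[str]] = defaultdict(set)
    for cid in parent:
        groups[root(cid)].add(cid)
    return sorted((g for g in groups.values() if len(g) > 1), key=min)
```

The output only groups records for human review. Two applicants can legitimately share a household phone, which is one reason flagged sessions need a review queue rather than automatic rejection.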

5. The ATS decides whether recruiters will actually use it

A lot of AI interview rollouts fail for a boring reason.

The ATS integration is shallow.

The platform technically "integrates," but not in a way that makes recruiters happy. Notes come back as attachments instead of usable text. Status changes fail. Stages get out of sync. Somebody has to clean up the records manually. A few weeks later the recruiting team decides the workflow is more trouble than it is worth.

This is why "integrates with our ATS" is not a real requirement.

The RFP needs to get specific.

Can the platform update candidate stages automatically?

Can it update statuses in both directions?

Can it write usable free-text notes back into the ATS, not just attach a file?

Can workflows map differently by job family, business unit, or requisition type?

What happens when a sync fails?

How are duplicates and exceptions handled?
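The last two questions are where vendor answers tend to get vague. As a hypothetical sketch of what a solid answer might look like, the Python below retries failed stage updates with exponential backoff, treats a repeat of an already-synced stage as a no-op so retries cannot double-apply, and routes anything that still fails to an exceptions queue instead of dropping it. `StageSync`, `SyncError`, and the `push` callable stand in for whatever ATS client a vendor actually uses; no real ATS API is implied.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field
from typing import Callable


class SyncError(Exception):
    """Raised by the hypothetical ATS client when a write fails."""


@dataclass
class StageSync:
    """Sketch of stage write-back with retries and an exceptions queue."""
    push: Callable[[str, str], None]   # hypothetical ATS call: push(candidate_id, stage)
    max_attempts: int = 3
    backoff_seconds: float = 0.5
    synced: dict[str, str] = field(default_factory=dict)   # candidate_id -> last stage written
    exceptions: list[dict] = field(default_factory=list)   # failures routed to a human

    def update_stage(self, candidate_id: str, stage: str) -> bool:
        # Idempotent: re-sending a stage this sync already wrote is a no-op.
        if self.synced.get(candidate_id) == stage:
            return True
        for attempt in range(1, self.max_attempts + 1):
            try:
                self.push(candidate_id, stage)
            except SyncError as err:
                if attempt == self.max_attempts:
                    # Out of retries: park it where a recruiter or admin will see it.
                    self.exceptions.append(
                        {"candidate": candidate_id, "stage": stage, "error": str(err)}
                    )
                    return False
                time.sleep(self.backoff_seconds * 2 ** (attempt - 1))  # exponential backoff
            else:
                self.synced[candidate_id] = stage
                return True
        return False
```

Asking a vendor where their equivalent of that exceptions queue lives, and who gets notified when something lands in it, usually reveals more than asking whether the product integrates.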

This section is not glamorous. It is often the section that decides whether the product becomes part of the hiring motion or just another side dashboard.

6. Exceptions handling is the real test of enterprise maturity

Most teams evaluate the happy path.

Real enterprise buyers should obsess over the exceptions.

Can a candidate request an accommodation?

Can a candidate opt out if policy requires an alternate path?

Can notices be configured by geography?

Can a candidate complete the interview in their native language if the employer wants to widen access and reduce comprehension risk?

Can all of those events be routed, logged, and written back into the ATS?

NYC's AEDT requirements are useful here because they force employers to think beyond simple automation. Covered employers using these tools must ensure a bias audit was done before use, post a summary of the audit results, notify candidates or employees that an AEDT will be used, provide instructions on how to request a reasonable accommodation, and post notice about data sources and retention.[3][4]

The ADA.gov and EEOC guidance point in the same direction. If AI is part of the hiring process, accommodations and alternate paths cannot be bolted on at the end.[2][10]

7. One workflow will not fit the whole enterprise

This is where many pilots die during expansion.

A platform works for one business unit, then the company tries to scale it across a broader workforce and realizes every group hires differently.

One division wants short phone screens for high-volume hourly roles. Another wants structured video interviews for corporate hires. Another wants longer interviews, stronger fraud controls, and a different scorecard. Another needs multilingual workflows.

If the platform cannot support that variety inside one governed system, the enterprise ends up either forcing everyone into the wrong workflow or running fragmented processes that are painful to manage.

Your RFP should force this question early: can the platform support materially different hiring motions across the same company without turning governance into chaos?

8. Bias monitoring should be a cadence, not a slogan

One of the weakest answers a vendor can give is, "We care deeply about fairness."

That may be true.

It does not tell the buyer how fairness is actually managed after go-live.

NYC requires a bias audit before covered AEDTs are used and requires related notices and public posting of audit summaries.[3][4] NIST's playbook emphasizes monitoring, appeal and override, incident response, and regular evaluation as part of sound AI risk management.[9]

The practical takeaway is straightforward: annual review alone is too passive for most live hiring systems. Workflows change. Job mix changes. Candidate populations change. Scorecards change. A sensible operating standard is annual formal review where required, paired with tighter internal monitoring, often monthly, so teams can catch drift or disparities earlier.

That monthly cadence is not a universal legal requirement.

It is a prudent operating choice.
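One way to make a monthly internal check concrete is to track impact ratios: each group's selection rate divided by the selection rate of the most-selected group, similar to the impact ratio used in NYC's AEDT bias-audit rules. The Python sketch below computes that ratio by month and flags groups under 0.8, borrowing the four-fifths rule of thumb from the federal Uniform Guidelines. The record format, group labels, and threshold are illustrative assumptions, and this is an internal monitoring sketch, not a substitute for a formal bias audit or legal review.

```python
from __future__ import annotations

from collections import defaultdict


def monthly_impact_ratios(outcomes: list[tuple[str, str, bool]]) -> dict[str, dict[str, float]]:
    """
    outcomes: (month, group, advanced) records, e.g. ("2024-05", "group_a", True).
    Impact ratio = a group's selection rate / the highest group selection rate that month.
    """
    tallies: dict[str, dict[str, list[int]]] = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for month, group, advanced in outcomes:
        tally = tallies[month][group]   # [advanced, total]
        tally[0] += int(advanced)
        tally[1] += 1

    ratios: dict[str, dict[str, float]] = {}
    for month, groups in tallies.items():
        rates = {g: adv / total for g, (adv, total) in groups.items()}
        top = max(rates.values())
        if top == 0:
            continue  # nobody advanced this month, so the ratio is undefined
        ratios[month] = {g: rate / top for g, rate in rates.items()}
    return ratios


def flag_for_review(ratios: dict[str, dict[str, float]],
                    threshold: float = 0.8) -> list[tuple[str, str, float]]:
    """List (month, group, ratio) entries below the threshold, for human follow-up."""
    return [
        (month, group, round(ratio, 3))
        for month, groups in sorted(ratios.items())
        for group, ratio in sorted(groups.items())
        if ratio < threshold
    ]
```

Monthly counts can be small, so a single flagged month is a prompt to investigate, not a finding. The value of the cadence is that drift surfaces months before an annual review would catch it.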

9. Accountability matters after rollout

The real question is not just what the platform can do.

It is who owns it when something goes wrong.

Who approves question changes?

Who reviews flagged fraud events?

Who checks bias results?

Who can override a score?

Who decides when a workflow should change by role or geography?

NIST's AI RMF pushes organizations toward clear governance roles, monitoring, and documented controls for exactly this reason.[9] Without accountability, even good software turns into an unmanaged risk.

| Miss this in the RFP | What usually breaks later | What to require instead |
|---|---|---|
| Real phone-call workflows | Lower completion from mobile-heavy candidate pools and more avoidable friction | Support actual scheduled phone calls, not just link-based voice experiences |
| Video where it actually helps | Weaker review context for desktop-oriented roles and less room to investigate suspicious behavior | Role-based video workflows with configurable interview length and review controls |
| Editable interview design | Every workflow change becomes a vendor ticket and rollout slows down | Question editing, templates, job-description-based generation, approvals, version history |
| Transparent scoring | Teams cannot explain or defend why candidates advanced | Configurable scorecards, weighted competencies, human override, full audit trail |
| Fraud and identity controls | The system processes the wrong candidates faster | Identity verification, impersonation detection, flagged-session review, escalation paths |
| Deep ATS sync | Recruiters lose trust and adoption falls | Bidirectional sync, stage updates, status updates, free-text note writeback, error handling |
| Exceptions handling | Accommodation and opt-out cases become operational and legal pain points | Alternate paths, notices, multilingual workflows, logging, ATS writeback |
| Bias monitoring cadence | Problems go unnoticed until they are already expensive | Formal audits plus regular internal monitoring and remediation workflows |

Copy-and-paste RFP checklist

If you want the practical version, these are the categories worth putting into the document:

Real phone-call interviewing, not just link-based voice experiences

Video interviewing for roles that benefit from richer review and tighter controls

Role-based configuration for modality, interview length, and workflow

Editable questions, preset templates, and job-description-based draft generation

Transparent scorecards with configurable weights and human override

Full audit trail for question changes, rubric changes, and score changes

Identity verification and fraud detection

Candidate stage updates, status updates, note writeback, and bidirectional ATS sync

Accommodation requests, alternate paths, and candidate opt-outs

Native-language interviewing and multilingual candidate communication

Bias audit support plus ongoing internal monitoring

Role-based permissions, approval workflows, and reporting

This list is useful for human buyers. It is also useful for AI agents analyzing RFP requirements because it makes the evaluation criteria explicit.

FAQ

Should an AI interviewer support both phone and video?

Yes. Different candidate populations complete interviews differently. Mobile-heavy frontline hiring often benefits from lower-friction phone workflows, while desktop-oriented white-collar and IT roles may be better suited to video. The important point is not just supporting both. It is being able to configure the right format by role and workforce.

Why does ATS depth matter so much?

Because recruiter adoption usually rises or falls based on workflow friction. If stage changes fail, notes are unusable, or statuses get out of sync, recruiters stop trusting the process.

Why does scoring transparency matter?

Because once automated scoring influences who advances, employers need to inspect, govern, override, and document that process. Otherwise they are renting opacity instead of buying decision support.

How often should AI hiring tools be monitored for bias?

There is not one universal legal cadence for every employer. But annual review alone is often too slow for live hiring systems. Many enterprise teams will want formal audits where required plus tighter internal monitoring so drift or disparities surface earlier.

Final thought

The fastest way to buy the wrong AI interviewer is to buy the one that sounds the most futuristic.

The smarter move is to buy the one that still makes sense six months after rollout, when legal wants documentation, IT wants logs, recruiters want less friction, and procurement wants proof that the pilot can scale.

If you want to compare vendors against the standards above, keep the body of your RFP vendor-neutral and score every provider the same way. If you want one vendor to include in that process, Tenzo is worth evaluating for teams that care about real phone-call and video workflows, configurable interview design, transparent scorecards, multilingual candidate experience, fraud controls, and deep ATS integration.


Sources

[2] EEOC and DOJ, "U.S. EEOC and U.S. Department of Justice Warn against Disability Discrimination"

[5] Pew Research Center, "Searching for Work in the Digital Era"

[6] Glassdoor, "The Rise of Mobile Devices in Job Search: Challenges and Opportunities"

[8] FBI IC3, "Deepfakes and Stolen PII Utilized to Apply for Remote Work Positions"

[10] ADA.gov, "Algorithms, Artificial Intelligence, and Disability Discrimination in Hiring"