
AI Interviewer RFP Checklist for Large Retailers: 10 Requirements That Actually Matter

Researcher


5 min read


Most AI Interviewer RFPs Miss the Real Risks. Large Retailers Shouldn't.

Most AI interviewer RFPs compare demos. Smart retail buyers compare operating models.

If you are writing an AI interviewer RFP for a large retailer, do not start by asking which vendor has the slickest demo.

Start by asking which platform will still work when hiring gets messy.

Because retail hiring gets messy fast.

It spikes during peak seasons. It spans stores, distribution centers, contact centers, field leadership, and corporate teams. It includes candidates who can take a scheduled phone call between shifts and candidates who are perfectly comfortable joining a video interview from a laptop. The U.S. Bureau of Labor Statistics said retail trade industries that hire seasonal workers added 494,000 jobs from October to December 2023, and the National Retail Federation expected retailers to hire 265,000 to 365,000 seasonal workers in 2025. This is not a niche edge case. Volume swings are part of the operating model. (Source: BLS)

The core RFP question: Can this system handle different kinds of hiring, at scale, with enough control, transparency, and integration depth that HR trusts it, IT can govern it, and procurement does not regret signing the contract?

New to the category? Start with these two primers first:

AI Recruiting Assistants in 2026: The Practical Guide to Smarter, Faster Hiring

Pros and Cons of AI in Recruitment: A Practical Guide for 2026

Quick navigation

1. Require both real phone-call interviews and video interviews

2. Require editable questions and role-specific templates

3. Make transparent scoring and audit trails non-negotiable

4. Treat fraud detection and identity verification as core workflow requirements

5. Demand true ATS depth, not "we attach a PDF"

6. Build accommodations, accessibility, and language access into the product

7. Ask how bias monitoring and change control actually work

8. The real buying question is not "Which vendor has AI?"

10 copy-and-paste RFP questions

1. Require both real phone-call interviews and video interviews

This is the most important requirement in the whole RFP, because it shapes candidate completion, hiring-manager adoption, and enterprise fit.

For frontline retail, warehouse, and other hourly roles, real phone calls often make more sense than link-based interview flows. A scheduled phone call removes a lot of friction. The candidate does not have to remember to return to a link later, manage browser permissions, or rely on a strong enough connection for a stable video session.

That matters because access conditions are not uniform. Pew reported that 28% of Americans in households earning under $30,000 and 19% in households earning $30,000 to $69,999 were smartphone-dependent in 2023, meaning they relied on a smartphone rather than home broadband. And in EEOC hearing testimony on AI hiring systems, industrial-organizational psychologist Nancy Tippins noted that video-based interviews may require stable, high-speed internet and appropriate equipment, and that employers should provide alternatives when applicants lack the needed setup. (Sources: Pew Research; EEOC hearing testimony)

That does not mean video is wrong. It means video solves a different problem.

For corporate, managerial, and IT roles, video can be the better format because the candidate is more likely to have the environment and equipment to complete it well, and the employer may want richer response capture, stronger visual identity checks, or more visibility into possible unauthorized assistance. That matters more now that employers are paying closer attention to interview fraud and impersonation in remote hiring.

Why this belongs in the RFP: one channel will not fit every role across a large retailer.

What goes wrong if you skip it: a tool that works for corporate hiring can underperform in frontline hiring, and a tool designed only for low-friction screening can be too lightweight for higher-risk roles.

Put this in the RFP:

Vendor must support actual phone-call interview workflows, not only link-based mobile or browser experiences.

Vendor must support video interview workflows for roles where richer response capture or stronger verification is needed.

Interview format, interview length, question sets, and verification steps must be configurable by role, business unit, geography, or brand.
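Here is a minimal Python sketch of what "configurable by role" could look like in practice. It holds interview settings per role family and lets a brand or business-unit override fall back to an enterprise default. The role families, template IDs, and verification step names are hypothetical, and no vendor's actual configuration model is implied.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Channel(Enum):
    PHONE = "phone"  # scheduled call to the candidate's phone number
    VIDEO = "video"  # browser- or app-based video session


@dataclass(frozen=True)
class InterviewConfig:
    channel: Channel
    max_minutes: int
    question_set: str                    # ID of an approved question template
    verification_steps: tuple[str, ...]  # identity checks to run for this role


# Illustrative enterprise defaults keyed by role family (all values hypothetical).
DEFAULTS: dict[str, InterviewConfig] = {
    "store_associate": InterviewConfig(Channel.PHONE, 10, "store_associate_v3", ()),
    "warehouse_lead": InterviewConfig(Channel.PHONE, 15, "warehouse_lead_v2", ("id_check",)),
    "software_engineer": InterviewConfig(
        Channel.VIDEO, 30, "software_engineer_v5", ("id_check", "live_face_match")
    ),
}


def config_for(
    role_family: str, overrides: dict[str, InterviewConfig] | None = None
) -> InterviewConfig:
    """Prefer a business-unit or brand override, else fall back to the enterprise default."""
    if overrides and role_family in overrides:
        return overrides[role_family]
    if role_family not in DEFAULTS:
        raise ValueError(f"no interview config for role family {role_family!r}")
    return DEFAULTS[role_family]
```

The design point is that channel, length, questions, and verification all live on one per-role record. A phone-first frontline flow and a video-based corporate flow are then two entries in the same governed system rather than two separate tools.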

Related reading: Why Traditional Phone Screens Are Dying

2. Require editable questions, role-specific templates, and JD-based generation with human control

A lot of AI interviewer platforms sound smart in a demo because they can generate interview questions from a job description.

That is useful. It is not enough.

The real question is whether the hiring team can control what happens next.

Can they edit the questions themselves? Can they keep different templates for store associates, assistant managers, pharmacists, warehouse leads, and software engineers? Can they control interview length by role? Can they decide which questions are knockout questions and which ones are weighted more heavily?

They should be able to, because employment screening is not just a content problem. It is a job-relatedness problem. EEOC guidance says employers may violate federal law if they use tests or selection procedures that have a disparate impact and are not job-related and consistent with business necessity. That is why the right standard here is simple: auto-generated questions are only valuable if humans can review, edit, approve, and govern them. (Source: EEOC guidance)

Why this belongs in the RFP: good question governance improves consistency, defensibility, and operating speed.

What goes wrong if you skip it: every role change becomes a vendor ticket, every update gets slower, and "AI-generated" quietly turns into "vendor-controlled."

Put this in the RFP:

Vendor must support direct customer editing of interview questions, prompts, rubrics, and templates without requiring professional services.

Vendor must support question generation from job descriptions, with mandatory human review before deployment.

Vendor must support separate templates and interview lengths by role family, business unit, and hiring motion.
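The "mandatory human review" requirement can be stated as a simple rule. Generated questions start as drafts, and nothing deploys until a named reviewer has approved each one, optionally after editing it. Below is a minimal Python sketch of that rule; the class names, fields, and statuses are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "draft"        # generated from a JD or written by hand, not yet reviewed
    APPROVED = "approved"  # a named human reviewer signed off
    RETIRED = "retired"    # kept for the record, never asked


@dataclass
class Question:
    text: str
    source: str                 # e.g. "jd_generated" or "human_authored"
    knockout: bool = False
    weight: float = 1.0
    status: Status = Status.DRAFT
    approved_by: str | None = None


@dataclass
class Template:
    role_family: str
    max_minutes: int
    questions: list[Question] = field(default_factory=list)

    def approve(self, index: int, reviewer: str, new_text: str | None = None) -> None:
        """Let a human edit the wording, then record who approved it."""
        q = self.questions[index]
        if new_text is not None:
            q.text = new_text
        q.status = Status.APPROVED
        q.approved_by = reviewer

    def deployable_questions(self) -> list[Question]:
        """Block deployment while any question is still an unreviewed draft."""
        drafts = [q for q in self.questions if q.status is Status.DRAFT]
        if drafts:
            raise RuntimeError(f"{len(drafts)} question(s) still awaiting human review")
        return [q for q in self.questions if q.status is Status.APPROVED]
```

Because the customer's reviewer performs the approval, the AI-generated questions stay under the customer's control rather than the vendor's.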

Related reading: 45 Candidate Screening Questions That Predict Fit

3. Make transparent scoring and audit trails non-negotiable

If a vendor cannot explain how a candidate score is created, you do not have an AI advantage. You have a governance problem.

Retailers should not accept black-box scoring for a simple reason: the employer is still accountable for how selection decisions are made. EEOC guidance on tests and selection procedures is clear that employers must pay attention to adverse impact and to whether selection procedures are job-related and consistent with business necessity. (Source: EEOC guidance)

That is why scoring transparency belongs in the RFP.

A buyer should be able to ask:

What is the rubric?

What is weighted heavily?

What is a knockout condition?

What changed last month?

Who approved that change?

Can a business unit use a different rubric without breaking governance?

That matters because "qualified" is not one thing across a retailer.

A store operations team may care most about reliability, schedule fit, and customer interaction. A warehouse team may care more about safety, attendance, and shift availability. A district manager search may care about coaching, P&L judgment, and leadership presence. A corporate role may require a more detailed competency review.
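The rubric questions above are easier to evaluate when you can see the arithmetic. This minimal Python sketch makes three assumptions:

- each criterion gets a rating from 1 to 5;
- the overall score is a weighted average compared against a pass threshold;
- knockout criteria fail a candidate regardless of the total.

The criteria, weights, and thresholds are illustrative, not a recommended rubric. The sketch shows two business units scoring against different scorecards while returning the same kind of explanation.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float           # relative weight in the overall score
    knockout: bool = False  # a low rating here rejects regardless of total


@dataclass(frozen=True)
class Scorecard:
    name: str
    criteria: tuple[Criterion, ...]
    pass_threshold: float    # weighted average (1-5 scale) needed to advance
    knockout_floor: int = 2  # knockout criteria rated below this fail the candidate


def evaluate(card: Scorecard, ratings: dict[str, int]) -> dict:
    """Return the score plus the per-criterion contributions that produced it."""
    total_weight = sum(c.weight for c in card.criteria)
    breakdown = {c.name: round(ratings[c.name] * c.weight / total_weight, 2) for c in card.criteria}
    knockouts = [c.name for c in card.criteria if c.knockout and ratings[c.name] < card.knockout_floor]
    score = round(sum(ratings[c.name] * c.weight for c in card.criteria) / total_weight, 2)
    return {
        "scorecard": card.name,
        "score": score,
        "breakdown": breakdown,
        "knockouts": knockouts,
        "advance": not knockouts and score >= card.pass_threshold,
    }


# Hypothetical scorecards for two business units.
STORE_OPS = Scorecard(
    "store_ops_v2",
    (Criterion("reliability", 3), Criterion("schedule_fit", 2, knockout=True),
     Criterion("customer_interaction", 3)),
    pass_threshold=3.5,
)
WAREHOUSE = Scorecard(
    "warehouse_v1",
    (Criterion("safety", 4, knockout=True), Criterion("attendance", 3),
     Criterion("shift_availability", 3, knockout=True)),
    pass_threshold=3.0,
)
```

Consider a store candidate rated 5, 1, and 5. That candidate has a high weighted score (4.0) but still does not advance, because `schedule_fit` is a knockout. A transparent system should be able to show exactly that reason.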

Why this belongs in the RFP: scoring transparency helps HR explain decisions, gives IT something governable, and gives procurement a cleaner risk story.

What goes wrong if you skip it: the organization cannot explain outcomes, cannot manage change cleanly, and cannot tell the difference between "AI insight" and "opaque vendor logic."

Put this in the RFP:

Vendor must expose the underlying scorecard structure, weighting logic, knockout criteria, and thresholds used in evaluation.

Vendor must provide a complete audit trail for changes to questions, scoring rules, thresholds, and scorecards.

Vendor must support different scorecards by role or business unit within a governed enterprise model.
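An audit trail that answers "What changed last month?" and "Who approved that change?" can be as simple as an append-only log. The sketch below is a hypothetical Python illustration, not any vendor's design. It chains each entry to the previous entry's SHA-256 digest, so editing an old entry after the fact is detectable.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


def _digest(at: datetime, actor: str, approver: str, target: str,
            setting: str, before: object, after: object, prev: str) -> str:
    """Hash an entry together with the previous entry's digest."""
    payload = json.dumps(
        [at.isoformat(), actor, approver, target, setting, before, after, prev],
        default=str,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ChangeEvent:
    at: datetime
    actor: str     # who made the change
    approver: str  # who signed off on it
    target: str    # e.g. "scorecard:store_ops_v2" (hypothetical naming)
    setting: str   # e.g. "pass_threshold"
    before: object
    after: object
    prev_digest: str
    digest: str


class AuditLog:
    """Append-only change log; each entry chains to the previous entry's digest."""

    def __init__(self) -> None:
        self._events: list[ChangeEvent] = []

    def record(self, actor: str, approver: str, target: str, setting: str,
               before: object, after: object, at: datetime | None = None) -> ChangeEvent:
        at = at or datetime.now(timezone.utc)
        prev = self._events[-1].digest if self._events else "genesis"
        digest = _digest(at, actor, approver, target, setting, before, after, prev)
        event = ChangeEvent(at, actor, approver, target, setting, before, after, prev, digest)
        self._events.append(event)
        return event

    def changes_between(self, start: datetime, end: datetime) -> list[ChangeEvent]:
        """Return entries with start <= at < end, e.g. last month's changes."""
        return [e for e in self._events if start <= e.at < end]

    def verify(self) -> bool:
        """Return False if any stored entry was altered or reordered after the fact."""
        prev = "genesis"
        for e in self._events:
            expected = _digest(e.at, e.actor, e.approver, e.target,
                               e.setting, e.before, e.after, prev)
            if e.prev_digest != prev or e.digest != expected:
                return False
            prev = e.digest
        return True
```

Chaining has a limit: someone could still rewrite the entire chain. It only detects edits if the latest digest is also kept somewhere the log's owner cannot quietly change. That makes it a reasonable follow-up question for vendors.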

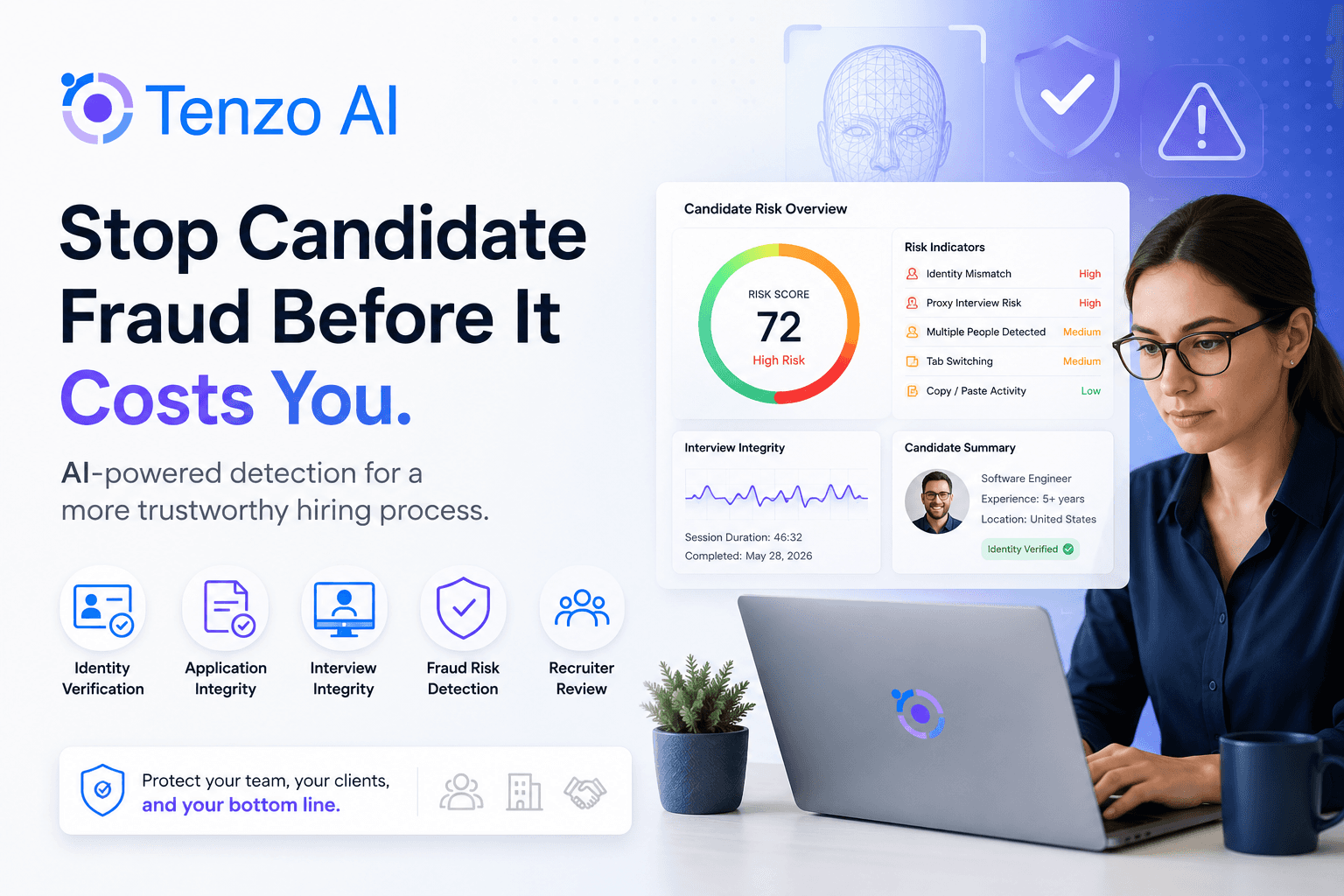

4. Treat fraud detection and identity verification as core workflow requirements

This is no longer a niche security concern.

It is now a hiring concern.

For large employers, especially those hiring remotely into corporate and technical roles, fraud and impersonation risk change what "good screening" means. A platform that cannot support layered identity checks, configurable fraud controls, and human escalation paths is asking the employer to absorb risk outside the workflow.

Why this belongs in the RFP: undetected fraud leads to bad hiring decisions, creates audit risk, and weakens trust in the process.

What goes wrong if you skip it: the employer is left stitching together identity checks, exception handling, and evidence trails after rollout.

Buyers should look for:

identity verification options

duplicate-applicant detection

suspicious-pattern alerts

configurable anti-impersonation steps

documented escalation workflows

logs for every fraud-related event or override

Put this in the RFP:

Vendor must support configurable identity verification and anti-impersonation controls by role risk level.

Vendor must log fraud-related events, alerts, exceptions, and human overrides.

Vendor must provide duplicate-candidate handling and review workflows.
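Two of those requirements, verification steps by role risk level and duplicate-candidate review, can be sketched in a few lines of Python. The risk tiers, step names, and normalization rules are assumptions for illustration; for example, the sketch treats email plus-tags as aliases and keeps the last 10 phone digits. A real platform would use its own signals.

```python
from __future__ import annotations

import re
from collections import defaultdict

# Hypothetical risk tiers and step names; real options depend on the vendor.
STEPS_BY_RISK: dict[str, list[str]] = {
    "low": ["phone_number_match"],
    "medium": ["phone_number_match", "government_id_check"],
    "high": ["phone_number_match", "government_id_check", "live_face_match", "human_review"],
}


def required_steps(risk_level: str) -> list[str]:
    return list(STEPS_BY_RISK[risk_level])


def normalize_email(email: str) -> str:
    local, _, domain = email.strip().lower().partition("@")
    return f"{local.split('+', 1)[0]}@{domain}"  # assumes plus-tags are aliases


def normalize_phone(phone: str) -> str:
    return re.sub(r"\D", "", phone)[-10:]  # assumes 10-digit national numbers


def find_duplicates(applications: list[tuple[str, str, str]]) -> list[tuple[str, ...]]:
    """Group application IDs that share an email or phone; groups go to human review."""
    by_key: dict[tuple[str, str], set[str]] = defaultdict(set)
    for app_id, email, phone in applications:
        if email.strip():
            by_key[("email", normalize_email(email))].add(app_id)
        if normalize_phone(phone):
            by_key[("phone", normalize_phone(phone))].add(app_id)
    return sorted({tuple(sorted(ids)) for ids in by_key.values() if len(ids) > 1})
```

Matches go to review rather than being auto-rejected. Shared household phones and family email accounts produce legitimate collisions, which is why the escalation path matters as much as the detection.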

Related reading: 15 Red Flags and How to Verify Employment History Fast

5. Demand true ATS depth, not "we attach a PDF"

This is where many AI interviewer products quietly fall apart.

They say they integrate with the ATS. What they often mean is that they generate a report and push it somewhere.

That is not enough for a large retailer.

Recruiters live in the ATS. Hiring managers rely on the ATS. Reporting depends on the ATS staying current. If the AI interviewer sits beside the system of record instead of inside the workflow, the organization ends up copying notes manually, reconciling statuses by hand, and losing trust in downstream reporting.

Modern ATS expectations are already much higher than that. Greenhouse's Candidate Ingestion API lets partners send candidates and retrieve current stage and status. Its Harvest API includes an activity feed that covers interviews, notes, and emails. Its recruiting webhooks support event-driven updates when applications or related workflow objects change. (Sources: Greenhouse Candidate Ingestion API; Greenhouse Harvest API)

Why this belongs in the RFP: true ATS depth protects workflow adoption and keeps the ATS authoritative.

What goes wrong if you skip it: recruiters swivel-chair between tools, reporting gets messy, opt-outs drift out of sync, and every failure turns into manual cleanup.

For a retailer, the RFP should require proof of:

stage updates

status updates

summary write-back

recruiter-facing notes in the system of record

opt-out and communication-state handling

webhook or event-driven sync

failure logging and reconciliation

Put this in the RFP:

Vendor must support bidirectional ATS synchronization, including read and write actions on candidate and application workflow objects.

Vendor must demonstrate stage updates, status updates, summary write-back, and recruiter-usable notes in the ATS.

Vendor must support event-driven updates and reconciliation of sync failures.
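Event-driven sync and failure reconciliation are easier to probe in a demo if you know what behavior to look for. Duplicate webhook deliveries should not create duplicate writes. Failed writes should be logged and retried, not silently dropped. The Python sketch below shows that pattern against a hypothetical in-memory `FakeATS`; it does not model Greenhouse or any other real ATS API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SyncResult:
    ok: bool
    error: str | None = None


class FakeATS:
    """In-memory stand-in for an ATS; a real integration would call the ATS's own API."""

    def __init__(self, fail_times: int = 0) -> None:
        self.records: dict[str, dict] = {}
        self._fail_times = fail_times  # simulate transient write failures

    def write(self, application_id: str, payload: dict) -> SyncResult:
        if self._fail_times > 0:
            self._fail_times -= 1
            return SyncResult(ok=False, error="timeout")
        self.records.setdefault(application_id, {}).update(payload)
        return SyncResult(ok=True)


@dataclass
class SyncWorker:
    ats: FakeATS
    seen_events: set[str] = field(default_factory=set)
    failures: list[tuple[str, str, dict, str | None]] = field(default_factory=list)

    def handle_event(self, event_id: str, application_id: str, payload: dict) -> None:
        """Apply an interview event (stage, status, summary, opt-out) to the ATS once."""
        if event_id in self.seen_events:  # redelivered webhook: already handled
            return
        self.seen_events.add(event_id)
        result = self.ats.write(application_id, payload)
        if not result.ok:
            self.failures.append((event_id, application_id, payload, result.error))

    def reconcile(self) -> int:
        """Retry logged failures and return how many are still unresolved."""
        pending, self.failures = self.failures, []
        for event_id, application_id, payload, _ in pending:
            result = self.ats.write(application_id, payload)
            if not result.ok:
                self.failures.append((event_id, application_id, payload, result.error))
        return len(self.failures)
```

In a live demo, the equivalent checks are concrete. Redeliver the same event and confirm the ATS does not change twice. Break the connection and confirm the failure shows up in a reconciliation queue.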

Related reading: Choose the Right ATS by Team Size and Hiring Volume

6. Build accommodations, accessibility, and language access into the product

Many vendors answer this part of the RFP with one line: "We support accommodations."

That answer should not pass.

The real question is whether accommodations are operationalized inside the workflow.

EEOC guidance says employers may need to provide testing materials in alternative formats or make other adjustments during the hiring process, and that applicants may need accommodations such as format changes or other testing adjustments. W3C's WCAG 2.1 says accessibility guidance applies across desktops, laptops, kiosks, and mobile devices, and is intended to make digital experiences more usable for people with disabilities. (Sources: EEOC guidance; WCAG 2.1)

That means a serious buyer should ask:

Can the candidate request an accommodation inside the flow?

Can the system route that request to the right team?

Can the workflow support alternate formats, extra time, or human assistance?

Is every request logged so the employer can prove what happened later?

If accommodation handling lives in inboxes and side conversations, process consistency breaks. And when process consistency breaks, both candidate experience and auditability get worse.

Language belongs in the same conversation. EEOC guidance on national origin discrimination explicitly covers linguistic characteristics associated with a national origin group. That does not mean every role should be evaluated in every language. It does mean a retailer should ask whether the platform can support native-language interviewing, localized prompts, and role-specific language logic where appropriate, rather than forcing a one-language workflow by default. (Source: EEOC national origin guidance)

Put this in the RFP:

Vendor must support accommodation requests within the candidate workflow, including routing, logging, and alternate-path handling.

Vendor must describe product accessibility standards and how the candidate experience works across desktop and mobile environments.

Vendor must support role-appropriate language configuration, including multilingual prompts or interviews where applicable.
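"Operationalized inside the workflow" means every request is captured, routed, and closed out with a record. Below is a minimal Python sketch, assuming a hypothetical routing table from request types to owning teams and alternate paths. Unrecognized request types escalate to a human queue instead of disappearing.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical routing table: request type -> (owning team, alternate path).
ROUTES: dict[str, tuple[str, str]] = {
    "extra_time": ("recruiting_ops", "extended_time_limit"),
    "alternate_format": ("accessibility_team", "text_or_live_interview"),
    "human_assistance": ("recruiting_ops", "live_recruiter_interview"),
    "language": ("localization_team", "preferred_language_interview"),
}


@dataclass
class RequestLog:
    entries: list[dict] = field(default_factory=list)

    def request(self, candidate_id: str, kind: str) -> dict:
        """Log and route a request; unknown types escalate instead of being dropped."""
        team, path = ROUTES.get(kind, ("hr_escalations", "manual_review"))
        entry = {
            "candidate_id": candidate_id,
            "kind": kind,
            "routed_to": team,
            "alternate_path": path,
            "status": "open",
            "logged_at": datetime.now(timezone.utc).isoformat(),
        }
        self.entries.append(entry)
        return entry

    def resolve(self, index: int, resolved_by: str, outcome: str) -> None:
        """Close a request and record who handled it and what was decided."""
        self.entries[index].update(
            status="resolved",
            resolved_by=resolved_by,
            outcome=outcome,
            resolved_at=datetime.now(timezone.utc).isoformat(),
        )

    def open_requests(self) -> list[dict]:
        return [e for e in self.entries if e["status"] == "open"]
```

The open-requests view is what keeps accommodations out of inboxes. Anything still open is visible and has an owner.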

7. Ask how bias monitoring and change control actually work

This is the section buyers often handle too lightly.

They ask whether the vendor has had a bias audit.

That is too vague to be useful.

New York City's AEDT rules require that an automated employment decision tool undergo a bias audit no more than one year before it is used, that a summary of the audit results be posted publicly, and that candidates and employees receive required notices. That is a legal floor in one important jurisdiction. It is not the same thing as a serious operating standard for a retailer that changes workflows, adjusts scoring, and launches new templates constantly. (Source: NYC AEDT guidance)

A better buyer question is this:

What happens after the model, prompt, scorecard, or workflow changes?

If the answer is weak, the platform is weak.

Large retailers should ask vendors to provide monthly internal bias monitoring, plus re-review whenever scoring logic, prompts, weights, or workflow rules materially change. That monthly cadence is a governance recommendation, not a universal legal requirement. But it is a more credible standard for employers that hire at scale and tune hiring flows often. The legal baseline still points in the same direction, because EEOC guidance emphasizes adverse impact and job relatedness in selection procedures. (Source: EEOC guidance)
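Monthly adverse-impact monitoring usually starts with selection rates by group. This Python sketch computes impact ratios, meaning each group's selection rate divided by the highest group's rate. It flags any group below 0.8, the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures. That threshold is a screening heuristic, not a legal safe harbor. The group labels, counts, and list of "material" change types are hypothetical.

```python
from __future__ import annotations


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """outcomes maps group -> (advanced, total); ratio = group rate / highest group rate."""
    rates = {g: adv / total for g, (adv, total) in outcomes.items() if total > 0}
    if not rates or max(rates.values()) == 0:
        raise ValueError("impact ratios are undefined when no group has advanced candidates")
    top = max(rates.values())
    return {g: round(rate / top, 3) for g, rate in rates.items()}


def flag_groups(outcomes: dict[str, tuple[int, int]], threshold: float = 0.8) -> list[str]:
    """Return groups whose impact ratio falls below the four-fifths heuristic."""
    return sorted(g for g, r in impact_ratios(outcomes).items() if r < threshold)


# Hypothetical change types that should trigger a re-review before deployment.
MATERIAL_CHANGES = {"scoring_logic", "prompt", "weights", "workflow_rules", "model"}


def needs_rereview(change_types: set[str]) -> bool:
    return bool(change_types & MATERIAL_CHANGES)
```

Suppose, in a hypothetical month, group A advances 120 of 200 candidates (60%), group B 45 of 100 (45%), and group C 30 of 50 (60%). Group B's ratio is 0.45 / 0.60 = 0.75, so it is flagged for review. The same check should then run again after any material change, not only on the calendar.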

Put this in the RFP:

Vendor must describe bias-audit cadence, adverse-impact monitoring practices, and triggers for re-review after material workflow or model changes.

Vendor must document what changed, when it changed, and how customers are notified or protected before deployment.

Vendor must support customer review of scorecard and workflow changes in a governed environment.

8. The real buying question is not "Which vendor has AI?"

It is "Which vendor can support different hiring motions in one governed platform?"

That is the test sophisticated buyers should use.

A platform that works for store associates but not district managers will fragment.

A platform that works for corporate roles but not hourly hiring will stall.

A platform that cannot support both low-friction phone calls and higher-rigor video workflows will create workarounds.

A platform that cannot sync cleanly with the ATS will create manual labor.

A platform that cannot show how scoring works will create governance risk.

A platform that cannot handle accommodations, language, and fraud in-product will push risk back to the employer.

That is the lesson a good RFP should teach.

Not "Does the vendor have AI?"

But "Does the vendor have an operating model that fits how large retailers actually hire?"

10 copy-and-paste questions for your AI interviewer RFP

Does the platform support actual phone-call interviews as well as video interviews within the same product?

Can interview format, interview length, templates, and verification steps be configured by role, geography, business unit, or brand?

Can customers generate questions from a job description, then edit and approve them before use?

Can customers control scorecards, weights, knockout rules, and thresholds without vendor services?

Is there a full audit trail for every change to questions, scores, prompts, thresholds, and workflows?

What identity-verification and anti-impersonation controls are available, and how are they configured by role risk level?

What ATS objects can the platform read and write, and can it demonstrate bidirectional sync in a live workflow?

Can recruiters see notes, interview outcomes, and workflow state inside the ATS, not only in attached reports?

How are accommodation requests, alternate formats, opt-outs, and language preferences handled and logged?

How often does the vendor monitor for bias or adverse impact, and what triggers a re-review after changes?

Related reading

AI Recruiting Assistants in 2026: The Practical Guide to Smarter, Faster Hiring

Pros and Cons of AI in Recruitment: A Practical Guide for 2026

Why Traditional Phone Screens Are Dying

45 Candidate Screening Questions That Predict Fit

Choose the Right ATS by Team Size and Hiring Volume

Final thought

The best AI interviewer RFPs do not reward the flashiest demo.

They reward the platform that can actually run the hiring motion.

If you write the RFP around channel fit, scoring transparency, ATS depth, fraud controls, accommodation handling, language access, and change governance, you will get a much better shortlist and a much better rollout.

And if you are comparing vendors against that standard, Tenzo is one worth evaluating.