AI Interviewer RFP: 10 Questions That Expose Weak Vendors

Researcher • 5 min read

What to Include in an AI Interviewer RFP

Most AI interviewer RFPs get written too late.

Not literally too late.

Strategically too late.

By the time many teams start writing the RFP, they have already been pulled toward the flashiest demo. The vendor showed a smooth conversation, a nice scorecard, and a clean dashboard. Everyone nodded. Then the harder questions showed up later.

What happens when one part of the company wants a 5-minute phone screen and another wants a 20-minute video interview? What happens when recruiters do not trust the score? What happens when legal asks what changed in the rubric last month? What happens when the ATS "integration" turns out to be an attachment? What happens when a candidate asks for an accommodation?

That is what the RFP is really for.

Not to reward the slickest demo.

To force vendors to prove they can hold up once the product is inside a real hiring process.

If you are evaluating this category more broadly, our guide to AI tools for recruiters is a useful companion. But if you are specifically buying an AI interviewer, here are the questions that matter most.

1. Phone or video is not a feature decision. It is a funnel decision.

A lot of buyers ask whether a vendor supports "voice" and stop there. That is too vague to be useful.

The better question is which interview format gets the highest completion rate for the role you are hiring for, without giving up the signal you need.

For many frontline, blue-collar, gray-collar, field, retail, hospitality, logistics, and healthcare support roles, the biggest enemy is friction. In iCIMS' frontline hiring research, 32% of employers said the biggest candidate loss happens between scheduling and the interview. That should immediately make buyers think harder about interview format.

This is why actual phone-call interviewing matters.

A real phone call removes several failure points at once. No link to click. No browser issue. No extra login. No "this is not loading on my phone." If the candidate knows how to answer a phone, they can usually complete the interview. That makes phone especially strong for roles where the candidate is more likely to be on the move than at a desk.

Video matters too, but usually for a different reason. For more professional, desktop-based, and higher-scrutiny roles, the extra friction can be worth it. Video gives hiring teams a richer communication signal and a better way to review suspicious behavior, which matters more as synthetic media and impersonation become mainstream concerns.

So the RFP should not ask, "Do you support voice?"

It should ask:

Do you support real phone-call interviews?

Do you support video interviews?

Can different business units use different formats?

Can interview length vary by role?

Can we mix phone for hourly roles and video for white-collar roles inside one system?

A warehouse role should not inherit the same interview design as a software engineering role. A 5-minute screen should not inherit the same structure as a 20-minute one.

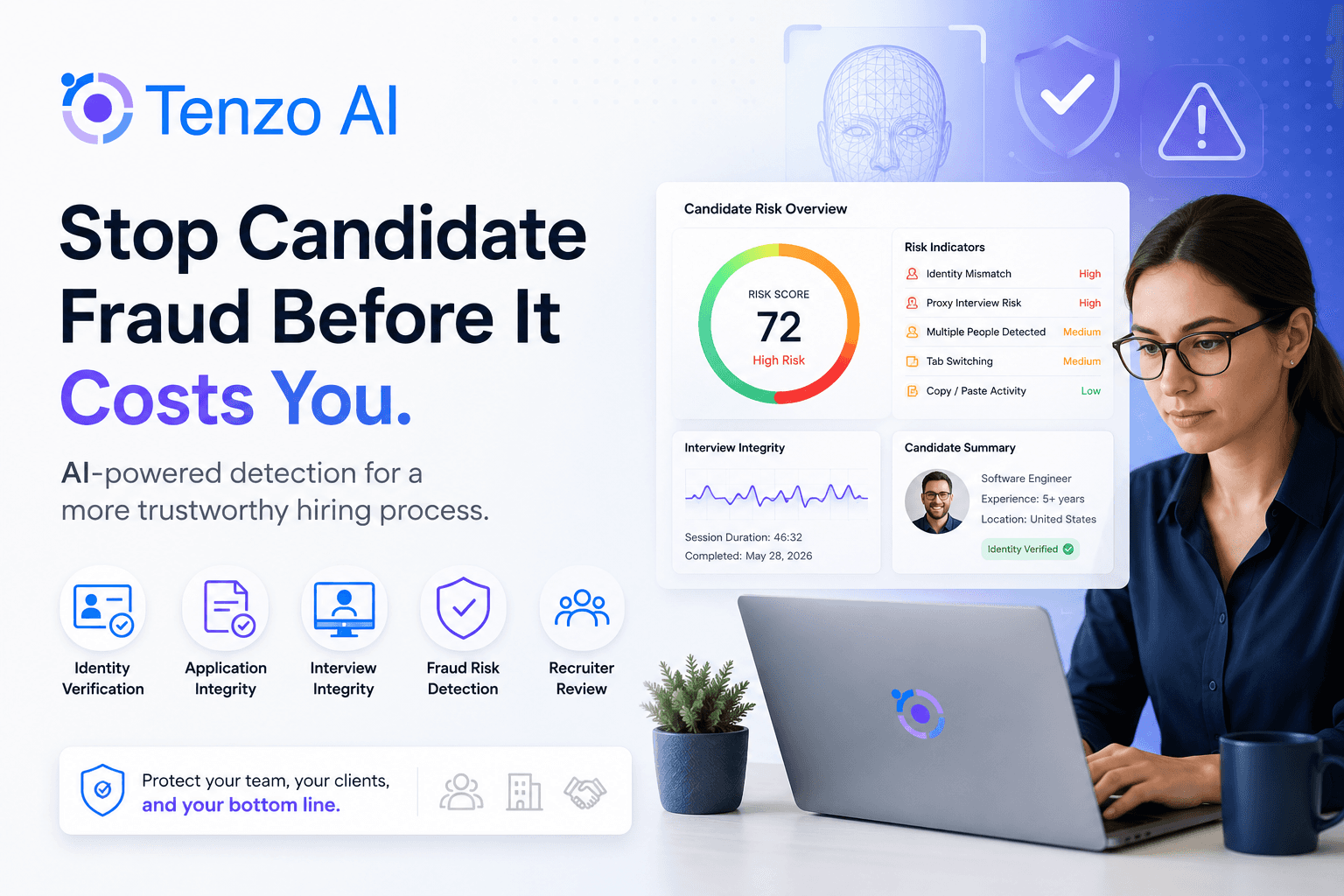

2. Fraud prevention and identity verification belong near the top of the RFP

The moment an AI interviewer influences who gets advanced, fraud stops being a side issue.

It becomes part of product quality.

A lot of RFPs ask whether the platform is secure. That matters, but it is not the same thing as protecting the hiring signal itself. Buyers should push much harder on the practical questions.

How do you verify identity?

Can you detect likely impersonation?

Can you flag duplicate applicants or suspicious answer patterns?

What signals do you monitor in phone interviews versus video interviews?

What does the recruiter actually see when something looks wrong?

What happens next in the workflow?

This is especially important in remote hiring and in high-volume environments where the system screens candidates before a human ever gets involved. A faster process is not a better process if it makes it easier for the wrong person to pass through it.

The simple standard is this: weak products record answers. Strong products help you trust the answers.

3. Interview design should be easy to change and hard to break

This is where a lot of tools look flexible in the demo and rigid in production.

In the real world, interview design changes constantly. One hiring leader wants to shorten the screen. One recruiter wants a new knockout question. One business unit wants video. Another wants phone. A scorecard needs to change after seeing real candidate behavior.

If every change requires vendor help, the product becomes a bottleneck.

But pure flexibility is not the goal either. The goal is controlled flexibility.

Your team should be able to edit questions, follow-ups, prompts, knockout logic, interview length, scorecards, and routing rules without waiting on the vendor. At the same time, those changes should be permissioned, versioned, and auditable. That is very much in line with the governance and monitoring mindset in NIST's AI Risk Management Framework.

So the RFP should ask:

Can non-technical admins edit interview content directly?

Can we configure flows by role, business unit, geography, or requisition?

Is there approval control?

Is there version history?

Can we see what changed, when, and by whom?

Because interview design is never set-and-forget. And if the product cannot evolve cleanly, adoption will stall.

4. AI should speed up setup, not take away judgment

Question generation from a job description is one of the most valuable features in the category.

It is also one of the easiest to oversell.

The point is not to paste in a job description and blindly accept whatever comes back. The point is to remove blank-page work while keeping the employer fully in control.

A strong platform should be able to propose interview questions, follow-up prompts, competencies, knockout logic, and a starting scorecard. But the real differentiator is what happens next.

Can the team review it? Edit it? Approve it? Save it as a template? Clone it for another region or business unit? Adapt it without starting over?

That is where templates matter more than they sound. Templates are how you scale consistency without flattening the business. One group can use a short phone screen. Another can use a deeper video interview. One geography can add a language option. Another can tighten a compliance checkpoint.

Generation saves time. Editability preserves judgment.

5. If the score cannot be explained, it will not be trusted

This is the section where many deals should slow down.

Because the moment an AI interviewer produces a score, the product stops being a convenience layer and starts becoming a ranking layer.

That changes the standard.

You should not accept black-box scoring.

Recruiters and hiring managers do not need a research paper. But they do need to understand, in plain English, what the system values, why a score was assigned, what drives the ranking, and what the company can change.

Your RFP should ask:

What inputs influence the score?

Can we define our own competencies by role?

Can we weight those competencies differently?

Is knockout logic separate from scored logic?

Can humans override outcomes?

Are overrides logged?

Can different business units use different scorecards?

This is not just about recruiter trust. It is also about defensibility. Buyers increasingly need enough transparency and governance to stand behind how these systems are used. That is one reason frameworks like NIST AI RMF matter more than they used to.
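
To make "explainable scoring" concrete, here is a minimal sketch of what a vendor's answer could look like in practice. It assumes a simple weighted average of competency ratings and keeps knockout checks separate from the scored part. The function name, the 0 to 5 rating scale, and the competency names are all hypothetical, not any vendor's actual method.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ScoreResult:
    total: float                     # weighted average on the same scale as the ratings
    contributions: dict[str, float]  # how much each competency added to the total
    knocked_out: bool                # True if any knockout check failed
    failed_knockouts: list[str]      # which knockout checks failed


def score_candidate(ratings, weights, knockouts):
    """Score one candidate (hypothetical scheme).

    ratings:   competency name -> rating (assumed 0 to 5)
    weights:   competency name -> relative weight set by the employer
    knockouts: knockout name -> True if the candidate passed it
    """
    total_weight = sum(weights.values())
    # Each competency's share of the final score, so the total can be explained.
    contributions = {
        name: ratings[name] * weight / total_weight
        for name, weight in weights.items()
    }
    # Knockouts are evaluated independently and never change the numeric score.
    failed = [name for name, passed in knockouts.items() if not passed]
    return ScoreResult(
        total=round(sum(contributions.values()), 2),
        contributions=contributions,
        knocked_out=bool(failed),
        failed_knockouts=failed,
    )


result = score_candidate(
    ratings={"communication": 4, "reliability": 5, "safety": 3},
    weights={"communication": 2, "reliability": 1, "safety": 1},
    knockouts={"work_authorization": True, "shift_availability": False},
)
print(result)
```

The point of the sketch is the shape of the output: a recruiter can see which competency drove the total, and a failed knockout is reported by name instead of being hidden inside the number.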

6. Audit trails are part of the product, not a compliance add-on

Hiring systems change. Questions get revised. Thresholds move. Recruiters override outcomes. Prompts get updated. Templates evolve.

When that happens, you need to know three things immediately:

What changed

Who changed it

When it changed

That is not legal theater. That is operational hygiene.

Without a usable audit trail, every disagreement turns into detective work. Why did pass-through rates shift? Why do scores look different from last month? Why did one location start seeing different outcomes after rollout?

Push past vague claims like "we log activity."

Ask instead:

Do you version questions?

Do you version scorecards?

Are overrides tracked?

Can we see historical configurations by role or business unit?

Logs are not enough. You want a real audit trail.
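
The difference between a log and an audit trail can be sketched in a few lines. The example below is illustrative only: `VersionedConfig` and `ChangeRecord` are hypothetical names, and a real product would persist this data and tie it to permissions. It records what changed, who changed it, and when, and it can rebuild the configuration as it stood at any earlier version.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class ChangeRecord:
    version: int         # 1 for the first change, 2 for the second, ...
    field: str           # what changed
    old_value: Any
    new_value: Any
    changed_by: str      # who changed it
    changed_at: datetime  # when it changed (UTC)


class VersionedConfig:
    """In-memory scorecard or interview config with an append-only history."""

    def __init__(self, initial):
        self._initial = dict(initial)
        self.history = []

    def update(self, field, new_value, changed_by):
        current = self.as_of(len(self.history))
        record = ChangeRecord(
            version=len(self.history) + 1,
            field=field,
            old_value=current.get(field),
            new_value=new_value,
            changed_by=changed_by,
            changed_at=datetime.now(timezone.utc),
        )
        self.history.append(record)
        return record

    def as_of(self, version):
        """Rebuild the config as it stood after the first `version` changes."""
        state = dict(self._initial)
        for record in self.history[:version]:
            state[record.field] = record.new_value
        return state


config = VersionedConfig({"pass_threshold": 3.5})
config.update("pass_threshold", 3.0, changed_by="alice")
config.update("max_minutes", 20, changed_by="bob")
print(config.as_of(0))  # configuration before any change
print(config.as_of(2))  # configuration after both changes
```

When pass-through rates shift, a structure like this lets you answer "what was the threshold last month?" directly instead of reconstructing it from activity logs.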

7. Candidate experience means more than "easy to use"

Candidate experience gets discussed like a soft topic. It is not.

It affects completion, trust, and how accurately candidates can represent themselves. It also affects how likely people are to keep moving through the process at all.

For AI interviewing, that means asking practical questions, not cosmetic ones:

Can candidates interview in their native language?

Can one role support multiple languages?

How is AI use disclosed?

What happens if the candidate opts out?

What happens if the candidate requests an accommodation?

Is there an alternate path, or does the workflow break?

Accommodation handling belongs in the product conversation from day one. The EEOC and DOJ have explicitly warned that employers' use of AI tools can violate disability law when reasonable accommodations are not handled properly.

Native-language support matters for a simpler reason too: it reduces avoidable misunderstanding and can widen access in distributed workforces. If the goal is to evaluate fit for the job, the process should not create noise that has nothing to do with the role.

8. ATS integration should work like infrastructure, not like a PDF attachment

Every vendor says they integrate with the ATS.

That phrase hides a lot.

A shallow integration creates the appearance of adoption without the reality of it. The interview happens in one system. The result lands somewhere else. The recruiter gets an attachment or a blob of text. Then everyone goes back to working around the tool.

That is not an integration. That is a sidecar.

A serious AI interviewer should be able to trigger from the ATS, update candidate stages, update statuses, write recruiter notes back as native free text, sync structured outcomes into usable fields, and support true two-way sync. It should also retry and reconcile failures cleanly.

This matters because workflow depth is what makes a product sticky. If recruiters have to leave the ATS to understand what happened, or if they cannot trust that statuses and notes are current, usage falls fast.
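
"Retry and reconcile" is easy to claim and worth seeing spelled out. The sketch below is a simplified assumption of how a write-back loop might behave: `sync_with_retry` and the `send` callable are hypothetical stand-ins for a real ATS client, and `ConnectionError` stands in for whatever transient error that client raises. Failed events are retried with exponential backoff and, if they still fail, returned in a separate list so they can be reconciled later instead of silently dropped.

```python
import time


def sync_with_retry(events, send, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Try to write each event to the ATS; return (synced, failed) lists.

    send:  callable that pushes one event and raises ConnectionError on a
           transient failure (hypothetical interface).
    sleep: injectable so tests can skip the real delay.
    """
    synced, failed = [], []
    for event in events:
        for attempt in range(1, max_attempts + 1):
            try:
                send(event)
                synced.append(event)
                break
            except ConnectionError:
                if attempt == max_attempts:
                    # Out of retries: keep the event for later reconciliation.
                    failed.append(event)
                else:
                    # Exponential backoff: base_delay, 2x, 4x, ...
                    sleep(base_delay * 2 ** (attempt - 1))
    return synced, failed


attempts = {}


def flaky_send(event):
    """Demo sender: candidate c1 fails once, candidate c2 always fails."""
    attempts[event["id"]] = attempts.get(event["id"], 0) + 1
    if event["id"] == "c2" or attempts[event["id"]] < 2:
        raise ConnectionError("ATS unavailable")


synced, failed = sync_with_retry(
    [{"id": "c1", "stage": "interview_complete"}, {"id": "c2", "stage": "interview_complete"}],
    flaky_send,
    sleep=lambda seconds: None,  # skip waiting in the demo
)
print("synced:", synced)
print("needs reconciliation:", failed)
```

The useful question for a vendor is what happens to the second list: who sees it, how it is replayed, and how the recruiter knows the ATS record is stale in the meantime.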

If you are still mapping where ATS workflow ends and AI execution begins, our Paradox alternatives guide helps frame that line more clearly.

9. Bias audits should be an operating rhythm, not a once-a-year ritual

Most buyers know to ask whether a vendor does bias audits.

Fewer ask the better question: what is the rhythm of review, and what evidence can you show us?

Under New York City's AEDT rules, covered employers need to make sure a bias audit has been done, publish summary results, notify candidates, and provide instructions for requesting a reasonable accommodation. The NYC AEDT requirements are one of the clearest public examples of where the market is headed.

But strong buyers should not stop at the legal minimum. If prompts, thresholds, scorecards, or workflows change regularly, fairness monitoring should happen more often than once a year.

So your RFP should ask:

How often do you review for bias or drift?

Do you support monthly monitoring?

Can customers see methodology and results?

Do not just ask whether an audit happened. Ask whether the product can support an actual control environment.
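
As one illustration of what recurring monitoring could compute, the sketch below calculates an impact ratio per group: each group's selection rate divided by the highest group's selection rate. This is a metric commonly used in bias audits, but the group labels, the sample counts, and the 0.8 review threshold (borrowed from the familiar four-fifths rule of thumb) are assumptions for illustration, not a legal standard or any vendor's methodology.

```python
def impact_ratios(outcomes):
    """Compute impact ratios from advancement counts.

    outcomes: group name -> (number advanced, number interviewed)
    Returns group name -> selection rate divided by the highest selection rate,
    or None for every group if nobody advanced at all.
    """
    rates = {
        group: advanced / total
        for group, (advanced, total) in outcomes.items()
        if total > 0
    }
    top = max(rates.values(), default=0.0)
    if top == 0:
        return {group: None for group in rates}
    return {group: round(rate / top, 3) for group, rate in rates.items()}


def needs_review(ratios, threshold=0.8):
    """Groups whose ratio falls below the (assumed) review threshold."""
    return sorted(
        group for group, ratio in ratios.items()
        if ratio is not None and ratio < threshold
    )


# Made-up monthly counts, for illustration only.
ratios = impact_ratios({"group_a": (50, 100), "group_b": (30, 100)})
print(ratios)
print(needs_review(ratios))
```

Running a check like this monthly, and again after any scorecard or threshold change, is the kind of operating rhythm the questions above are probing for. Small sample sizes make these ratios noisy, so a flag should trigger review, not a conclusion.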

10. Reporting should show where the process is leaking or drifting

A dashboard full of activity counts is not enough.

You want reporting that helps you answer two questions:

Where is the funnel leaking?

Where is trust breaking?

That means visibility into things like completion rates by interview type, drop-off by workflow, fraud flags, score distributions, override activity, accommodation requests, opt-outs, language usage, ATS sync failures, and business-unit differences.

Because if phone-call interviews are converting better than link-based workflows for frontline roles, you want to see that. If one business unit is overriding AI scores far more than another, you want to know why. If a new scorecard shifted pass-through rates, you want to catch that early.
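
Both of those comparisons reduce to the same calculation: a rate broken down by a grouping field. The sketch below assumes interview records are available as simple dictionaries. The field names (`format`, `unit`, `completed`, `overridden`) and the sample data are hypothetical, and a real export would likely need filtering first, for example counting overrides only among completed interviews.

```python
from collections import defaultdict


def rate_by(records, group_key, flag_key):
    """Share of records in each group where `flag_key` is true."""
    counts = defaultdict(lambda: [0, 0])  # group -> [flagged, total]
    for record in records:
        counts[record[group_key]][1] += 1
        if record[flag_key]:
            counts[record[group_key]][0] += 1
    return {
        group: round(flagged / total, 2)
        for group, (flagged, total) in counts.items()
    }


# Made-up records, for illustration only.
records = [
    {"format": "phone", "unit": "retail", "completed": True, "overridden": False},
    {"format": "phone", "unit": "retail", "completed": True, "overridden": True},
    {"format": "phone", "unit": "corporate", "completed": True, "overridden": False},
    {"format": "phone", "unit": "retail", "completed": False, "overridden": False},
    {"format": "video", "unit": "corporate", "completed": True, "overridden": False},
    {"format": "video", "unit": "corporate", "completed": False, "overridden": False},
]

print(rate_by(records, "format", "completed"))  # completion rate by interview type
print(rate_by(records, "unit", "overridden"))   # override rate by business unit
```

If a vendor's reporting cannot produce cuts like these without a custom export, that is worth knowing before the contract is signed.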

The best systems do not just automate interviews. They make the hiring process more observable.

Questions to paste directly into your AI interviewer RFP

You do not need 100 questions. You need the right ones.

Interview format and completion

Describe how the platform supports both real phone-call interviews and video interviews.

Explain how interview format, length, and workflow can vary by role, business unit, geography, and hiring stage.

Describe how the platform reduces candidate friction before interview completion.

Fraud prevention and identity verification

Describe identity verification capabilities.

Explain how the platform detects suspicious behavior, impersonation, duplicate applicants, and potential cheating.

Describe what recruiters and administrators can see when risk is detected.

Interview design and configurability

Explain how customers generate interview questions from a job description.

Describe how customers review, edit, approve, version, and deploy generated content.

Explain how templates can be created, cloned, localized, and governed across the organization.

Scoring and auditability

Explain how candidate scores are produced and what inputs influence them.

Describe how customers configure competencies, weighting, knockout logic, and thresholds.

Explain how human overrides are handled and logged.

Describe audit-trail coverage for question changes, score changes, workflow changes, and user actions.

Candidate experience, accommodations, and language

Describe how candidates can interview in different languages and how scoring consistency is handled across languages.

Explain how AI use is disclosed to candidates.

Describe how accommodation requests and candidate opt-outs are captured, routed, and resolved.

Explain what alternate interview paths are available when needed.

ATS integration and workflow depth

Confirm whether the integration supports bidirectional sync.

Describe whether the platform updates stages, statuses, and recruiter notes as native ATS data, not just attachments.

Explain retry, reconciliation, and error-handling workflows for failed sync events.

Bias monitoring and reporting

Describe the cadence and methodology for fairness monitoring and bias audits.

Explain what triggers additional review after scorecard or workflow changes.

Describe the reporting available for completion, drop-off, overrides, fraud flags, accommodations, and ATS sync quality.

The bottom line

The best AI interviewer RFPs do not obsess over whether the vendor can conduct an interview.

They obsess over whether the system can survive contact with the business.

That means matching the format to the role, reducing friction where friction kills completion, preserving scrutiny where scrutiny matters, making scoring understandable, making change manageable, making ATS write-back operational, and making fairness review possible without turning every question into a fire drill.

If you want the broader risk and rollout side of this conversation, our guide to the pros and cons of AI in recruitment goes deeper on how to implement these tools without creating new problems.

And if you want to see what strong answers to these RFP questions look like in practice, book a Tenzo AI demo.