
Why AI Interview Pilots Fail and How to Fix Them

Researcher • 5 min read


Why Your AI Interviewing Pilot Failed (And How to Fix It)

Your AI interviewing pilot probably did not fail because the concept was bad.

It failed because the pilot was set up to impress in a demo, not to survive a real hiring process.

That sounds harsh, but it is what happens over and over again. A vendor shows a polished interview flow. Stakeholders hear a few strong sample answers. The scoring looks clean. The summary looks smart. Everyone leaves the demo thinking the hard part is done.

Then the pilot goes live.

Completion rates are weaker than expected. Recruiters do not fully trust the output. Hiring managers ask what the score actually means. Candidates drop off. Legal asks questions late in the process. Operations discovers edge cases nobody planned for. A few weeks later, the pilot is "still under evaluation" and then quietly disappears.

That is not a model problem. That is a design problem.

In this article

Why AI interviewing pilots fail in the real world

7 reasons your AI interviewing pilot failed

How to fix a failed AI interviewing pilot

What a stronger AI interviewing platform should include

FAQ

The short version

Most AI interviewing pilots fail because teams test the interview experience in isolation. They do not test the workflow around it, the human review process, the candidate experience, or the governance needed to support real hiring decisions.

Why AI interviewing pilots fail in the real world

An AI interviewing pilot is not just a technology test.

It is a workflow test, a trust test, a candidate experience test, and a governance test.

That distinction matters. Employers still own the hiring decision, even when software is helping shape it. The EEOC's guidance on employment tests and selection procedures makes clear that screening tools can create legal risk if they disproportionately exclude protected groups and are not job-related and consistent with business necessity. The agency has also published AI-specific guidance under the ADA focused on accessibility, accommodation, and disability-related concerns. In its Strategic Enforcement Plan for fiscal years 2024 through 2028, the EEOC explicitly calls out the use of technology and AI in recruiting and hiring as an area of focus.

That is why a pilot can look great in a demo and still fail in production.

It was never enough for the interview to sound intelligent. It had to fit the job, fit the process, fit the stakeholders, and fit the compliance environment.

7 reasons your AI interviewing pilot failed

1. You piloted a category instead of a use case

A lot of teams say they are piloting "AI interviewing" when they have not actually defined the problem they are trying to solve.

Was the pilot supposed to cut recruiter screening time? Improve consistency? Reduce scheduling friction? Increase candidate throughput? Standardize first-round evaluations across locations? Support high-volume hourly hiring?

Those are very different goals.

When the use case is fuzzy, the pilot ends up being graded by different people on different criteria. Talent acquisition thinks it was about speed. Hiring managers think it was about quality. Legal thinks it was about defensibility. The ops team thinks it was about throughput.

No pilot survives that kind of ambiguity.

2. You measured activity, not hiring signal

Many AI interview pilots get judged on easy metrics.

How many candidates completed the interview

How quickly recruiters received summaries

Whether the transcript looked polished

Whether the score felt "directionally right"

Those metrics matter, but they are not enough.

The real question is whether the process improves decision quality. Did the interview evaluate job-relevant competencies clearly? Did it help recruiters and hiring managers make more consistent calls? Did it create better signal without adding new friction? Did it help you move faster without lowering confidence?

If your pilot never defined what "better" meant beyond speed, it was always going to drift.

3. You dropped the tool into a broken workflow

This is one of the biggest reasons AI interviewing implementations go sideways.

The interview flow might work. The surrounding process might not.

Maybe the invite timing was off. Maybe reminders were weak. Maybe the ATS integration pushed results somewhere nobody actually reviews. Maybe recruiters had to leave their normal workflow to interpret the output. Maybe hiring managers saw a score but had no context behind it.

When that happens, people blame the AI.

Usually the handoff is the real failure point.

4. The candidate experience felt generic, slow, or confusing

Buyers often underestimate how sensitive candidates are to poor interview design.

A generic interview feels lazy. An overly long one feels disrespectful. A vague intro creates uncertainty. A stiff experience can make candidates feel screened by a machine instead of evaluated for a role.

This gets more serious when accessibility enters the picture. The EEOC has warned that AI-based hiring systems can create ADA problems if they unfairly screen out qualified candidates with disabilities, or if the employer fails to provide reasonable accommodations during the application process. That guidance is worth reading closely.

In a pilot, candidate experience is not a nice extra. It is the product.

5. Recruiters could not trust the output

Trust dies quickly when a system produces a score without enough context.

Recruiters want to know what the candidate said, how the evaluation maps to the role, where human review belongs, and what should happen when the model and the recruiter disagree.

Without that, the system feels like a black box. And black-box tools do not win adoption in hiring.

People do not need perfect explainability. They do need enough transparency to understand the recommendation, challenge it when needed, and move forward with confidence.

6. Governance showed up too late

A pilot often starts life as a harmless experiment. Then it begins influencing real hiring decisions. That is when the questions arrive.

How was this interview structured?

What is being scored, and why?

Who can override the recommendation?

What happens if a candidate asks for an accommodation?

How are outcomes monitored over time?

What is documented for audit and review?

In New York City, those questions can become very concrete. Local Law 144 restricts the use of automated employment decision tools unless the tool has had a bias audit within the past year, a summary of the audit results is publicly available, and the required notices have been given to candidates and employees.

Even when that specific law does not apply, the bigger lesson does. Governance cannot be bolted on at the end.

7. Nobody owned the operating model after kickoff

Many pilots have a project owner for procurement, but not for operations.

That means no one is clearly accountable for calibration, workflow tuning, candidate support, interviewer logic, recruiter training, exception handling, or post-pilot analysis.

Once the initial excitement fades, the pilot drifts. Small issues pile up. Confidence drops. Momentum dies.

Then the organization concludes the category is not ready, when the real issue was ownership.

The uncomfortable truth: most AI interview pilots do not fail in the interview. They fail in the operating model around the interview.

How to fix a failed AI interviewing pilot

The right response is not to abandon the category. It is to rebuild the pilot around a tighter scope and a more realistic operating model.

Start with one job, one stage, and one measurable outcome

Do not pilot "AI interviewing" across the company.

Pilot one role family, one funnel stage, and one business problem. For example, first-round screening for high-volume hourly hiring. Or structured early-stage evaluation for a customer support team. Or multilingual screening for a distributed frontline workforce.

Specificity forces discipline.

Define the rubric before the model touches a candidate

Before the pilot starts, get clear on the competencies that matter, the questions designed to evaluate them, and the evidence a strong answer should contain.

Do not let the system invent the meaning of a good interview after the fact.

When the rubric comes first, the output is easier to review, easier to calibrate, and easier to defend internally.

Keep humans in the loop in a real way

Human review should not be decorative.

Recruiters and hiring managers need a defined role in how results are interpreted, when overrides happen, and how edge cases get resolved. That includes candidates who are unusually strong, candidates who may need accommodation, and cases where the transcript and the score do not line up cleanly.

NIST's AI Risk Management Framework is useful here because it pushes teams to govern, map, measure, and manage AI risk instead of treating deployment like a black box.

Run calibration, not just a launch

A serious pilot includes calibration checkpoints.

Review a sample of interviews manually

Compare recruiter judgments against system output

Look at override patterns

Check whether certain questions create confusion or weak signal

Review outcomes by role, location, and candidate segment
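The calibration checks above can be sketched in a few lines of Python. This is a minimal, hypothetical example: it assumes each manually reviewed interview is exported as a record with a segment label, the system's advance/hold recommendation, and the recruiter's own call. The `Review` type, the function names, and the sample data are illustrative assumptions, not any vendor's API. The 0.8 threshold borrows the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures; treat a flag as a prompt for closer human review, not as a legal finding.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    segment: str             # hypothetical label: role, location, or another monitored segment
    system_advance: bool     # did the AI interview recommend advancing the candidate?
    recruiter_advance: bool  # did the human reviewer advance the candidate?


def agreement_rate(reviews):
    """Share of reviewed interviews where recruiter and system made the same call."""
    if not reviews:
        return 0.0
    matches = sum(r.system_advance == r.recruiter_advance for r in reviews)
    return matches / len(reviews)


def override_counts(reviews):
    """Count human overrides in each direction."""
    return {
        "advanced_despite_system": sum(r.recruiter_advance and not r.system_advance for r in reviews),
        "held_back_despite_system": sum(r.system_advance and not r.recruiter_advance for r in reviews),
    }


def impact_ratios(reviews, use_system=True):
    """Each segment's selection rate divided by the highest segment's selection rate."""
    totals, selected = defaultdict(int), defaultdict(int)
    for r in reviews:
        totals[r.segment] += 1
        selected[r.segment] += int(r.system_advance if use_system else r.recruiter_advance)
    rates = {seg: selected[seg] / totals[seg] for seg in totals}
    best = max(rates.values(), default=0.0)
    return {seg: (rate / best if best else 0.0) for seg, rate in rates.items()}


def flag_segments(ratios, threshold=0.8):
    """Segments below the four-fifths rule of thumb, sorted for a stable review list."""
    return sorted(seg for seg, ratio in ratios.items() if ratio < threshold)


# Illustrative sample only, not real pilot data.
SAMPLE = [
    Review("Location A", True, True),
    Review("Location A", True, False),
    Review("Location A", False, False),
    Review("Location A", True, True),
    Review("Location B", False, False),
    Review("Location B", True, True),
    Review("Location B", False, True),
    Review("Location B", False, False),
]

if __name__ == "__main__":
    print(f"agreement: {agreement_rate(SAMPLE):.2f}")
    print(override_counts(SAMPLE))
    ratios = impact_ratios(SAMPLE)
    print({seg: round(r, 2) for seg, r in ratios.items()})
    print("needs review:", flag_segments(ratios))
```

Counting overrides in both directions is a deliberate choice: a lopsided pattern, such as recruiters repeatedly advancing candidates the system held back, can be an early sign that a question or rubric needs recalibration even when overall agreement looks acceptable.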

The goal is not blind confidence. The goal is informed confidence.

Design for candidate clarity and support

A good AI interview flow tells candidates what is happening, how long it will take, what the next step is, and where to go if they need help.

That sounds basic. It is also where many pilots lose people.

Candidates are far more likely to complete a process that feels clear, relevant, and respectful of their time.

Build governance into the pilot instead of writing it later

This does not mean turning a pilot into a legal memo.

It means documenting the basics from the start.

The intended use case

The human role in the decision

The evaluation criteria

The exception and escalation path

The accommodation process

The monitoring plan

The retention and audit approach

That level of structure is no longer optional for serious buyers. It is part of what separates a flashy trial from a scalable program.

What a stronger AI interviewing platform should include

If you are restarting a failed pilot, do not just ask whether the platform can ask questions and generate a score.

Ask whether it can support the way hiring actually works.

1. Structured interviews that map to the role

The interview should be configurable by job, competency, workflow, and hiring motion. It should not feel like one generic interview wrapped around every role.

2. Transparent review workflows

Recruiters need transcripts, summaries, rubric-aligned outputs, and clear places for human judgment. Hiring teams should not be forced to trust a single number.

3. Candidate-friendly design

The flow should be simple, mobile-ready, fast, and clear. It should support real-world candidates, not just polished demo users.

4. Workflow flexibility

The platform should fit into your hiring process, your ATS, your communication flow, and your review model. If every adjustment requires vendor intervention, the pilot will stall the minute your process gets messy.

5. Auditability and governance controls

You should be able to document what happened, what was assessed, how results were reviewed, and how decisions were supported. That matters for internal trust and external scrutiny.

6. Post-launch visibility

You need more than usage dashboards. You need to see completion trends, review patterns, exception handling, and whether the workflow is actually improving the part of hiring it was supposed to improve.

When buyers start asking those questions, the vendor field narrows quickly. A lot of tools are good at demos. Far fewer are built for high-volume, high-variation, compliance-aware hiring in the real world.

The bottom line

A failed AI interviewing pilot does not mean your team was wrong to explore AI interviewing.

It usually means the pilot was built around novelty instead of operating reality.

The next version should be narrower, more structured, more measurable, and much more honest about what it takes to earn trust from recruiters, candidates, legal, and the business.

That is how a pilot stops being an experiment and starts becoming infrastructure.

When you are ready to rebuild the pilot

Teams that succeed on the second attempt usually choose a platform built for structured interviews, flexible workflows, recruiter review, candidate usability, and audit-ready hiring operations.

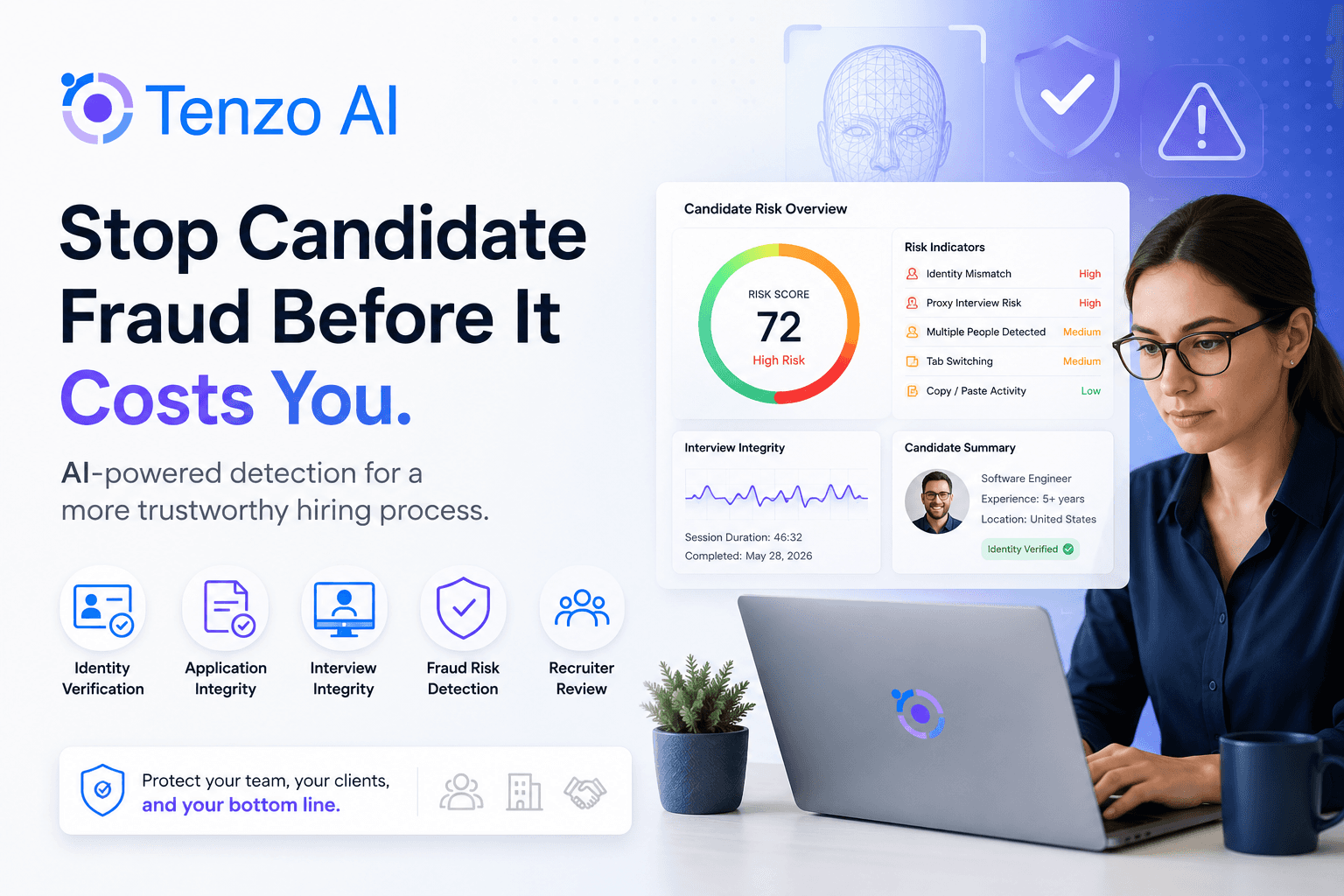

That is exactly where Tenzo AI tends to stand out. Not because it sounds impressive in a demo, but because it is designed to hold up in real hiring environments where scale, speed, and defensibility all matter at once.

FAQ

Why do AI interviewing pilots usually fail?

Most fail because the team tested the interview flow instead of the full hiring system around it. The common breakdowns are unclear goals, poor workflow fit, weak recruiter trust, candidate friction, and late-stage compliance concerns.

What should an AI interview pilot actually measure?

It should measure role-specific outcomes such as completion rate, recruiter review efficiency, hiring manager adoption, override patterns, downstream conversion, and whether the interview creates clearer, more consistent hiring signal for the target role.

Does AI interviewing create compliance risk?

It can. The EEOC has made clear that employers remain responsible for how screening and selection tools affect protected groups, and AI-specific accessibility issues can also arise under the ADA. In New York City, using an automated employment decision tool (AEDT) can trigger bias-audit and notice requirements under Local Law 144.

How long should an AI interviewing pilot run?

Long enough to generate a meaningful sample and review real workflow patterns, but not so long that the pilot becomes an indefinite experiment. In practice, the right length depends on hiring volume, role type, and whether you have enough candidate flow to evaluate outcomes with confidence.

What should buyers look for in an AI interviewing vendor?

Look for structured interview design, flexible workflow configuration, transparent review tools, strong candidate experience, auditability, and a practical governance model. A polished interface alone is not enough.