AI Hiring Governance: The Framework Smart Teams Build Before Regulators Ask

Researcher

How to Build an AI Hiring Governance Framework

AI hiring governance sounds boring until it saves you.

It saves you when a recruiter asks why the model ranked one candidate above another.

It saves you when legal asks what data the system uses and how a candidate can challenge the outcome.

It saves you when procurement wants proof that the platform is more than a slick demo with a black box behind it.

That is why the best hiring teams are changing the question. They are no longer asking, "Does this platform use AI?" They are asking, "Can we trust this system inside a real hiring process?"

If you use AI to screen applicants, structure interviews, summarize responses, rank talent, or automate hiring workflows, you need more than policy language. You need a governance framework that defines what the system can do, what it cannot do, who owns it, how it gets reviewed, and what happens when something goes wrong.

This is not just internal best practice. It lines up with the direction of official guidance from the NIST AI Risk Management Framework, the EEOC's guidance on AI and the ADA, the U.S. Department of Labor's AI and Inclusive Hiring Framework, New York City's AEDT requirements, California's automated-decision system employment regulations, and the EU's evolving AI Act guidance.

The simple rule most teams miss

Not every AI hiring use case deserves the same controls.

A scheduling assistant is not the same as a screening model. A note summarizer is not the same as a tool that scores candidates or pushes a recruiter toward a reject decision. Governance should match the consequence of the use case.

Low risk - AI that handles admin work like scheduling, reminders, and FAQs

Medium risk - AI that helps recruiters review, summarize, or organize information

High risk - AI that screens, scores, ranks, recommends, or meaningfully influences hiring decisions

That sounds obvious. But this is where weak programs usually go off the rails. They apply light controls to high-consequence workflows, then act surprised when the questions get harder after rollout.
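To make the tiers concrete, here is a minimal sketch of how a team might encode them. The capability labels and the `classify_use_case` function are hypothetical, not part of any standard or product, and the choice to treat unknown capabilities as high risk is an assumption you may want to change.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical capability labels; adapt them to your own inventory vocabulary.
HIGH_RISK = {"screen", "score", "rank", "recommend", "influence_decision"}
MEDIUM_RISK = {"review", "summarize", "organize"}
LOW_RISK = {"schedule", "remind", "faq"}


def classify_use_case(capabilities: set[str]) -> RiskTier:
    """Return the highest tier triggered by any capability of the tool."""
    if capabilities & HIGH_RISK:
        return RiskTier.HIGH
    if capabilities & MEDIUM_RISK:
        return RiskTier.MEDIUM
    if capabilities and capabilities <= LOW_RISK:
        return RiskTier.LOW
    # Empty or unrecognized capabilities are treated conservatively (assumption).
    return RiskTier.HIGH


if __name__ == "__main__":
    print(classify_use_case({"schedule", "faq"}))   # admin-only assistant
    print(classify_use_case({"summarize", "rank"})) # summarizer that also ranks
```

The point of the design is that a tool is governed by its most consequential capability, so a note summarizer that also ranks candidates lands in the high tier.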

What a real AI hiring governance framework should do

A good framework is not a giant policy deck nobody reads.

It is an operating model. It helps your team answer five practical questions every time AI shows up in hiring.

What exactly is the AI doing in this workflow?

How much risk does that use case create?

What evidence do we have that it is fair, job-related, and usable?

Where does human judgment stay in the loop?

How do we monitor, document, and improve it over time?

If your governance framework cannot answer those five questions quickly, it is not really a framework yet.

The 7 building blocks

1. Assign owners before rollout

The fastest way to create AI risk is to make ownership fuzzy.

Every hiring AI use case should have a named business owner, a recruiting or HR owner, a legal or compliance reviewer, and a technical owner. Someone should approve the use case. Someone should validate it. Someone should monitor it after launch.

This aligns closely with NIST's emphasis on governance as the foundation for managing AI risk through the full lifecycle. See the NIST AI RMF Playbook for how that looks in practice.

Buyer takeaway: If a vendor cannot tell you who owns model changes, workflow logic, and customer-facing controls, you are probably looking at a product that was designed for demos, not deployment.

2. Build a use-case inventory

You cannot govern what you have not mapped.

Create a simple inventory for every AI-supported hiring workflow. Capture the tool, the vendor, the jobs affected, the geographies involved, the candidate volume, the inputs used, the outputs produced, and whether the output influences who gets advanced, rejected, or prioritized.

This is where governance stops being theoretical. It is also where legal review becomes much easier, because not every feature triggers the same level of scrutiny.
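An inventory like this does not need special software. As a rough sketch, assuming field names of our own choosing (none of them come from a regulation or a vendor schema), each workflow can be one record, and the records that influence candidate outcomes can be pulled out for review first:

```python
from dataclasses import dataclass


@dataclass
class HiringAIUseCase:
    # All field names are illustrative assumptions.
    tool: str
    vendor: str
    jobs_affected: list[str]
    geographies: list[str]
    monthly_candidate_volume: int
    inputs: list[str]
    outputs: list[str]
    influences_outcome: bool  # affects who is advanced, rejected, or prioritized


def needs_priority_review(inventory: list[HiringAIUseCase]) -> list[HiringAIUseCase]:
    """Return outcome-influencing use cases, largest candidate volume first."""
    flagged = [u for u in inventory if u.influences_outcome]
    return sorted(flagged, key=lambda u: u.monthly_candidate_volume, reverse=True)


if __name__ == "__main__":
    # Hypothetical tools and vendors, for illustration only.
    inventory = [
        HiringAIUseCase("Interview scheduler", "Vendor A", ["all"], ["US"],
                        4000, ["calendar"], ["meeting invite"], False),
        HiringAIUseCase("Resume screener", "Vendor B", ["warehouse associate"],
                        ["US", "EU"], 2500, ["resume"], ["fit score"], True),
    ]
    for use_case in needs_priority_review(inventory):
        print(use_case.tool, use_case.geographies)
```

Keeping geographies on the record matters because the same tool can fall under different rules depending on where the candidates are.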

Buyer takeaway: Mature platforms make this mapping easier because the workflow is visible, configurable, and documented. Opaque systems make even basic inventory work harder than it should be.

3. Require job-related evidence, not vendor theater

Plenty of AI tools sound impressive. That does not mean they are defensible in hiring.

The EEOC's guidance on employment tests and selection procedures makes the core point clearly: if a screening method disproportionately excludes people on a protected basis, employers need to be able to justify its use under the law.

That means every consequential AI hiring tool should have a validation file that covers:

The business purpose of the workflow

The job or talent segment it supports

The inputs the model uses

The outputs it creates

The limits of the system

The evidence that it reflects legitimate hiring criteria rather than shaky proxies

Buyer takeaway: Be careful with platforms that offer a score but cannot explain the logic, the limitations, or the intended use in plain English.

4. Review bias, accessibility, and disability impact together

This is where many teams think too narrowly.

Fairness testing matters. But governance also needs to cover accessibility, accommodation, and disability-related risk. The EEOC's AI and ADA guidance and the Labor Department's AI and Inclusive Hiring Framework both point toward the same conclusion: employers need to think about whether AI-driven hiring tools unintentionally create barriers for disabled applicants.

Your framework should require teams to ask:

Could this workflow disadvantage candidates with disabilities?

Is there a clear path to request accommodation?

Can a candidate ask for an alternative process?

Do we retest the workflow after meaningful changes?

Buyer takeaway: Accessibility cannot be an afterthought. The strongest hiring platforms are designed with candidate communication, workflow flexibility, and override paths built in from the start.

5. Define what real human oversight looks like

"Human in the loop" gets thrown around too casually.

A human clicking approve on a system output is not real oversight. Real oversight means a recruiter or hiring team member can understand the result, challenge it, override it, and escalate issues when needed. That expectation also shows up in EU guidance around high-risk AI systems under the AI Act.

Your framework should document:

Where human review is required

What reviewers are trained to look for

What authority they have to override the system

What gets logged when they do
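As one illustration of "what gets logged," here is a hedged sketch of an override record. The `OverrideRecord` fields and the rule that an override must carry a written reason are assumptions for the example, not requirements taken from any of the guidance cited in this post.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideRecord:
    # Field names are illustrative assumptions.
    use_case: str
    reviewer_role: str
    system_output: str
    reviewer_decision: str
    reason: str
    escalated: bool
    logged_at: str  # UTC timestamp in ISO 8601 format


def record_override(use_case: str, reviewer_role: str, system_output: str,
                    reviewer_decision: str, reason: str,
                    escalated: bool = False) -> str:
    """Build one JSON log line for a human override of a system output."""
    if not reason.strip():
        raise ValueError("An override must include a written reason.")
    record = OverrideRecord(
        use_case=use_case,
        reviewer_role=reviewer_role,
        system_output=system_output,
        reviewer_decision=reviewer_decision,
        reason=reason.strip(),
        escalated=escalated,
        logged_at=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    print(record_override(
        use_case="resume screening",
        reviewer_role="recruiter",
        system_output="do not advance",
        reviewer_decision="advance",
        reason="Relevant experience was listed under a non-standard heading.",
    ))
```

Requiring a reason is what separates an override from a click. It also gives you the raw material for tracking override rates later.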

Buyer takeaway: If a platform cannot support transparent review, override, and audit logging, it is not built for serious hiring environments.

6. Treat vendor review like part of governance

Most teams wait too long to ask the hard vendor questions.

By the time the contract is in redlines, the most important evaluation work should already be done. You should know what the system does, what data it relies on, what limitations the vendor discloses, how changes are handled, and what support exists for audits, documentation, and incident review.

That matters even more as regulators sharpen expectations around AI representations and data practices. The FTC's AI enforcement and guidance page is a good reminder that AI claims do not get a free pass just because the product is new.

Buyer takeaway: Look for platforms that can support governance with real documentation, not just marketing copy. That usually separates enterprise-ready products from lightweight point tools very quickly.

7. Monitor the system after launch

Governance is not finished when the tool goes live.

You need a standing review process that tracks what changed, what drifted, and what complaints or anomalies show up over time. Regulators already expect this kind of ongoing attention. New York City's AEDT rules require bias audits, candidate notices, and disclosures when covered tools are used, and California's automated-decision system employment regulations reinforce that these systems are not outside ordinary anti-discrimination requirements.

At a minimum, monitor:

Selection-rate changes

Completion-rate changes

Drop-off by stage

Override rates

Candidate complaints

Accommodation requests

Workflow changes and model updates
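Selection-rate monitoring is the easiest item on that list to automate. The sketch below computes each group's selection rate and its impact ratio, meaning the group's rate divided by the rate of the most-selected group. The 0.8 default reflects the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures. Treat it as a signal for closer review, not a legal determination, and note that the function names and data shape here are our own assumptions.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applicants, skipping groups with no applicants."""
    return {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0
    }


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(outcomes)
    top_rate = max(rates.values(), default=0.0)
    if top_rate == 0:
        return {}  # nobody was selected, so the ratio is undefined
    return {group: rate / top_rate for group, rate in rates.items()}


def groups_to_review(outcomes: dict[str, tuple[int, int]],
                     threshold: float = 0.8) -> list[str]:
    """Return groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Made-up numbers: (candidates selected, candidates who applied).
    stage_outcomes = {"group_a": (50, 100), "group_b": (30, 100)}
    print(groups_to_review(stage_outcomes))  # prints ['group_b']
```

Running the same check per stage and per time period turns "selection-rate changes" from a bullet point into something a reviewer can actually see move. Small groups produce noisy ratios, so pair any flag with a look at the underlying counts.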

Buyer takeaway: The best systems do not just automate decisions. They create an audit trail around those decisions.

What mature buyers now look for in a hiring AI platform

This is where the market is quietly shifting.

Buyers used to focus on whether the AI sounded polished. Now they are starting to ask whether the system is structured enough to hold up under scrutiny.

That usually means looking for:

Structured interview workflows instead of free-form black boxes

Clear scoring logic and documented intended use

Human override and escalation paths

Audit logs and review history

Candidate-friendly communication flows

Strong ATS and workflow integrations

Controls for fraud, identity risk, and remote hiring abuse where relevant

That is also why a lot of buyers are rethinking what "AI recruiting platform" should mean. The right system is not just the one with the most automation. It is the one that makes automation usable, reviewable, and safe inside an actual hiring process.

If this is the lens you are using, it is worth also reading AI Recruiter vs ATS, AI Interviewer RFP: What Staffing Firms Should Include Before They Buy, and AI Hiring Compliance Guide: NYC AEDT, EU AI Act, and State Laws.

A practical 90-day plan

If you are building your framework now, keep it simple.

Days 1 to 30

Inventory current tools, classify use cases by risk, and assign owners.

Days 31 to 60

Build an approval template, a vendor review checklist, a validation standard, and a monitoring plan.

Days 61 to 90

Review your highest-risk workflows first, add accommodation and override paths where needed, and set a recurring governance cadence.

The goal is not to produce a thick document. The goal is to create a system your recruiting, legal, HR, and IT teams can actually run.

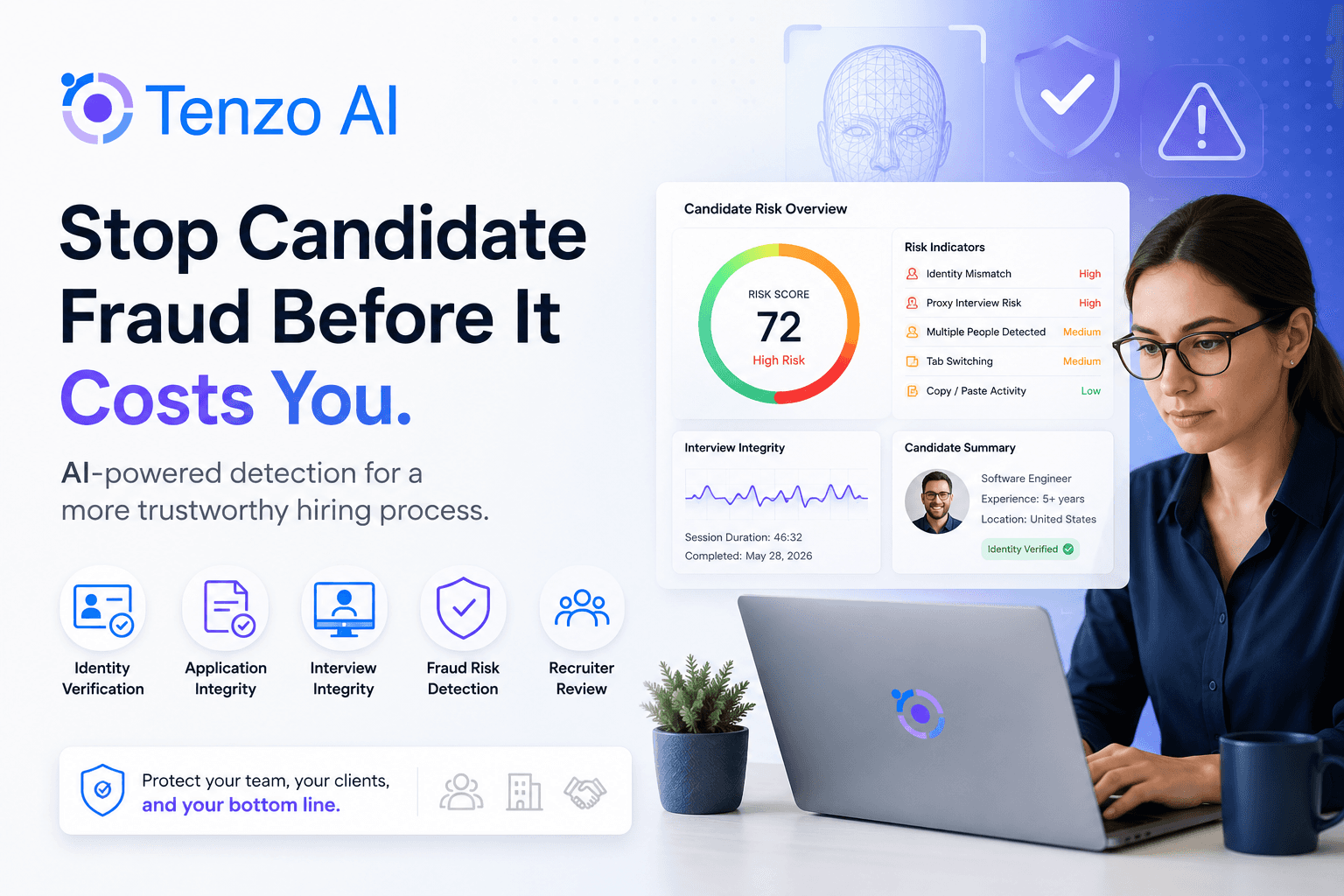

Where Tenzo fits

If you are evaluating platforms, the best question is not "Does it use AI?"

It is "Was it built for the parts of hiring where governance actually matters?"

That usually means structured candidate workflows, consistent evaluation logic, auditability, real integrations, recruiter control, candidate communication, and protections for higher-risk environments like high-volume or remote hiring.

That is where Tenzo tends to stand out.

Tenzo is strongest when buyers need hiring AI to be fast, configurable, and defensible, not just impressive in a demo. If your team is trying to modernize hiring without creating a governance mess six months later, talk to Tenzo.

Frequently asked questions

What is an AI hiring governance framework?

It is the set of rules, owners, controls, and review processes that determine how AI can be used in hiring and how risk is managed over time.

Who should own AI hiring governance?

It should be shared across recruiting, HR, legal or compliance, and technical stakeholders, with clear named owners for approval, validation, and ongoing monitoring.

What should buyers ask AI hiring vendors?

Ask how the system is validated, what human oversight exists, what documentation is provided, how changes are logged, what data is used, and how fairness, accessibility, and accommodation are handled.

The bottom line

AI hiring governance is not there to slow you down.

It is there to keep speed from turning into avoidable risk.

The teams that get this right will not just buy better AI. They will build better hiring systems around it.