AI Hiring Compliance in 2026: NYC, EU AI Act, State Laws

Researcher

AI Hiring Compliance Guide for 2026: NYC AEDT, EU AI Act, and State Laws Buyers Can't Ignore

Most teams still buy hiring AI the wrong way.

They look at automation first. Workflow second. Candidate experience third.

Compliance shows up late, usually after legal, security, or procurement asks a simple question: "Can you prove how this thing actually works?"

That is where weak tools start to wobble.

A slick demo can hide a lot. It can hide thin audit trails. It can hide vague explanations of what the model is actually evaluating. It can hide brittle accommodation workflows. It can hide a vendor that says "we help with compliance" but cannot hand over the documentation a real buyer will need once the deal gets serious.

In 2026, that gap matters more than it did a year ago. New York City's AEDT law is already operational. The EU AI Act is moving hiring AI into a more formal regulatory regime. Colorado's law takes effect in 2026 and brings a broader governance model that buyers across the U.S. should pay attention to. Meanwhile, Illinois and Maryland continue to matter for narrower but very real use cases involving video interviews and facial recognition.

The short version:

If your platform scores, ranks, filters, or evaluates candidates, assume legal and procurement teams will ask for evidence, not promises.

If your process touches NYC, you need to understand bias audit, notice, and disclosure obligations.

If you hire in Europe or buy for Europe, the EU AI Act belongs in procurement now, not later.

If your vendor relies on black-box video analysis, facial templates, or fuzzy "personality" signals, the risk profile is getting worse, not better.

What changed

The story is no longer just "AI might be biased." The story now is that regulators are starting to tell employers and vendors what they must document, what they must disclose, and in some cases what they should not use at all.

That changes buying behavior. It also changes product design.

The strongest vendors are not just trying to automate recruiter work. They are building systems that can survive legal review, security review, procurement review, and post-launch scrutiny.

| Regime | What it covers | What buyers should ask right now |
|---|---|---|
| NYC AEDT | Automated tools used to substantially assist or replace discretionary hiring or promotion decisions for candidates or employees in scope under NYC law. | Do you have a current bias audit, published audit summary, distribution date, notice flow, accommodation path, and a clean answer on data sources and retention? |
| EU AI Act | Many employment AI use cases are high-risk, especially tools that analyze applications, evaluate candidates, affect working relationships, or monitor workers. Some workplace uses, like emotion recognition, are prohibited except in narrow cases. | Can the vendor provide intended-use documentation, instructions for use, human oversight design, logging, risk controls, accuracy metrics, and a real answer on prohibited or high-risk use cases? |
| Colorado SB24-205 | Broad consumer-protection style obligations for high-risk AI systems used in consequential decisions, including employment, effective June 30, 2026 (delayed from February 1, 2026). | How will this system support impact assessments, notices, data correction, human review, appeals, and ongoing monitoring for algorithmic discrimination? |
| Illinois AIVI Act | Recorded video interviews where AI analyzes applicant-submitted videos for Illinois-based positions. | What exactly is being analyzed, what is disclosed before the interview, how is consent captured, who can access the videos, and how are deletion requests handled? |
| Maryland facial-recognition law | Use of facial recognition to create a facial template during an interview. | Are you creating a facial template at all, and if so, why is that feature in the workflow? |

NYC AEDT is still the fastest way to expose a weak hiring AI product

NYC's AEDT law is not the broadest AI law on the board. But it is still one of the clearest stress tests for whether a hiring product was built with real governance in mind.

The law defines an automated employment decision tool as a computational process that issues simplified output, such as a score, classification, or recommendation, and is used to substantially assist or replace discretionary decision-making for employment decisions. If an employer or employment agency uses an AEDT to screen in-scope candidates for employment or employees for promotion, it must have had a bias audit conducted within the prior year, publish a summary of the most recent audit along with the tool's distribution date, and provide notice at least 10 business days before use.[1]

That notice is not just "we use AI." It must tell the candidate or employee that the tool will be used, identify the job qualifications and characteristics the tool will assess, and allow a request for an alternative selection process or accommodation. If the required information about data collected, data sources, and retention policy is not already disclosed on the employer's website, it must be provided within 30 days of a written request.[1]

DCWP's guidance also makes two operational points buyers miss all the time. First, the bias audit must be completed before use and can only be relied on for one year. Second, the audit is meant to be independent. Vendors can coordinate an audit, but employers and employment agencies are still responsible for compliance.[2]
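The timing rules above lend themselves to a simple pre-launch check. The following is a minimal Python sketch, not legal advice: the function names are hypothetical, business days are assumed to be Monday through Friday with no holiday calendar, and "one year" is assumed to mean the same calendar date one year later. Confirm with counsel how your organization counts both.

```python
from datetime import date, timedelta


def add_business_days(start: date, days: int) -> date:
    """Return the date `days` business days after `start`.

    Assumption: business days are Monday-Friday; holidays are ignored.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current


def audit_is_current(audit_date: date, use_date: date) -> bool:
    """True if the bias audit was completed no more than one year before use."""
    if audit_date > use_date:
        return False  # an audit completed after use does not count
    try:
        expiry = audit_date.replace(year=audit_date.year + 1)
    except ValueError:  # audit dated February 29
        expiry = audit_date.replace(year=audit_date.year + 1, day=28)
    return use_date <= expiry


def earliest_use_date(notice_date: date, notice_days: int = 10) -> date:
    """Earliest date the tool could be used, counting 10 business days from notice."""
    return add_business_days(notice_date, notice_days)


if __name__ == "__main__":
    notice_sent = date(2026, 3, 2)  # a Monday
    planned_use = earliest_use_date(notice_sent)
    print(planned_use, audit_is_current(date(2025, 4, 1), planned_use))
```

A check like this belongs in the deployment workflow itself, so that a requisition cannot route candidates to the tool when the audit has lapsed or the notice window has not elapsed.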

What buyers get wrong: They hear "NYC AEDT" and think "our vendor handled that." That is often not enough. A vendor may help with audit inputs or documentation. The employer still owns the use case, the notices, and the decision to deploy.

What a serious buyer should ask

What counts as the tool's actual "simplified output" in our workflow?

What job qualifications or characteristics is the system evaluating, and where are those documented?

Who completed the bias audit, when was it completed, and what data was used?

What happens when a candidate requests an accommodation or an alternative selection process?

Can we easily produce our website disclosure, notice copy, data source summary, and retention policy?

Why this matters for product selection:

NYC rewards structured systems and exposes vibes-based systems. If a vendor cannot tell you exactly what the model is assessing, what the output means, and how a candidate can be routed to human review, that is not a documentation problem. That is a product problem.

The EU AI Act raises the bar far beyond "just run a bias audit"

The EU AI Act is the bigger strategic shift.

Annex III of the Act squarely identifies employment-related AI systems as high-risk where they are intended to be used for recruitment or selection, analyzing or filtering job applications, evaluating candidates, making decisions affecting terms of work, promotion or termination, allocating tasks based on behavior or characteristics, or monitoring and evaluating workers.[3]

In plain English, a lot of what the market has been calling "AI recruiting" now lives in a much more regulated category in Europe.

The Act generally applies from August 2, 2026, and that date covers the employment use cases in Annex III. There are narrow carve-outs under which an Annex III system is not treated as high-risk if it does not pose a significant risk of harm and only performs a narrow procedural or preparatory task, but those exceptions are not where most meaningful hiring tools will want to stand.[3]

There is another point buyers should not miss. The AI Act also prohibits certain AI practices, and one of them is especially relevant to hiring teams: AI systems used to infer emotions in the workplace or in educational institutions are prohibited except for narrow medical or safety uses. That prohibition applies earlier than the main body of the Act and is already part of the legal landscape buyers should be designing around.[4]

What this means in practice

If you are a provider of high-risk hiring AI, the Act points toward technical documentation, risk management, human oversight, accuracy and robustness controls, logging, instructions for use, and conformity obligations. If you are a deployer, you are not off the hook either. Deployers of high-risk AI systems must use them in line with the instructions for use, keep logs under their control for an appropriate period of at least six months, and in some contexts conduct or complement broader impact assessments.[5]
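The six-month log floor for deployers can be enforced as a guard in whatever job purges old records. This is a minimal Python sketch under stated assumptions: `add_months`, `earliest_purge_date`, and `can_purge` are hypothetical helper names, and six months is counted in calendar months with the day clamped to the end of shorter months. Other law or contract terms may require longer retention.

```python
import calendar
from datetime import date

MIN_RETENTION_MONTHS = 6  # floor described in the text; longer periods may apply


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def earliest_purge_date(log_date: date, retention_months: int = MIN_RETENTION_MONTHS) -> date:
    """First date on which a log entry created on `log_date` could be deleted."""
    if retention_months < MIN_RETENTION_MONTHS:
        raise ValueError("retention period is below the six-month floor")
    return add_months(log_date, retention_months)


def can_purge(log_date: date, today: date, retention_months: int = MIN_RETENTION_MONTHS) -> bool:
    """True once the retention period for this log entry has fully elapsed."""
    return today >= earliest_purge_date(log_date, retention_months)


if __name__ == "__main__":
    print(earliest_purge_date(date(2026, 8, 31)))
```

Rejecting a configured retention period below the floor, rather than silently raising it, makes a misconfiguration visible during review instead of after logs are gone.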

One nuance matters here. The much-discussed fundamental-rights impact assessment does not automatically apply to every private employer using hiring AI. Article 27 is targeted. It applies to deployers that are bodies governed by public law, private entities providing public services, and deployers of certain other Annex III systems, namely those used for credit scoring and for risk assessment and pricing in life and health insurance. That is a meaningful difference and one many summaries blur together.[6]

What buyers should ask vendors now:

What is the intended purpose of the system, in writing?

What uses are explicitly allowed, and what uses are explicitly precluded?

What data does the system rely on, and what features materially influence output?

How does human oversight actually work in the live workflow?

What logs are generated and retained?

Can you hand over documentation that legal and procurement can review before launch?

That last question matters more than people think. In Europe, "show me the documentation" is not a side question anymore. It is the question.

Colorado is the U.S. law enterprise buyers should be watching most closely in 2026

If NYC is the law that exposes sloppy hiring workflows, Colorado is the law that signals where broader U.S. governance may be heading.

Colorado's SB24-205 was originally set to take effect on February 1, 2026; a 2025 special-session amendment (SB25B-004) delayed the core high-risk obligations to June 30, 2026. It requires developers of high-risk AI systems to use reasonable care to protect consumers from known or reasonably foreseeable risks of algorithmic discrimination. It requires deployers to do the same. It also creates a rebuttable presumption pathway tied to actual governance practices.[7]

For deployers, that means more than a marketing claim about fairness. The law points to risk management policies, impact assessments, annual reviews, notices when a high-risk system makes or is a substantial factor in a consequential decision, opportunities to correct incorrect personal data, and opportunities to appeal adverse consequential decisions, with human review if technically feasible.[7]
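One way to turn the deployer obligations above into a vendor-evaluation step is a simple gap check. The sketch below is illustrative only: the capability names are hypothetical labels for the obligations listed in the preceding paragraph, not statutory terms, and the list is not a complete statement of the law.

```python
# Hypothetical labels for the deployer-side obligations described in the text.
REQUIRED_CAPABILITIES = {
    "risk_management_policy",
    "impact_assessment",
    "annual_review",
    "consequential_decision_notice",
    "data_correction",
    "appeal_with_human_review",
}


def missing_capabilities(supported: set[str]) -> list[str]:
    """Return the required capabilities a platform does not support, sorted by name."""
    return sorted(REQUIRED_CAPABILITIES - set(supported))


if __name__ == "__main__":
    # Example vendor answer gathered during procurement (made-up data).
    vendor_supports = {"impact_assessment", "annual_review"}
    for gap in missing_capabilities(vendor_supports):
        print("gap:", gap)
```

Recording the gaps per vendor gives legal and procurement a shared artifact to review, instead of a general assurance that the product "supports compliance."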

For hiring teams, that matters because employment is one of the consequential decision areas built into the law's structure. Even if your immediate rollout does not start in Colorado, enterprise buyers should understand the direction of travel. This is a governance model, not a one-off notice law.

Why Colorado matters beyond Colorado

Because it forces a more mature operating model.

If your platform cannot support impact assessments, candidate notice, data correction, appeals, human review, and ongoing governance, you do not just have a Colorado problem. You have a product maturity problem that will likely surface in more jurisdictions over time.

Illinois and Maryland are narrower, but they still kill bad features fast

Not every law is broad. Some are feature-specific. Those can be just as important during procurement because they reveal whether a vendor is leaning on techniques the market is already getting uncomfortable with.

Illinois: AI analysis of recorded video interviews

Illinois' Artificial Intelligence Video Interview Act applies when an employer asks applicants for positions based in Illinois to record video interviews and uses AI analysis of those applicant-submitted videos. Before the interview, the employer must notify the applicant that AI may be used, explain how the AI works and what general characteristics it uses to evaluate applicants, and obtain consent. The employer may not use AI to evaluate applicants who do not consent. The law also limits sharing of videos and requires deletion within 30 days after a request.[8]
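The Illinois requirements map naturally onto a gate in front of the analysis step plus a deletion deadline. This is a minimal Python sketch with hypothetical names (`VideoInterview`, `may_run_ai_analysis`, `deletion_deadline`); it assumes the 30 days are calendar days counted from the date the request is received.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

DELETION_WINDOW_DAYS = 30  # assumption: calendar days from receipt of the request


@dataclass
class VideoInterview:
    applicant_id: str
    notified_of_ai: bool        # applicant was told AI may be used
    explanation_given: bool     # how the AI works and what it evaluates
    consent_given: bool         # consent captured before the interview
    deletion_requested_on: Optional[date] = None


def may_run_ai_analysis(interview: VideoInterview) -> bool:
    """AI analysis is allowed only if notice, explanation, and consent are all present."""
    return (
        interview.notified_of_ai
        and interview.explanation_given
        and interview.consent_given
    )


def deletion_deadline(interview: VideoInterview) -> Optional[date]:
    """Date by which the video must be deleted, or None if no request was made."""
    if interview.deletion_requested_on is None:
        return None
    return interview.deletion_requested_on + timedelta(days=DELETION_WINDOW_DAYS)


if __name__ == "__main__":
    iv = VideoInterview("a-001", True, True, False)
    print(may_run_ai_analysis(iv))  # consent is missing, so analysis is blocked
```

The point of the gate is that an applicant who declines consent still moves through the process, just without AI evaluation of the recording.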

That should make buyers pause before adopting systems that lean heavily on recorded video analysis, especially when the vendor's explanation of what the model is measuring starts to drift into soft claims about demeanor, personality, or fit.

Maryland: facial recognition during interviews

Maryland takes aim at another specific use. An employer may not use a facial recognition service to create a facial template during an applicant interview unless the applicant consents by signing a waiver that states, in plain language, the applicant's name, the date of the interview, that the applicant consents to the use of facial recognition during the interview, and whether the applicant read the waiver.[9]
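If a workflow does use facial recognition, the waiver elements above can be checked before the interview starts. The sketch below is an assumption-laden illustration: `FacialRecognitionWaiver` and `waiver_problems` are hypothetical names, and the check covers only the elements named in the paragraph above, not whether the wording is in plain language.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class FacialRecognitionWaiver:
    applicant_name: str
    interview_date: Optional[date]
    consents_to_facial_recognition: bool
    read_waiver: Optional[bool]  # None means the waiver does not say either way
    signed: bool


def waiver_problems(waiver: FacialRecognitionWaiver) -> list[str]:
    """Return a list of missing elements; an empty list means none were found."""
    problems = []
    if not waiver.applicant_name.strip():
        problems.append("missing applicant name")
    if waiver.interview_date is None:
        problems.append("missing interview date")
    if not waiver.consents_to_facial_recognition:
        problems.append("no consent to facial recognition")
    if waiver.read_waiver is None:
        problems.append("does not state whether the applicant read the waiver")
    if not waiver.signed:
        problems.append("waiver not signed")
    return problems


if __name__ == "__main__":
    draft = FacialRecognitionWaiver("Jane Doe", None, True, None, False)
    print(waiver_problems(draft))
```

If the list is non-empty, the safest default is to disable the facial-recognition feature for that interview rather than proceed.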

That is a good example of where product teams can save themselves trouble by making simpler choices. In many hiring workflows, the cleanest move is not to use those features at all.

Federal law still matters, even when the AI law is state or city specific

One of the easiest mistakes in this market is treating AI-specific laws as the whole story.

They are not.

EEOC guidance makes clear that Title VII applies to employers' use of automated systems, including AI, to make or inform selection decisions. The agency has also issued guidance on how the ADA applies to software, algorithms, and AI used to assess applicants and employees.[10]

So even where a tool is not squarely covered by a city or state AI statute, the core employment law questions do not disappear. You still need to think about adverse impact, disability accommodations, vendor oversight, consistency, documentation, and whether a human can meaningfully review what the system is doing.

The practical rule: Passing a city-specific checklist does not make a hiring tool safe. It just means you cleared one layer. You still have to survive discrimination law, disability law, procurement scrutiny, and basic common sense.

The buying checklist smart teams are using in 2026

If you are evaluating AI hiring vendors this year, the best conversations are not about whether the bot sounds natural. They are about whether the operating model is defensible.

Ask what the system is actually deciding. Does it rank, score, filter, recommend, or just organize information for humans?

Ask what inputs drive outputs. If the answer is hand-wavy, assume the documentation will be worse under diligence.

Ask how human review works. Not whether it exists somewhere. How it actually works in the live workflow.

Ask for legal and technical documentation early. Do not wait until redlines to find out the vendor cannot produce it.

Ask how the system handles accommodations and alternative paths. This is where polished demos often fall apart.

Ask about logs, retention, and evidence. If a decision is challenged, what can you reconstruct?

Ask whether the product was built to avoid shaky features. That includes emotion inference, facial-template creation, and black-box personality claims.
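The question about logs and evidence becomes concrete once you look at what a single decision record would need to hold. The following Python sketch shows one possible shape; the field names, `DecisionRecord`, `to_log_line`, and `history_for` are all hypothetical and do not describe any particular vendor's schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    candidate_id: str
    timestamp: str                 # ISO 8601 in UTC, so string order equals time order
    system_output: str             # e.g. "score=72" or "recommend_advance"
    criteria: tuple                # job-relevant criteria the output was based on
    model_version: str
    human_reviewer: Optional[str] = None
    accommodation_requested: bool = False


def to_log_line(record: DecisionRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


def history_for(log_lines: list[str], candidate_id: str) -> list[dict]:
    """Reconstruct one candidate's decision history in chronological order."""
    records = [json.loads(line) for line in log_lines]
    matches = [r for r in records if r["candidate_id"] == candidate_id]
    return sorted(matches, key=lambda r: r["timestamp"])


if __name__ == "__main__":
    log = [
        to_log_line(DecisionRecord("c-7", "2026-03-02T15:00:00Z", "recommend_advance",
                                   ("sql", "communication"), "v1.4", "reviewer-12")),
        to_log_line(DecisionRecord("c-7", "2026-03-01T09:30:00Z", "score=72",
                                   ("sql", "communication"), "v1.4")),
    ]
    for entry in history_for(log, "c-7"):
        print(entry["timestamp"], entry["system_output"], entry["human_reviewer"])
```

If a vendor cannot produce something equivalent to `history_for` on request, that is a useful signal about how a challenged decision would be handled.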

Why structured systems are pulling ahead

There is a reason the strongest part of the market is moving toward structured, explainable workflows.

Structured systems are easier to document. Easier to audit. Easier to defend. Easier to tune around job-relevant criteria. Easier to route into human review. Easier to explain to a candidate, a regulator, or an internal legal team.

That does not magically solve compliance. But it gives you something far better than most teams have today, which is a workflow that can actually be governed.

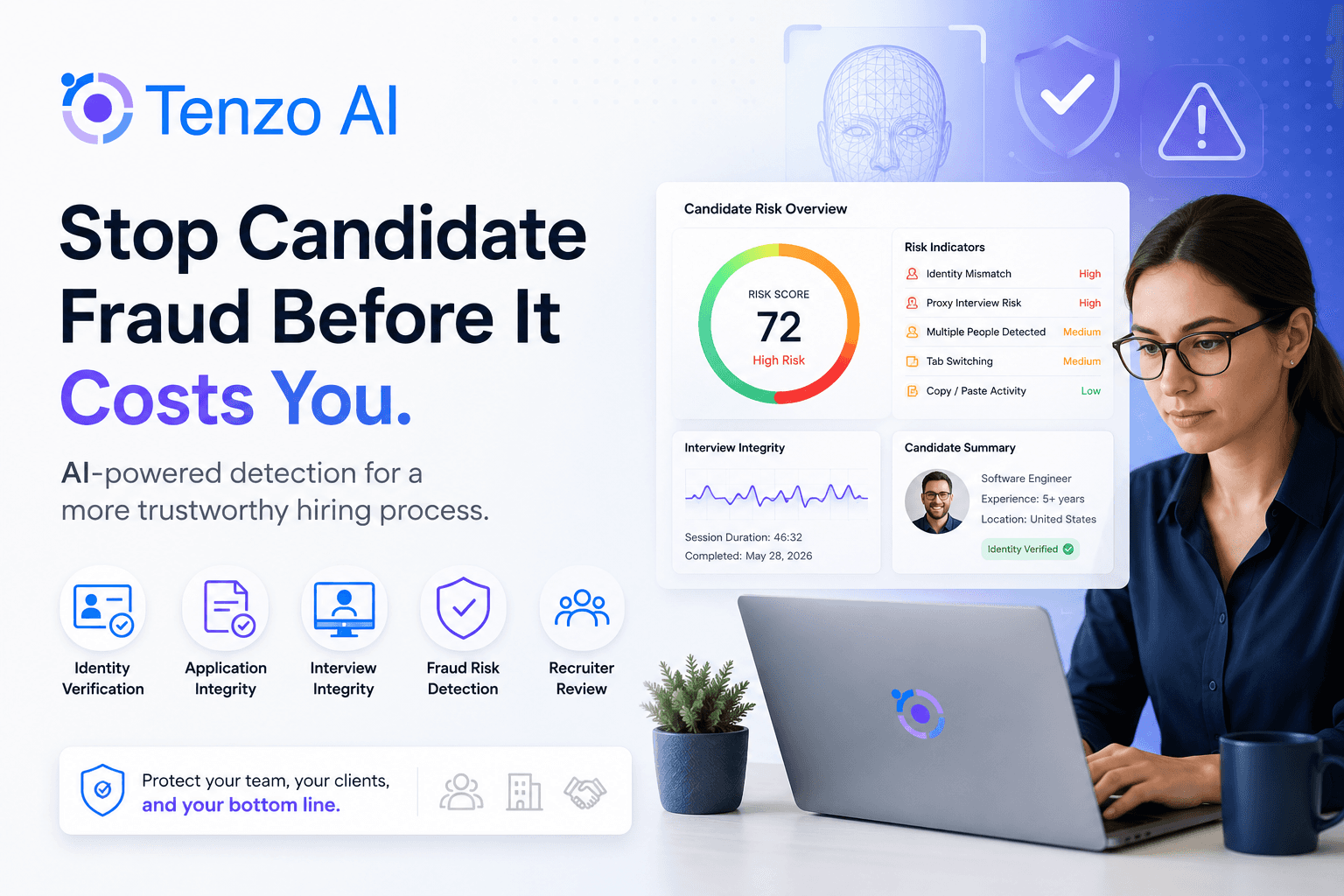

Where Tenzo AI stands out

Tenzo AI fits this shift well because the product is built around structured evaluation, operational auditability, and real-world hiring workflows, not gimmicky AI theater. For buyers, that matters. A platform is easier to deploy responsibly when it is designed to support documented criteria, logged decisions, human oversight, and enterprise-ready process control from day one.

That is also why more sophisticated buyers are becoming skeptical of vendors that still center opaque scoring, vague "fit" claims, or flashy features that legal teams immediately push back on. The market is moving toward systems that can hold up under scrutiny. Tenzo AI was built for that direction of travel.

The real question is not "Is this vendor compliant?"

The better question is this:

Will this product make my hiring operation easier to govern six months after rollout?

That is the question buyers should be asking in 2026.

Because compliance is not a badge. It is not a one-time memo. It is not a PDF tucked into a procurement folder.

It is a product and operations question.

And the vendors that win from here will be the ones that understand that.

Looking for a platform that is easier to defend, not just easier to demo?

Tenzo AI helps teams run structured AI interviewing and screening workflows with stronger auditability, clearer human oversight, and a more enterprise-ready operating model. If your buying process now includes legal, compliance, procurement, and security from day one, that is exactly the environment where the right architecture starts to matter.

FAQ

Does the EU AI Act ban AI hiring tools?

No. But it does place many hiring and employment use cases into the Act's high-risk framework, and it prohibits some practices, including emotion-recognition systems in the workplace except for narrow medical or safety uses.[3][4]

Is NYC AEDT just a vendor problem?

No. Vendors may support the audit and documentation process, but employers and employment agencies remain responsible for ensuring the tool is not used without the required audit and notices.[2]

Which U.S. law should enterprise buyers watch most closely in 2026?

Colorado is the broadest signal because it operationalizes governance, impact assessment, notice, correction, appeal, and monitoring obligations for high-risk AI systems used in consequential decisions, including employment.[7]

What kinds of features create the most immediate procurement friction?

Recorded video analysis without strong disclosure and consent controls, facial-template creation, emotion inference, unexplained scoring, and any workflow where a vendor cannot clearly describe what the system is evaluating and how human review works.[4][8][9]

Sources

[1] New York City Council, Local Law 144 text and requirements.

[2] NYC DCWP AEDT page and FAQ: https://www.nyc.gov/site/dca/about/automated-employment-decision-tools.page and https://www.nyc.gov/assets/dca/downloads/pdf/about/DCWP-AEDT-FAQ.pdf

[3] EU AI Act, Annex III employment use cases, high-risk framework, and application timeline.

[4] EU AI Act prohibition on emotion-recognition systems in the workplace.

[5] EU AI Act obligations for deployers of high-risk AI systems, including use in line with instructions and log retention.

[6] EU AI Act Article 27 on fundamental-rights impact assessments.

[7] Colorado SB24-205.

[8] Illinois Artificial Intelligence Video Interview Act: https://www.ilga.gov/Legislation/ILCS/Articles?ActID=4015&ChapterID=68&Print=True

[9] Maryland Labor and Employment Section 3-717: https://mgaleg.maryland.gov/mgawebsite/Laws/StatuteText?article=gle&enactments=false&section=3-717

[10] EEOC guidance and resources on AI in employment: https://www.eeoc.gov/history/eeoc-history-2020-2024